MQL5 Wizard techniques you should know (Part 04): Linear Discriminant Analysis

Today's trader is a philomath who is almost always looking up new ideas, trying them out, and choosing to modify or discard them; an exploratory process that requires a fair amount of diligence. This series of articles proposes that the MQL5 Wizard should be a mainstay for traders in this effort.

Neural Networks in Trading: Integrating Chaos Theory into Time Series Forecasting (Attraos)

The Attraos framework integrates chaos theory into long-term time series forecasting, treating time series as projections of multidimensional chaotic dynamical systems. Exploiting attractor invariance, the model uses phase space reconstruction and dynamic multi-resolution memory to preserve historical structures.

Neural networks made easy (Part 75): Improving the performance of trajectory prediction models

The models we create are becoming larger and more complex. This increases the costs of not only their training but also their operation. However, the time required to make a decision is often critical. In this regard, let us consider methods for optimizing model performance without loss of quality.

Build Self Optimizing Expert Advisors With MQL5 And Python (Part II): Tuning Deep Neural Networks

Machine learning models come with various adjustable parameters. In this series of articles, we will explore how to customize your AI models to fit your specific market using the SciPy library.

Population optimization algorithms: Shuffled Frog-Leaping algorithm (SFL)

The article presents a detailed description of the shuffled frog-leaping (SFL) algorithm and its capabilities in solving optimization problems. The SFL algorithm is inspired by the behavior of frogs in their natural environment and offers a new approach to function optimization. The SFL algorithm is an efficient and flexible tool capable of processing a variety of data types and achieving optimal solutions.

Trading Insights Through Volume: Trend Confirmation

The Enhanced Trend Confirmation Technique combines price action, volume analysis, and machine learning to identify genuine market movements. It requires both price breakouts and volume surges (50% above average) for trade validation, while using an LSTM neural network for additional confirmation. The system employs ATR-based position sizing and dynamic risk management, making it adaptable to various market conditions while filtering out false signals.

Neural networks made easy (Part 17): Dimensionality reduction

In this part we continue discussing Artificial Intelligence models. Namely, we study unsupervised learning algorithms. We have already discussed one of the clustering algorithms. In this article, I am sharing a variant of solving problems related to dimensionality reduction.

Data Science and ML (Part 26): The Ultimate Battle in Time Series Forecasting — LSTM vs GRU Neural Networks

In the previous article, we discussed a simple RNN which, despite its inability to understand long-term dependencies in the data, was able to produce a profitable strategy. In this article, we discuss both the Long Short-Term Memory (LSTM) and the Gated Recurrent Unit (GRU). These two were introduced to overcome the shortcomings of a simple RNN and to outsmart it.

Population optimization algorithms: ElectroMagnetism-like algorithm (EM)

The article describes the principles, methods and possibilities of using the Electromagnetic Algorithm in various optimization problems. The EM algorithm is an efficient optimization tool capable of working with large amounts of data and multidimensional functions.

MQL5 Wizard Techniques you should know (Part 80): Using Patterns of Ichimoku and the ADX-Wilder with TD3 Reinforcement Learning

This article follows up on Part 74, where we examined the pairing of Ichimoku and the ADX under a Supervised Learning framework, by moving our focus to Reinforcement Learning. Ichimoku and ADX form a complementary combination of support/resistance mapping and trend strength spotting. In this installment, we explore how the Twin Delayed Deep Deterministic Policy Gradient (TD3) algorithm can be used with this indicator set. As with earlier parts of the series, the implementation is carried out in a custom signal class designed for integration with the MQL5 Wizard, which facilitates seamless Expert Advisor assembly.

Wrapping ONNX models in classes

Object-oriented programming enables the creation of more compact code that is easy to read and modify. Here we will look at an example using three ONNX models.

Experiments with neural networks (Part 7): Passing indicators

Examples of passing indicators to a perceptron. The article describes general concepts and showcases the simplest ready-made Expert Advisor followed by the results of its optimization and forward test.

Example of Auto Optimized Take Profits and Indicator Parameters with SMA and EMA

This article presents a sophisticated Expert Advisor for forex trading, combining machine learning with technical analysis. It focuses on trading Apple stock, featuring adaptive optimization, risk management, and multiple strategies. Backtesting shows promising results with high profitability but also significant drawdowns, indicating potential for further refinement.

Integrate Your Own LLM into EA (Part 5): Develop and Test Trading Strategy with LLMs (III) – Adapter-Tuning

With the rapid development of artificial intelligence today, large language models (LLMs) are an important part of artificial intelligence, so we should think about how to integrate powerful LLMs into our algorithmic trading. For most people, it is difficult to fine-tune these powerful models according to their needs, deploy them locally, and then apply them to algorithmic trading. This series of articles will take a step-by-step approach to achieve this goal.

Analyzing all price movement options on the IBM quantum computer

We will use a quantum computer from IBM to discover all price movement options. Sounds like science fiction? Welcome to the world of quantum computing for trading!

Integrating ML models with the Strategy Tester (Conclusion): Implementing a regression model for price prediction

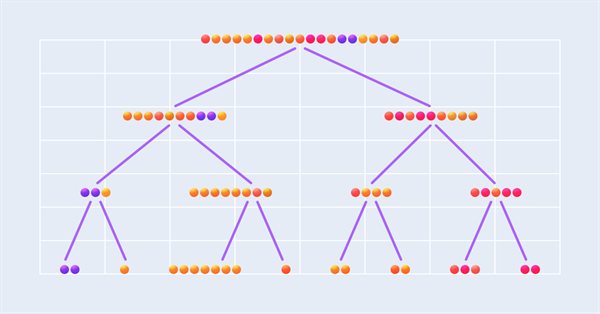

This article describes the implementation of a regression model based on a decision tree. The model should predict prices of financial assets. We have already prepared the data, trained and evaluated the model, as well as adjusted and optimized it. However, it is important to note that this model is intended for study purposes only and should not be used in real trading.

Expert Advisor based on the universal MLP approximator

The article presents a simple and accessible way to use a neural network in a trading EA that does not require deep knowledge of machine learning. The method eliminates target function normalization and overcomes the "weight explosion" and "network stall" issues, offering intuitive training and visual control of the results.

Neural networks made easy (Part 58): Decision Transformer (DT)

We continue to explore reinforcement learning methods. In this article, I will focus on a slightly different algorithm that considers the Agent’s policy in the paradigm of constructing a sequence of actions.

Overcoming ONNX Integration Challenges

ONNX is a great tool for integrating complex AI code between different platforms, but it comes with some challenges that one must address to get the most out of it. In this article, we discuss the common issues you might face and how to mitigate them.

Neural networks made easy (Part 23): Building a tool for Transfer Learning

In this series of articles, we have already mentioned Transfer Learning more than once. However, we only mentioned it in passing. In this article, I suggest filling this gap and taking a closer look at Transfer Learning.

Non-linear regression models on the stock exchange

Non-linear regression models on the stock exchange: Is it possible to predict financial markets? Let's consider creating a model for forecasting prices for EURUSD, and make two robots based on it - in Python and MQL5.

Neural networks made easy (Part 73): AutoBots for predicting price movements

We continue to discuss algorithms for training trajectory prediction models. In this article, we will get acquainted with a method called "AutoBots".

Integrate Your Own LLM into EA (Part 2): Example of Environment Deployment

With the rapid development of artificial intelligence today, large language models (LLMs) are an important part of artificial intelligence, so we should think about how to integrate powerful LLMs into our algorithmic trading. For most people, it is difficult to fine-tune these powerful models according to their needs, deploy them locally, and then apply them to algorithmic trading. This series of articles will take a step-by-step approach to achieve this goal.

Data label for timeseries mining (Part 2):Make datasets with trend markers using Python

This series of articles introduces several time series labeling methods that can produce data suited to most artificial intelligence models. Labeling data according to specific needs can bring the trained model more in line with the expected design, improve its accuracy, and even help it make a qualitative leap!

Data Science and Machine Learning (Part 25): Forex Timeseries Forecasting Using a Recurrent Neural Network (RNN)

Recurrent neural networks (RNNs) excel at leveraging past information to predict future events. Their remarkable predictive capabilities have been applied across various domains with great success. In this article, we will deploy RNN models to predict trends in the forex market, demonstrating their potential to enhance forecasting accuracy in forex trading.

MetaTrader 5 Machine Learning Blueprint (Part 8): Bayesian Hyperparameter Optimization with Purged Cross-Validation and Trial Pruning

GridSearchCV and RandomizedSearchCV share a fundamental limitation in financial ML: each trial is independent, so search quality does not improve with additional compute. This article integrates Optuna — using the Tree-structured Parzen Estimator — with PurgedKFold cross-validation, HyperbandPruner early stopping, and a dual-weight convention that separates training weights from evaluation weights. The result is a five-component system: an objective function with fold-level pruning, a suggestion layer that optimizes the weighting scheme jointly with model hyperparameters, a financially-calibrated pruner, a resumable SQLite-backed orchestrator, and a converter to scikit-learn cv_results_ format. The article also establishes the boundary — drawn from Timothy Masters — between statistical objectives where directed search is beneficial and financial objectives where it is harmful.

Data Science and ML(Part 30): The Power Couple for Predicting the Stock Market, Convolutional Neural Networks(CNNs) and Recurrent Neural Networks(RNNs)

In this article, we explore the dynamic integration of Convolutional Neural Networks (CNNs) and Recurrent Neural Networks (RNNs) in stock market prediction. By leveraging CNNs' ability to extract patterns and RNNs' proficiency in handling sequential data, we will see how this powerful combination can enhance the accuracy and efficiency of trading algorithms.

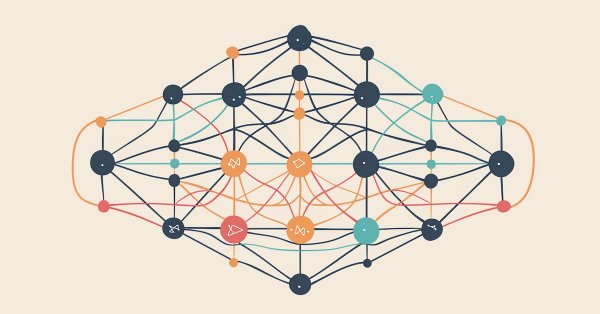

Neuro-symbolic systems in algorithmic trading: Combining symbolic rules and neural networks

The article describes the experience of developing a hybrid trading system that combines classical technical analysis with neural networks. The author provides a detailed analysis of the system architecture from basic pattern analysis and neural network structure to the mechanisms behind trading decisions, and shares real code and practical observations.

William Gann methods (Part III): Does Astrology Work?

Do the positions of planets and stars affect financial markets? Let's arm ourselves with statistics and big data, and embark on an exciting journey into the world where stars and stock charts intersect.

Neural Networks Made Easy (Part 88): Time-Series Dense Encoder (TiDE)

In an attempt to obtain the most accurate forecasts, researchers often complicate forecasting models, which in turn leads to increased model training and maintenance costs. Is such an increase always justified? This article introduces an algorithm that uses the simplicity and speed of linear models and demonstrates results on par with the best models with a more complex architecture.

Neural networks made easy (Part 20): Autoencoders

We continue to study unsupervised learning algorithms. Some readers might have questions regarding the relevance of recent publications to the topic of neural networks. In this new article, we get back to studying neural networks.

Neural Networks in Trading: An Ensemble of Agents with Attention Mechanisms (Final Part)

In the previous article, we introduced the multi-agent adaptive framework MASAAT, which uses an ensemble of agents to perform cross-analysis of multimodal time series at different data scales. Today we will continue implementing the approaches of this framework in MQL5 and bring this work to a logical conclusion.

Self Optimizing Expert Advisors in MQL5 (Part 17): Ensemble Intelligence

All algorithmic trading strategies are difficult to set up and maintain, regardless of complexity—a challenge shared by beginners and experts alike. This article introduces an ensemble framework where supervised models and human intuition work together to overcome their shared limitations. By aligning a moving average channel strategy with a Ridge Regression model on the same indicators, we achieve centralized control, faster self-correction, and profitability from otherwise unprofitable systems.

Neural Networks in Trading: Optimizing the Transformer for Time Series Forecasting (LSEAttention)

The LSEAttention framework offers improvements to the Transformer architecture. It was designed specifically for long-term multivariate time series forecasting. The approaches proposed by the authors of the method can be applied to solve the problems of entropy collapse and learning instability, which are often encountered with the vanilla Transformer.

Data Science and ML (Part 42): Forex Time series Forecasting using ARIMA in Python, Everything you need to Know

ARIMA, short for Auto Regressive Integrated Moving Average, is a powerful traditional time series forecasting model. With the ability to detect spikes and fluctuations in time series data, this model can make accurate predictions on the next values. In this article, we are going to understand what it is, how it operates, what you can do with it when it comes to predicting the next prices in the market with high accuracy, and much more.

MQL5 Wizard Techniques you should know (Part 71): Using Patterns of MACD and the OBV

The Moving-Average-Convergence-Divergence (MACD) oscillator and the On-Balance-Volume (OBV) oscillator are another pair of indicators that could be used in conjunction within an MQL5 Expert Advisor. This pairing, as is the practice in this article series, is complementary, with the MACD affirming trends while the OBV checks volume. As usual, we use the MQL5 Wizard to build and test any potential these two may possess.

Neural Networks in Trading: Hybrid Graph Sequence Models (GSM++)

Hybrid graph sequence models (GSM++) combine the advantages of different architectures to provide high-fidelity data analysis and optimized computational costs. These models adapt effectively to dynamic market data, improving the presentation and processing of financial information.

Neural networks made easy (Part 53): Reward decomposition

We have already talked more than once about the importance of correctly selecting the reward function, which we use to stimulate the desired behavior of the Agent by adding rewards or penalties for individual actions. But the question remains open about how the Agent deciphers our signals. In this article, we will talk about reward decomposition in terms of transmitting individual signals to the trained Agent.

Experiments with neural networks (Part 4): Templates

In this article, I will use experimentation and non-standard approaches to develop a profitable trading system and check whether neural networks can be of any help for traders. MetaTrader 5 serves as a self-sufficient tool for using neural networks in trading. A simple explanation is provided.

Neural networks made easy (Part 66): Exploration problems in offline learning

Models are trained offline using data from a prepared training dataset. While this provides certain advantages, its downside is that information about the environment is greatly compressed to the size of the training dataset, which in turn limits the possibilities for exploration. In this article, we will consider a method that enables the filling of a training dataset with the most diverse data possible.