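Classification Models in the Scikit-Learn Library and Their Export to ONNX

Advances in technology have brought a fundamentally new approach to building data-processing algorithms. Previously, every specific task required an explicit formalization and a purpose-built algorithm.

In machine learning, the computer learns on its own to find the best way of processing data. Machine learning models can successfully solve classification tasks (where there is a fixed set of classes and the goal is to find the probability that a given set of features belongs to each class) and regression tasks (where the goal is to estimate the numerical value of a target variable from a given set of features). More complex data-processing models can be built from these basic components.

The Scikit-learn library provides many tools for both classification and regression. The choice of method and model depends on the characteristics of the data, because different methods vary in effectiveness and can give different results depending on the task.

The press release "ONNX Runtime is now open source" states that ONNX Runtime also supports the ONNX-ML profile:

The ONNX-ML profile is the part of ONNX designed specifically for machine learning (ML) models. It is intended to describe and represent various types of ML models, such as classification, regression, and clustering, in a convenient format that can be used on the platforms and environments that support ONNX. The ONNX-ML profile simplifies the transfer, deployment, and execution of machine learning models, making them more accessible and portable.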

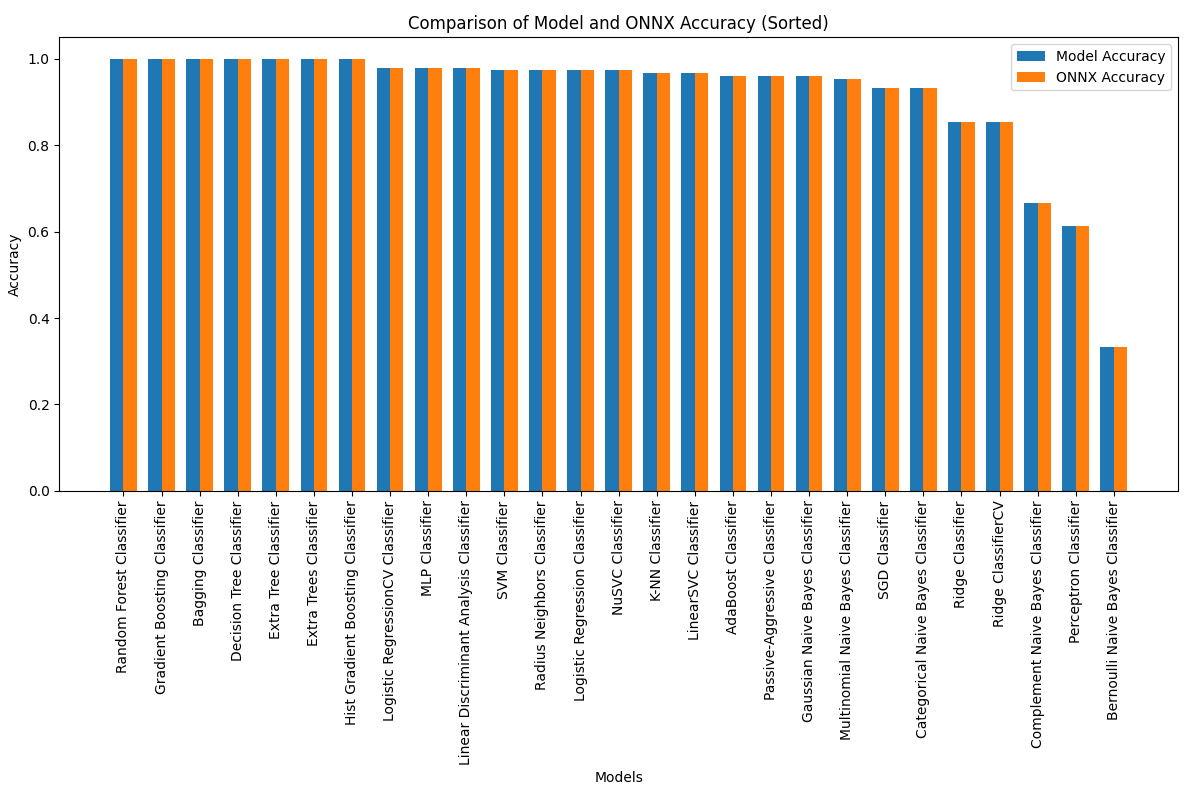

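In this article, we explore how all the classification models in the Scikit-learn package handle Fisher's Iris classification task. We also try to convert these models to ONNX format and use the resulting models in MQL5 programs.

In addition, we compare the accuracy of the original models with that of their ONNX versions on the full Iris dataset.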

目录

- 1.费舍尔的鸢尾花

- 2.分类模型

Scikit-learn 分类器列表

模型的不同输出表示 iris.mqh - 2.1.SVC Classifier

2.1.1.创建 SVC Classifier 模型的代码

2.1.2.用于处理 SVC Classifier 模型的 MQL5 代码

2.1.3.SVC Classifier 模型的 ONNX 表示 - 2.2.LinearSVC Classifier

2.2.1.创建 LinearSVC Classifier 模型的代码

2.2.2.用于处理 LinearSVC Classifier 模型的 MQL5 代码

2.2.3.LinearSVC Classifier 模型的 ONNX 表示 - 2.3.NuSVC Classifier

2.3.1.创建 NuSVC Classifier 模型的代码

2.3.2.用于处理 NuSVC Classifier 模型的 MQL5 代码

2.3.3.NuSVC Classifier 模型的 ONNX 表示 - 2.4.Radius Neighbors Classifier

2.4.1.创建 Radius Neighbors Classifier 模型的代码

2.4.2.用于处理 Radius Neighbors Classifier 模型的 MQL5 代码

2.3.3.Radius Neighbors Classifier 模型的 ONNX 表示 - 2.5.Ridge Classifier

2.5.1.创建 Ridge Classifier 模型的代码

2.5.2.用于处理 Ridge Classifier 模型的 MQL5 代码

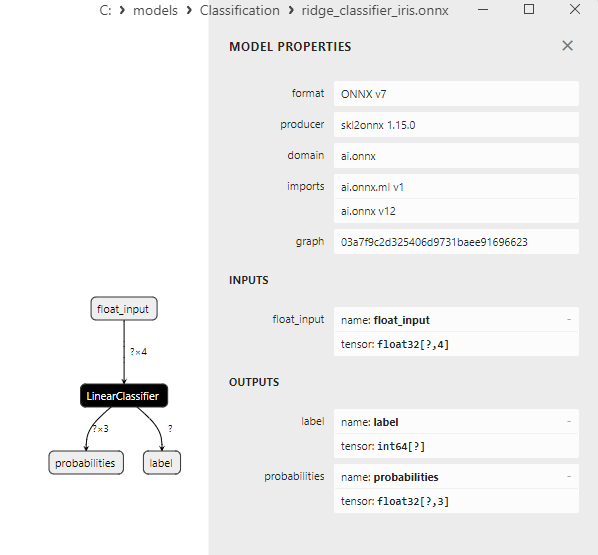

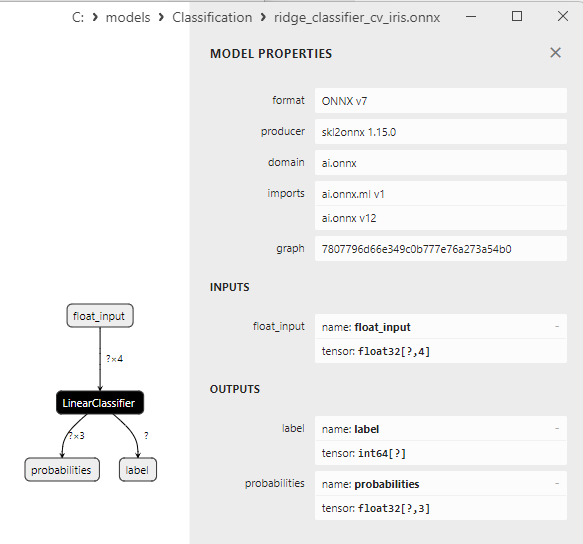

2.5.3.Ridge Classifier 模型的 ONNX 表示 - 2.6.RidgeClassifierCV

2.6.1.创建 Ridge ClassifierCV 模型的代码

2.6.2.用于处理 Ridge ClassifierCV 模型的 MQL5 代码

2.6.3.Ridge ClassifierCV 模型的 ONNX 表示 - 2.7.Random Forest Classifier

2.7.1.创建 Random Forest Classifier 模型的代码

2.7.2.用于处理 Random Forest Classifier 模型的 MQL5 代码

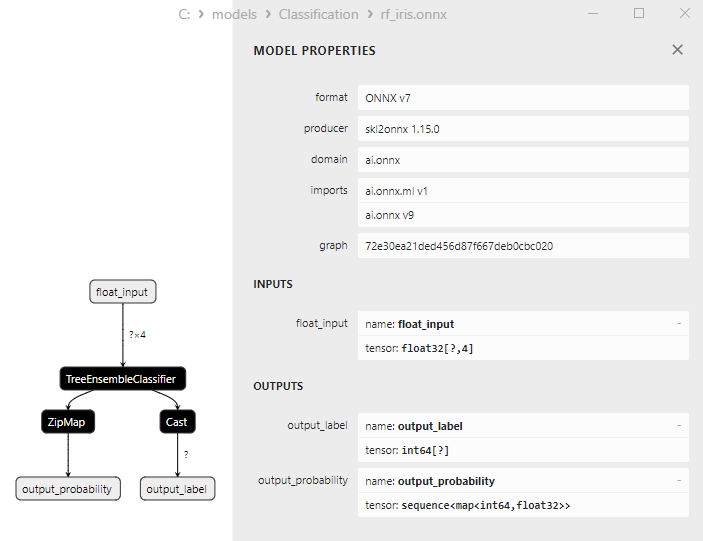

2.7.3.Random Forest Classifier 模型的 ONNX 表示 - 2.8.Gradient Boosting Classifier

2.8.1.创建 Gradient Boosting Classifier 模型的代码

2.8.2.用于处理 Gradient Boosting Classifier 模型的 MQL5 代码

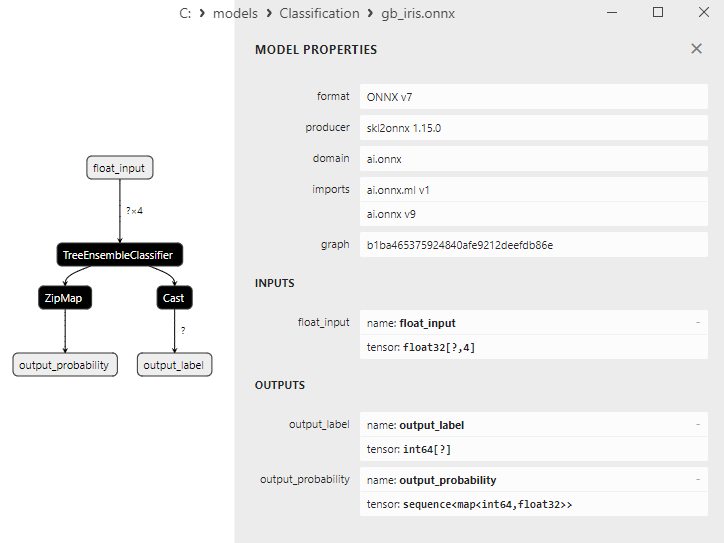

2.8.3.Gradient Boosting Classifier 模型的 ONNX 表示 - 2.9.Adaptive Boosting Classifier

2.9.1.创建 Adaptive Boosting Classifier 模型的代码

2.9.2.用于处理 Adaptive Boosting Classifier 模型的 MQL5 代码

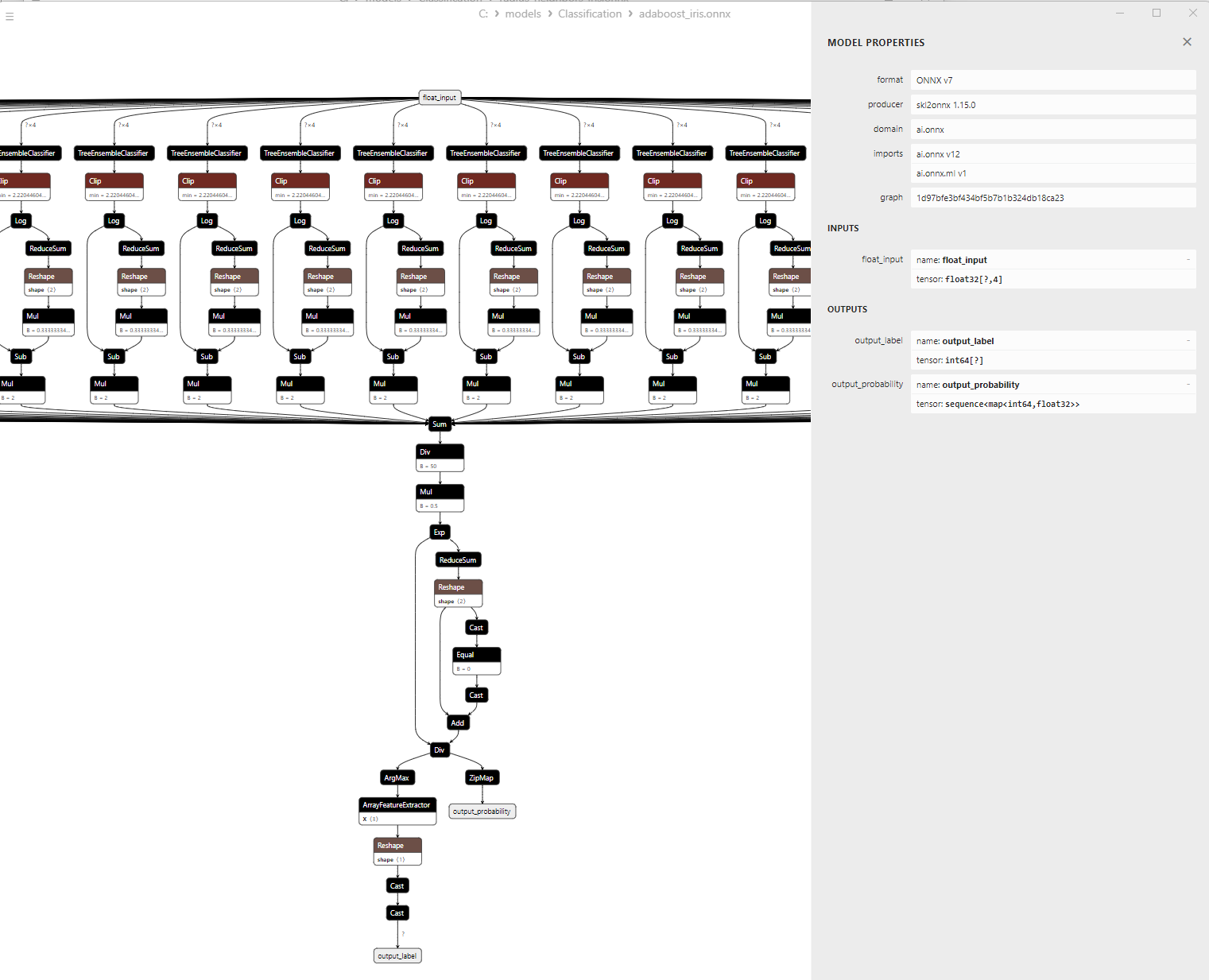

2.9.3.Adaptive Boosting Classifier 模型的 ONNX 表示 - 2.10.Bootstrap Aggregating Classifier

2.10.1.创建 Bootstrap Aggregating Classifier 模型的代码

2.10.2.用于处理 Bootstrap Aggregating Classifier 模型的 MQL5 代码

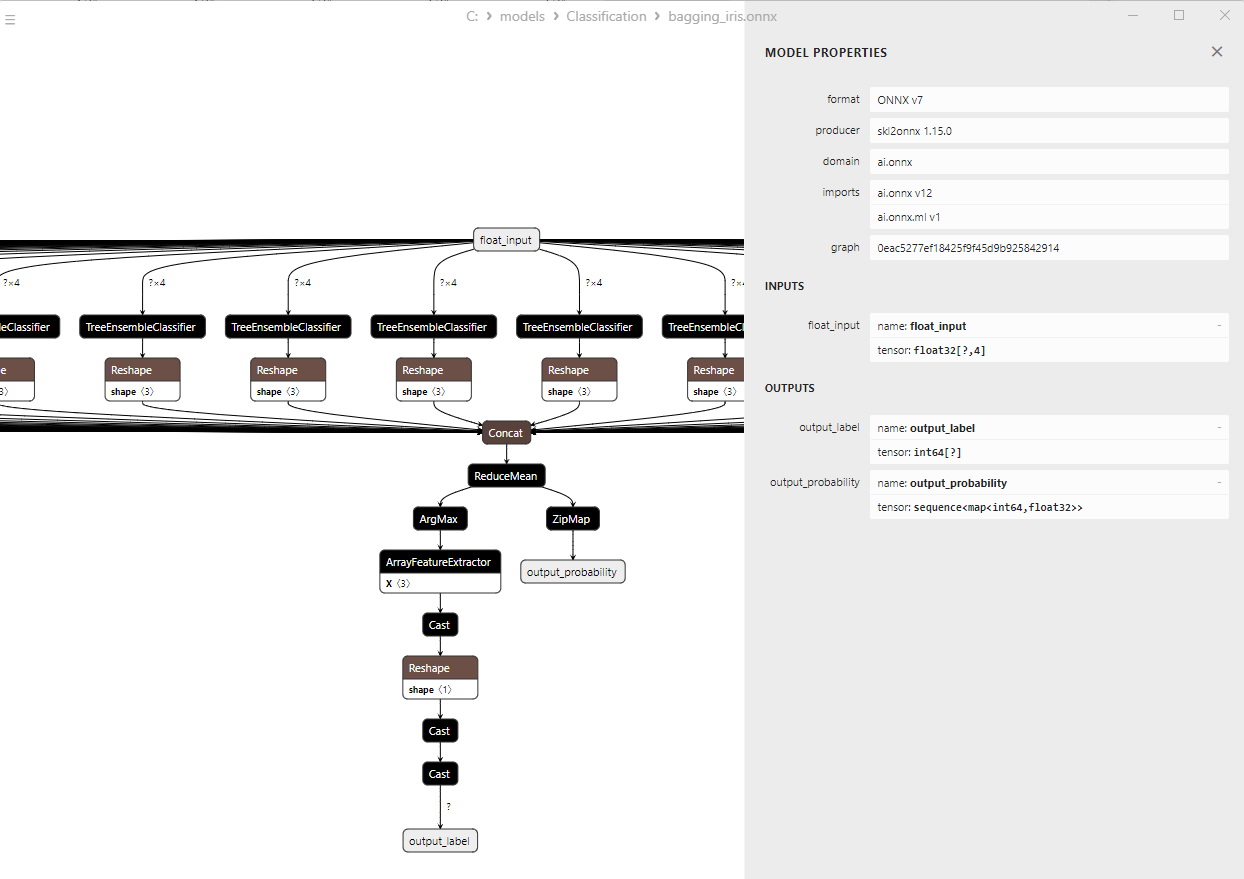

2.10.3.Bootstrap Aggregating Classifier 模型的 ONNX 表示 - 2.11.K-Nearest Neighbors (K-NN) Classifier

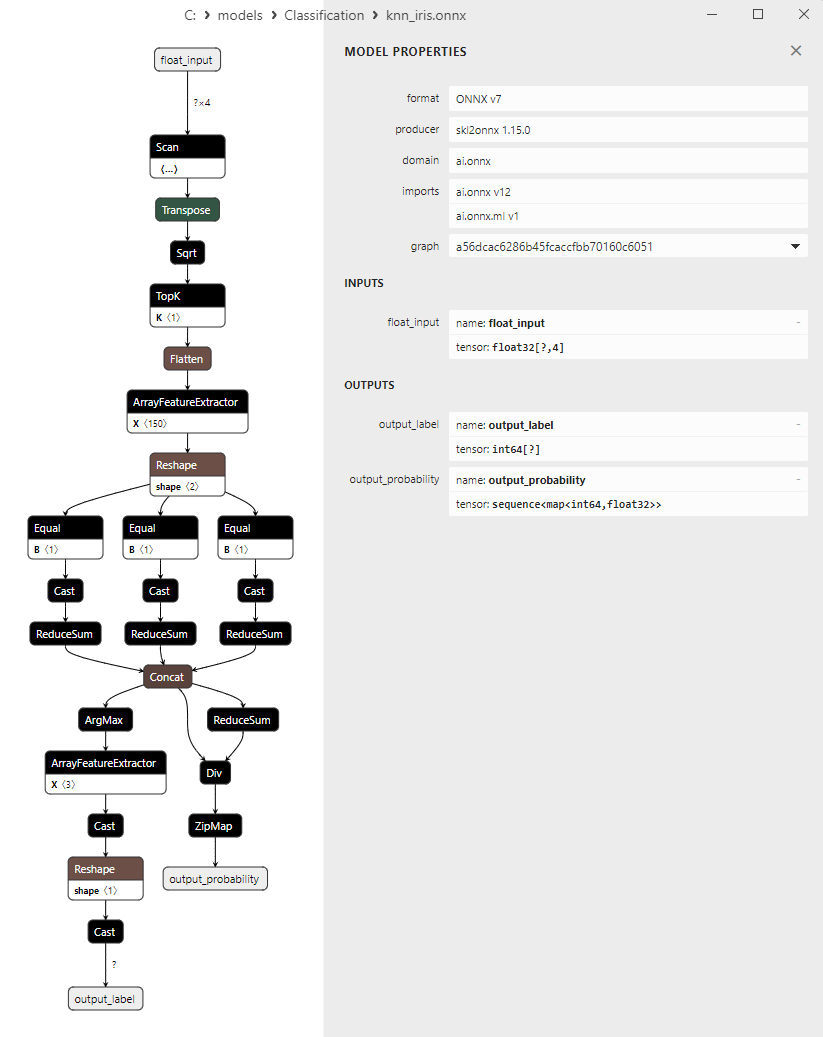

2.11.1.创建 K-Nearest Neighbors (K-NN) Classifier 模型的代码

2.11.2.用于处理 K-Nearest Neighbors (K-NN) Classifier 模型的 MQL5 代码

2.11.3.K-Nearest Neighbors (K-NN) Classifier 模型的 ONNX 表示 - 2.12.Decision Tree Classifier

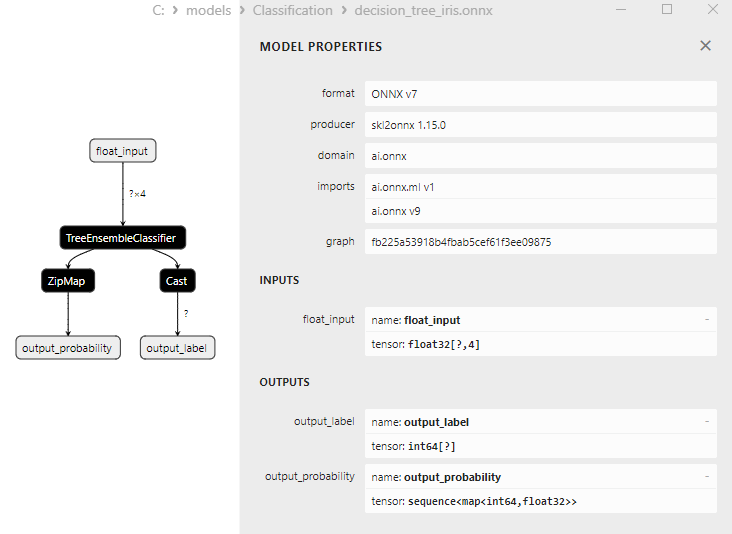

2.12.1.创建 Decision Tree Classifier 模型的代码

2.12.2.用于处理 Decision Tree Classifier 模型的 MQL5 代码

2.12.3.Decision Tree Classifier 模型的 ONNX 表示 - 2.13.Logistic Regression Classifier

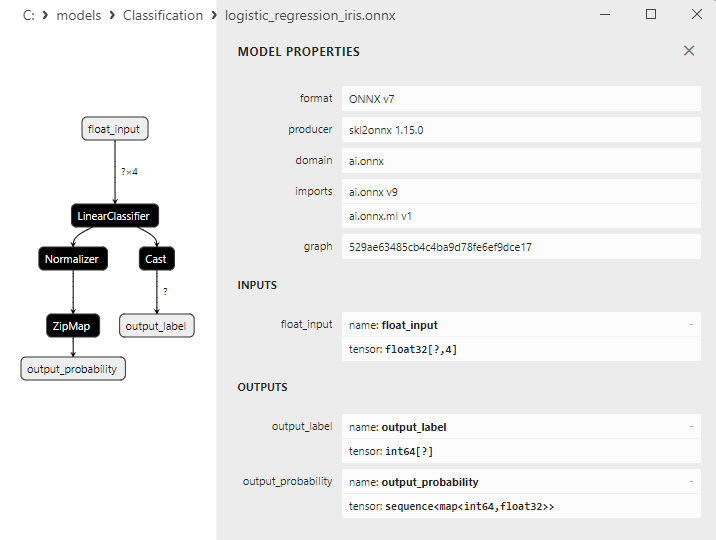

2.13.1.创建 Logistic Regression Classifier 模型的代码

2.13.2.用于处理 Logistic Regression Classifier 模型的 MQL5 代码

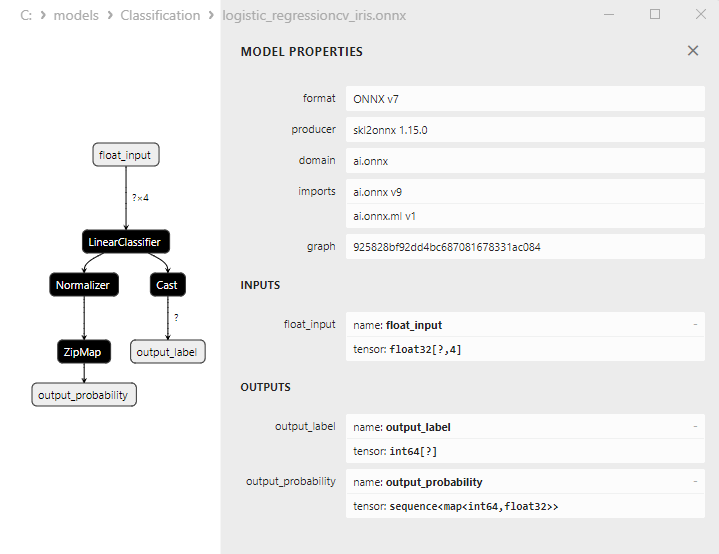

2.13.3.Logistic Regression Classifier 模型的 ONNX 表示 - 2.14.LogisticRegressionCV Classifier

2.14.1.创建 LogisticRegressionCV Classifier 模型的代码

2.14.2.用于处理 LogisticRegressionCV Classifier 模型的 MQL5 代码

2.14.3.LogisticRegressionCV Classifier 模型的 ONNX 表示 - 2.15.Passive-Aggressive (PA) Classifier

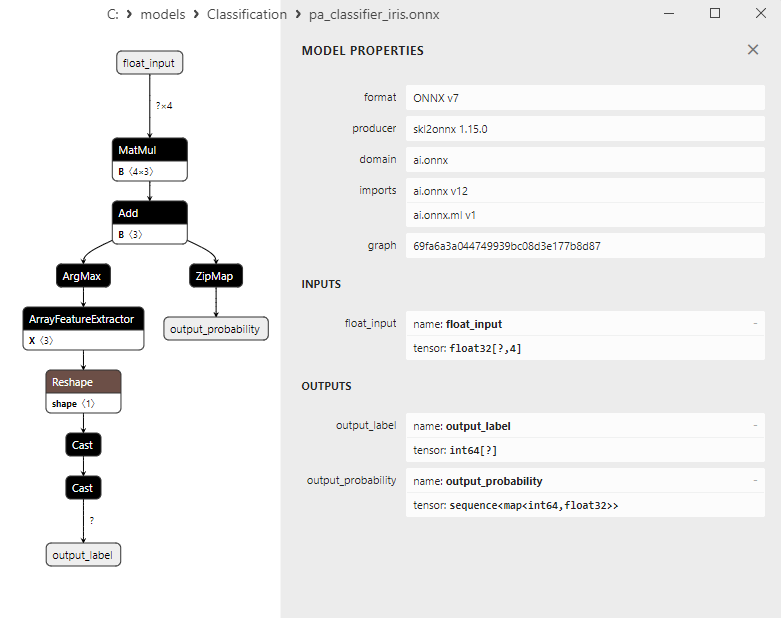

2.15.1.创建 Passive-Aggressive (PA) Classifier 模型的代码

2.15.2.用于处理 Passive-Aggressive (PA) Classifier 模型的 MQL5 代码

2.15.3.Passive-Aggressive (PA) Classifier 模型的 ONNX 表示 - 2.16.Perceptron Classifier

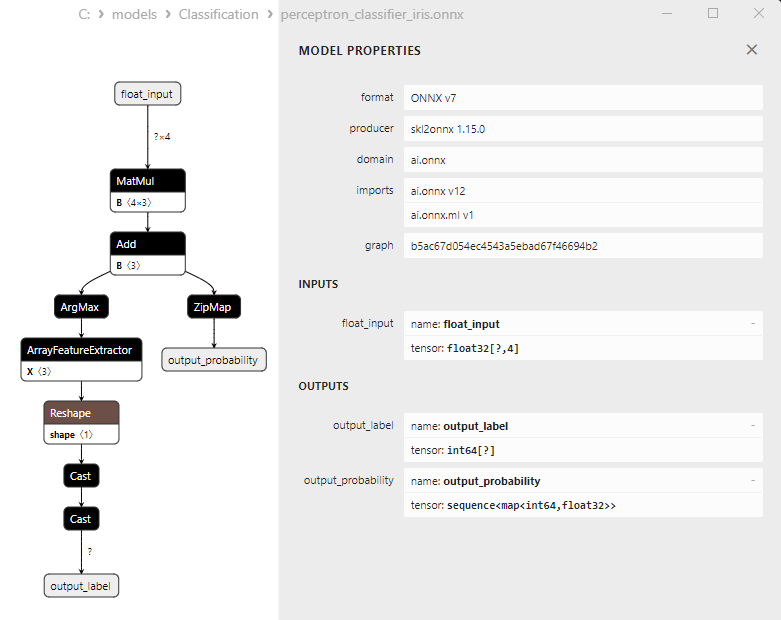

2.16.1.创建 Perceptron Classifier 模型的代码

2.16.2.用于处理 Perceptron Classifier 模型的 MQL5 代码

2.16.3.Perceptron Classifier 模型的 ONNX 表示 - 2.17.Stochastic Gradient Descent Classifier

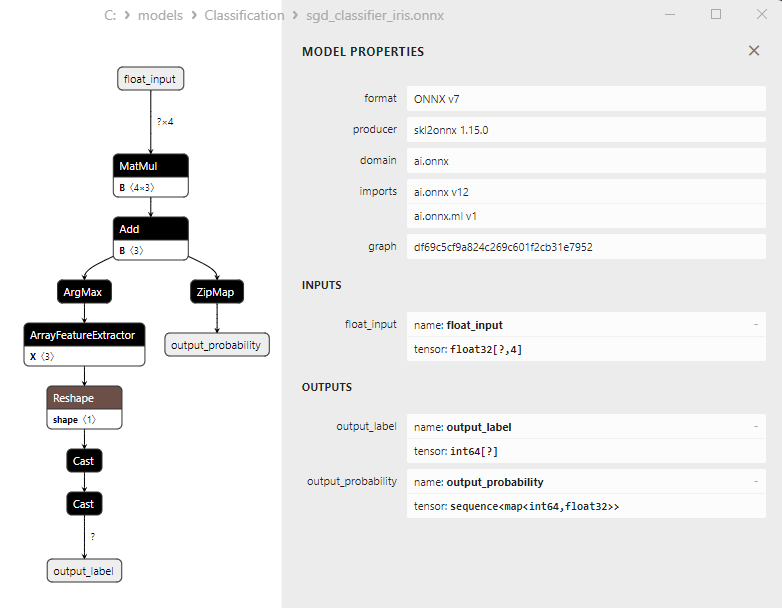

2.17.1.创建 Stochastic Gradient Descent Classifier 模型的代码

2.17.2.用于处理 Stochastic Gradient Descent Classifier 模型的 MQL5 代码

2.17.3.Stochastic Gradient Descent Classifier 模型的 ONNX 表示 - 2.18.Gaussian Naive Bayes (GNB) Classifier

2.18.1.创建 Gaussian Naive Bayes (GNB) Classifier 模型的代码

2.18.2.用于处理 Gaussian Naive Bayes (GNB) Classifier 模型的 MQL5 代码

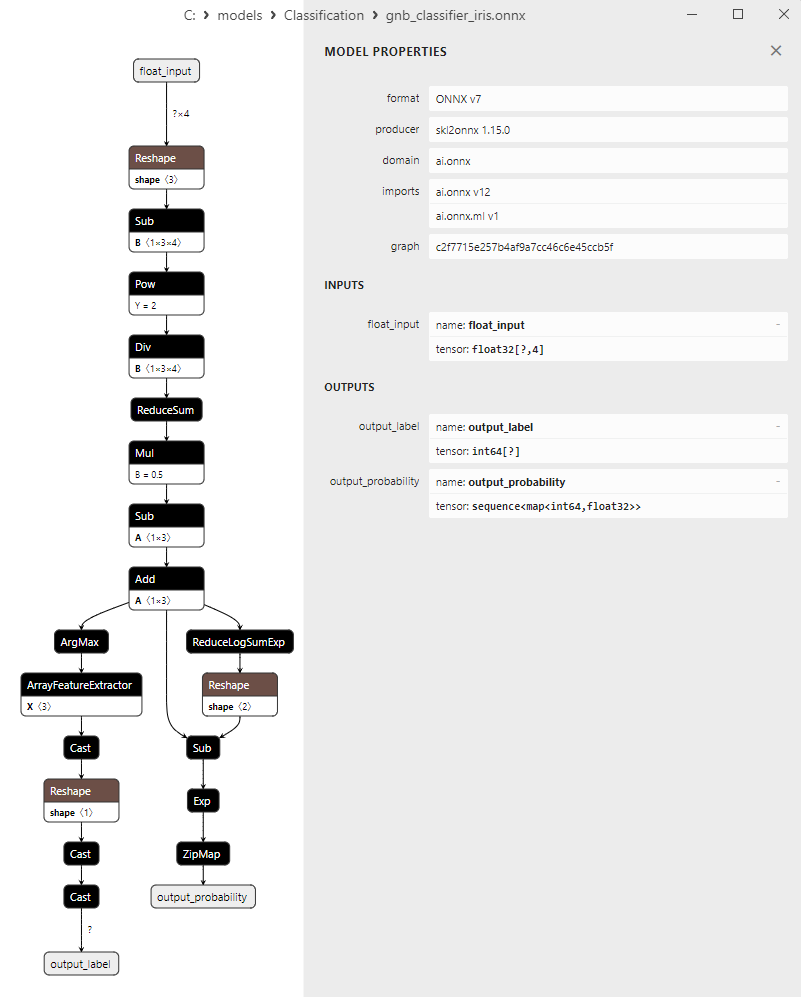

2.18.3.Gaussian Naive Bayes (GNB) Classifier 模型的 ONNX 表示 - 2.19.Multinomial Naive Bayes (MNB) Classifier

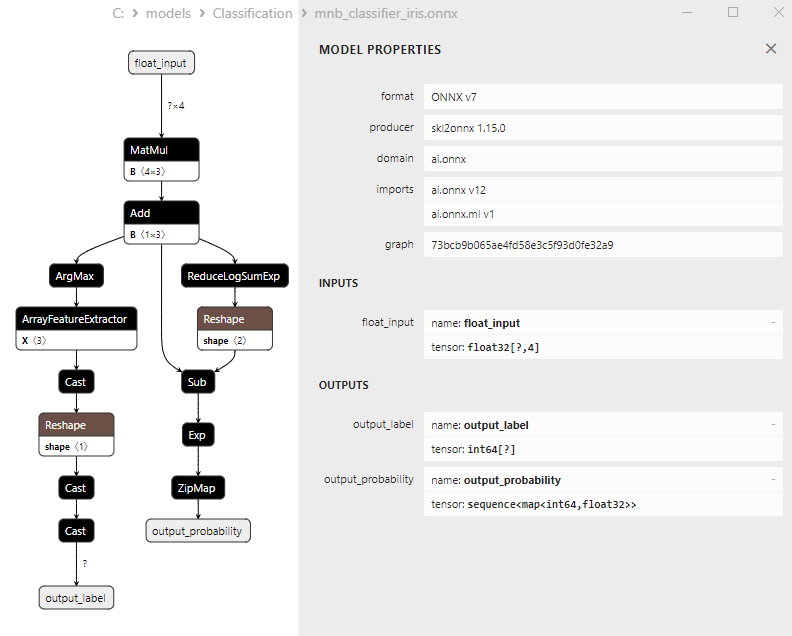

2.19.1.创建 Multinomial Naive Bayes (MNB) Classifier 模型的代码

2.19.2.用于处理 Multinomial Naive Bayes (MNB) Classifier 模型的 MQL5 代码

2.19.3.Multinomial Naive Bayes (MNB) Classifier 模型的 ONNX 表示 - 2.20.Complement Naive Bayes (CNB) Classifier

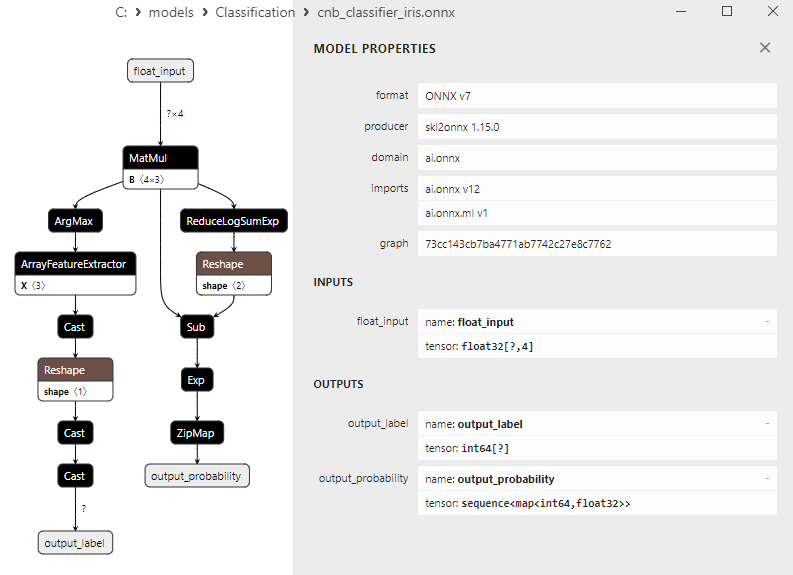

2.20.1.创建 Complement Naive Bayes (CNB) Classifier 模型的代码

2.20.2.用于处理 Complement Naive Bayes (CNB) Classifier 模型的 MQL5 代码

2.20.3.Complement Naive Bayes (CNB) Classifier 模型的 ONNX 表示 - 2.21.Bernoulli Naive Bayes (BNB) Classifier

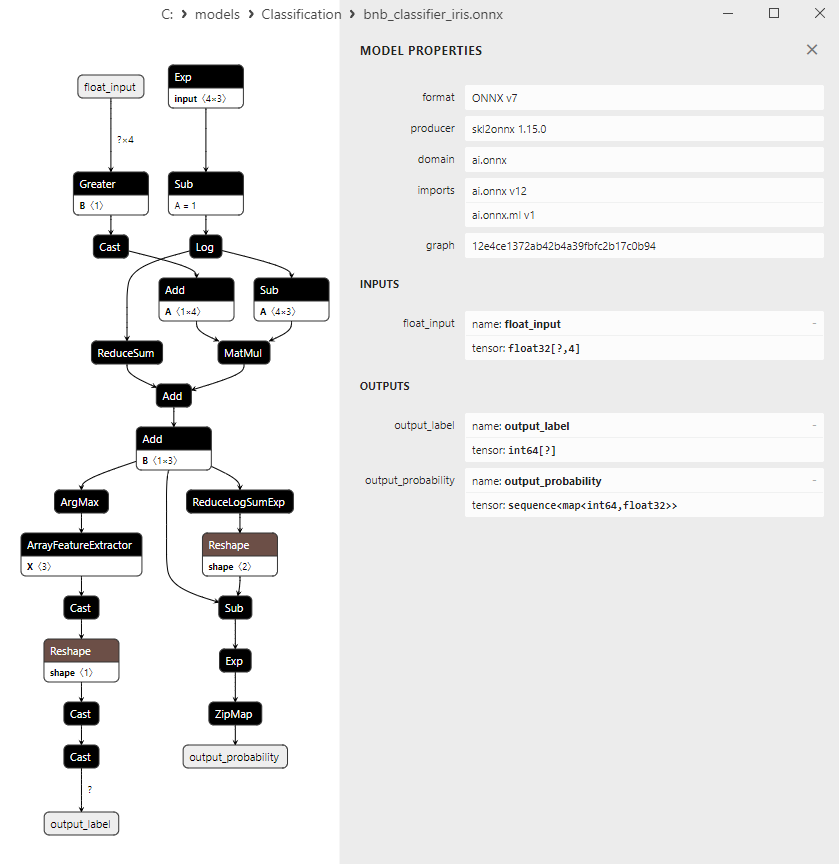

2.21.1.创建 Bernoulli Naive Bayes (BNB) Classifier 模型的代码

2.21.2.用于处理 Bernoulli Naive Bayes (BNB) Classifier 的 MQL5 代码

2.21.3.Bernoulli Naive Bayes (BNB) Classifier 模型的 ONNX 表示 - 2.22.Multilayer Perceptron Classifier

2.22.1.创建 Multilayer Perceptron Classifier 模型的代码

2.22.2.用于处理 Multilayer Perceptron Classifier 模型的 MQL5 代码

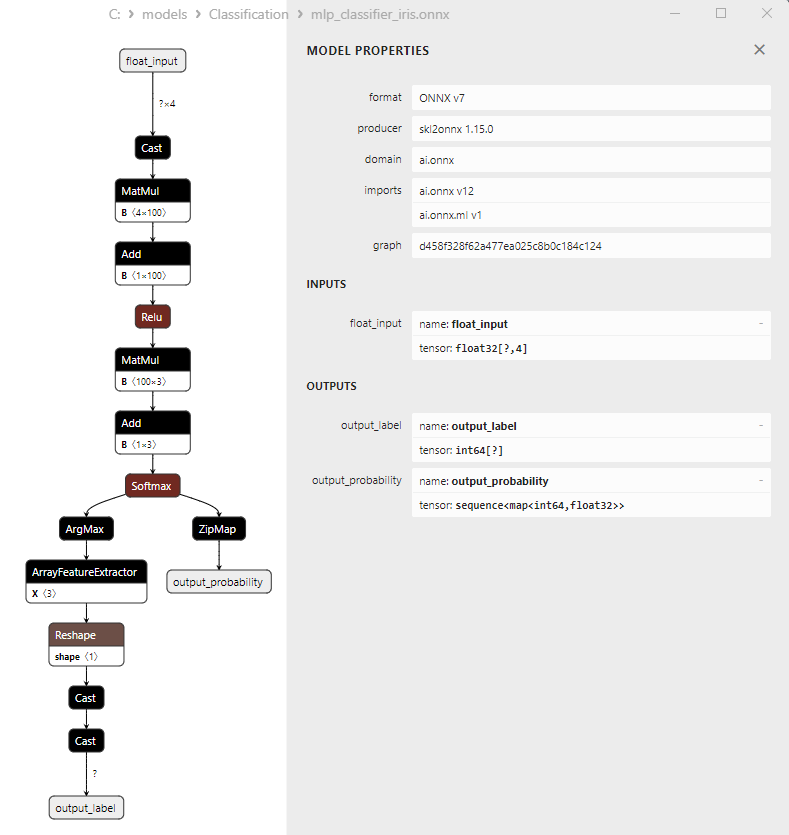

2.22.3.Multilayer Perceptron Classifier 模型的 ONNX 表示 - 2.23.Linear Discriminant Analysis (LDA) Classifier

2.23.1.创建 Linear Discriminant Analysis (LDA) Classifier 模型的代码

2.23.2.用于处理 Linear Discriminant Analysis (LDA) Classifier 模型的 MQL5 代码

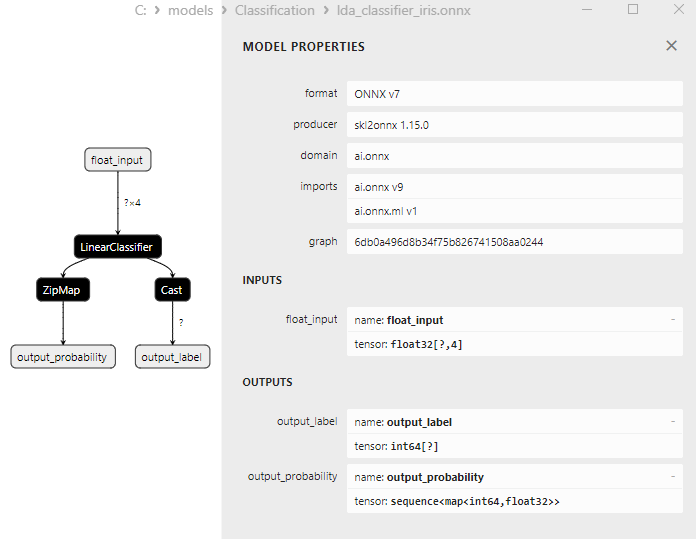

2.23.3.Linear Discriminant Analysis (LDA) Classifier 模型的 ONNX 表示 - 2.24.Hist Gradient Boosting

2.24.1.创建 Histogram-Based Gradient Boosting Classifier 模型的代码

2.24.2.用于处理 Histogram-Based Gradient Boosting Classifier 模型的 MQL5 代码

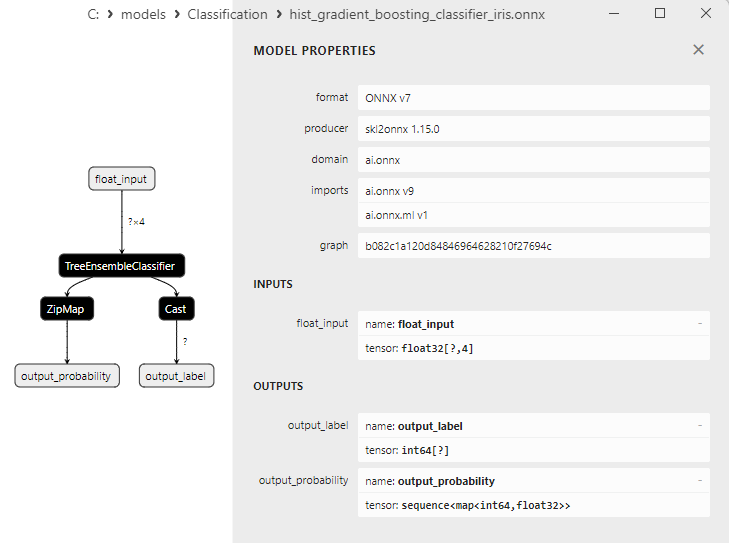

2.24.3.Histogram-Based Gradient Boosting Classifier 模型的 ONNX 表示 - 2.25。CategoricalNB Classifier

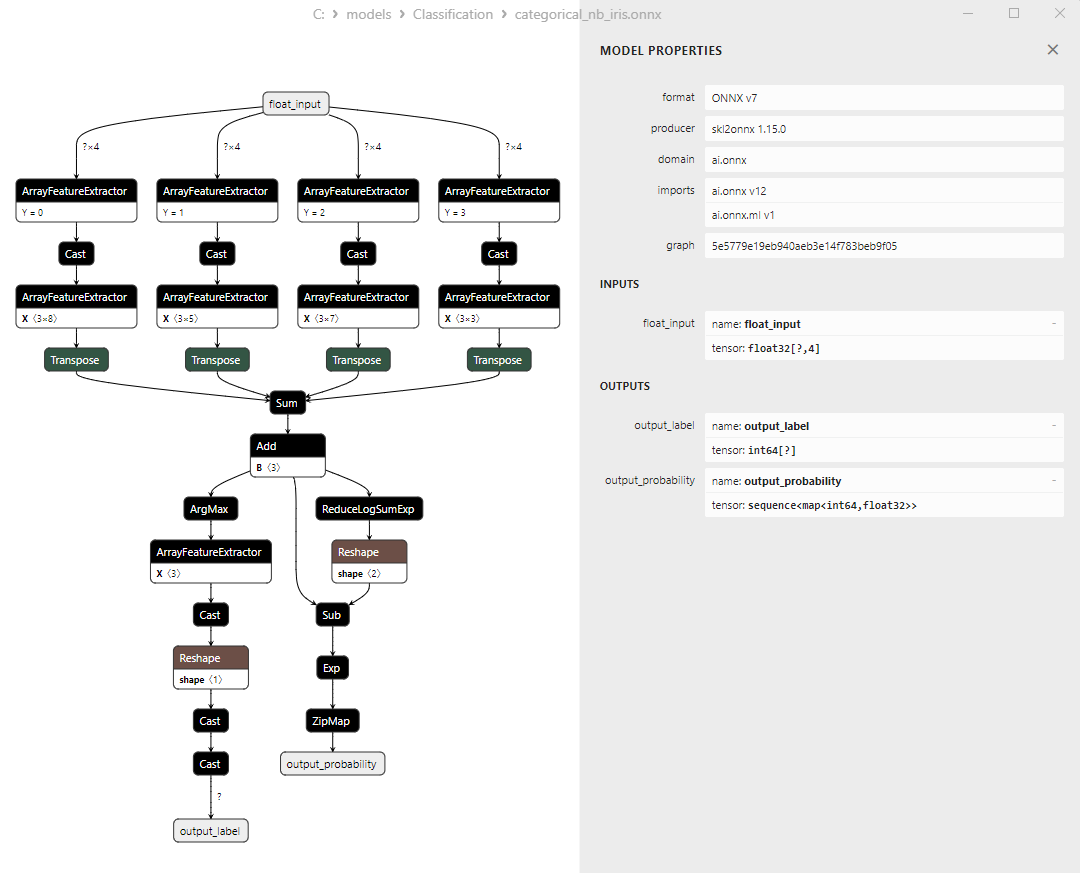

2.25.1.创建 CategoricalNB Classifier 模型的代码

2.25.2.用于处理 CategoricalNB Classifier 模型的 MQL5 代码

2.25.3.CategoricalNB Classifier 模型的 ONNX 表示 - 2.26.ExtraTreeClassifier

2.26.1.创建 ExtraTreeClassifier 模型的代码

2.26.2.用于处理 ExtraTreeClassifier 模型的 MQL5 代码

2.26.3.ExtraTreeClassifier 模型的 ONNX 表示 - 2.27.ExtraTreesClassifier

2.27.1.创建 ExtraTreesClassifier 模型的代码

2.27.2.用于处理 ExtraTreesClassifier 模型的 MQL5 代码

2.27.3.ExtraTreesClassifier 模型的 ONNX 表示 - 2.28.比较所有模型的准确率

2.28.1.计算所有模型并建立准确率比较图表的代码

2.28.2.用于执行所有 ONNX 模型的 MQL5 代码 - 2.29.无法转换为 ONNX 的 Scikit-Learn 分类模型

- 2.29.1.DummyClassifier

2.29.1.1.创建 DummyClassifier 模型的代码 - 2.29.2.GaussianProcessClassifier

2.29.2.1.创建 GaussianProcessClassifier 模型的代码 - 2.29.3.LabelPropagation Classifier

2.29.3.1.创建 LabelPropagationClassifier 模型的代码 - 2.29.4.LabelSpreading Classifier

2.29.4.1.创建 LabelSpreadingClassifier 模型的代码 - 2.29.5.NearestCentroid Classifier

2.29.5.1.创建 NearestCentroid 模型的代码 - 2.29.6.Quadratic Discriminant Analysis Classifier

2.29.6.1.创建 Quadratic Discriminant Analysis 模型的代码 - 结论

1.费舍尔的(Fisher's)鸢尾花

鸢尾花数据集是机器学习领域最著名和应用最广泛的数据集之一。它于 1936 年由统计学家和生物学家 R.A.Fisher 首次提出,从此成为分类任务的经典数据集。

鸢尾花数据集包括三种鸢尾花(山鸢尾、维吉尼亚鸢尾和杂色鸢尾)的萼片和花瓣的测量数据。

图1.山鸢尾

图 2.维吉尼亚鸢尾

图 3.杂色鸢尾

鸢尾花数据集包含 150 个鸢尾花实例,三个品种各有 50 个实例。每个实例有四个数值特征(以厘米为单位):

- 花萼长度

- 花萼宽度

- 花瓣长度

- 花瓣宽度

每个实例还具有相应的类,表示鸢尾花种类(山鸢尾、维吉尼亚鸢尾或杂色鸢尾)。这种分类属性使鸢尾花数据集成为分类和聚类等机器学习任务的理想数据集。

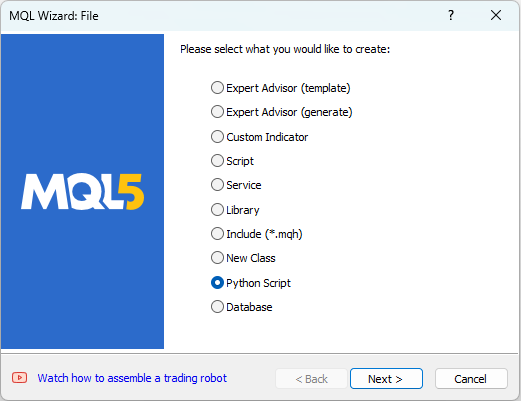

MetaEditor 允许使用 Python 脚本。要创建 Python 脚本,请从 MetaEditor 中的“文件”菜单中选择“新建”,然后会出现一个用于选择要创建对象的对话框(见图 4)。

图 4.在 MQL5 向导中创建 Python 脚本 - 步骤 1

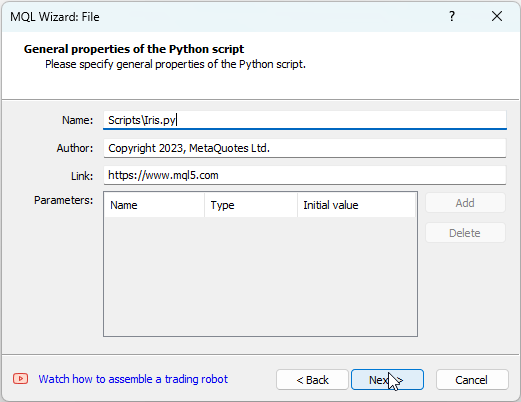

接下来,为脚本提供一个名称,例如“IRIS.py”(见图 5)。

图 5.在 MQL5 向导中创建 Python 脚本 - 步骤 2 - 脚本名称

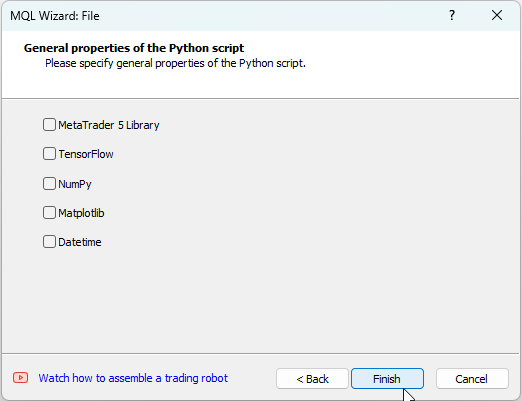

之后,您可以指定将使用哪些库。在我们的例子中,我们将这些字段留空(见图 6)。

图6:在 MQL5 向导中创建 Python 脚本 - 步骤 3

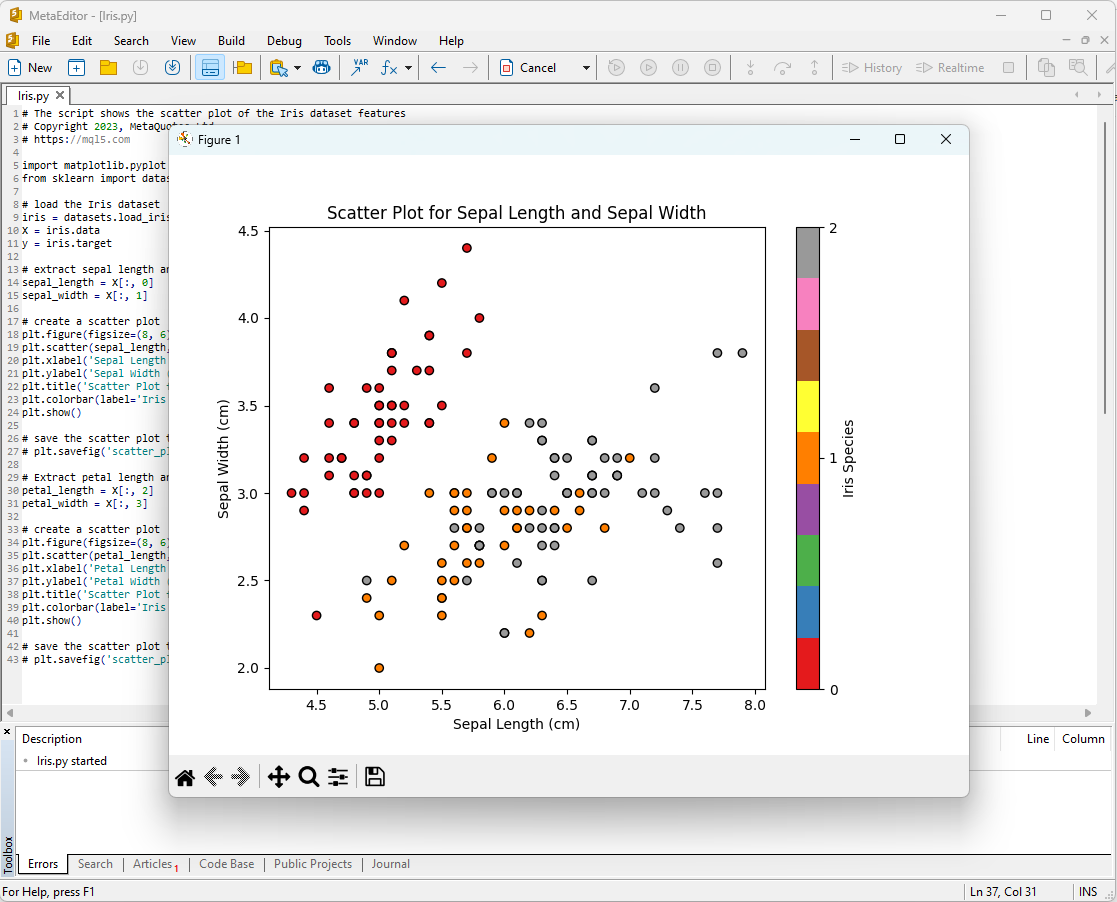

开始分析鸢尾花数据集的一种方法是将数据可视化,图形表示可以让我们更好地理解数据的结构和特征之间的关系。

例如,您可以创建散点图来查看不同种类的鸢尾花在特征空间中的分布情况。

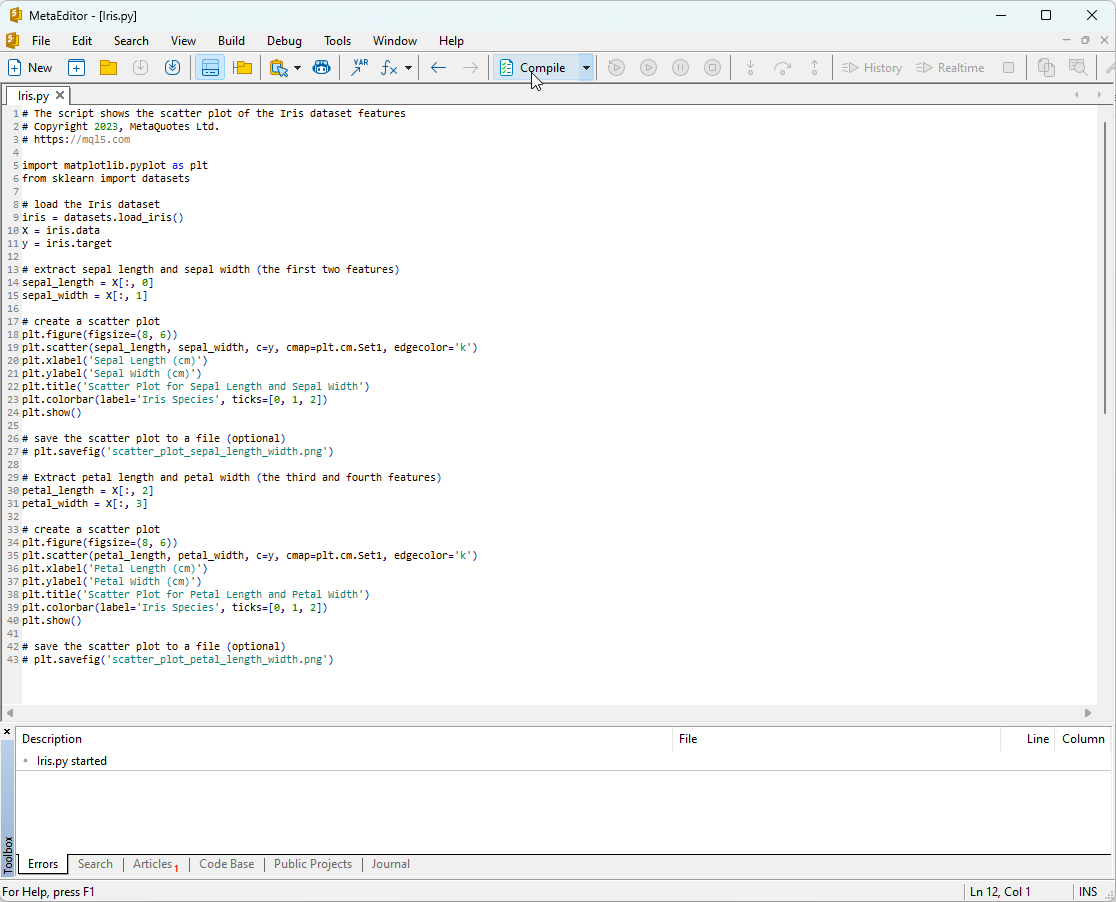

Python script code:

```python
# The script shows the scatter plot of the Iris dataset features
# Copyright 2023, MetaQuotes Ltd.
# https://mql5.com

import matplotlib.pyplot as plt
from sklearn import datasets

# load the Iris dataset
iris = datasets.load_iris()
X = iris.data
y = iris.target

# extract sepal length and sepal width (the first two features)
sepal_length = X[:, 0]
sepal_width = X[:, 1]

# create a scatter plot
plt.figure(figsize=(8, 6))
plt.scatter(sepal_length, sepal_width, c=y, cmap=plt.cm.Set1, edgecolor='k')
plt.xlabel('Sepal Length (cm)')
plt.ylabel('Sepal Width (cm)')
plt.title('Scatter Plot for Sepal Length and Sepal Width')
plt.colorbar(label='Iris Species', ticks=[0, 1, 2])
plt.show()

# save the scatter plot to a file (optional; call it before plt.show())
# plt.savefig('scatter_plot_sepal_length_width.png')

# extract petal length and petal width (the third and fourth features)
petal_length = X[:, 2]
petal_width = X[:, 3]

# create a scatter plot
plt.figure(figsize=(8, 6))
plt.scatter(petal_length, petal_width, c=y, cmap=plt.cm.Set1, edgecolor='k')
plt.xlabel('Petal Length (cm)')
plt.ylabel('Petal Width (cm)')
plt.title('Scatter Plot for Petal Length and Petal Width')
plt.colorbar(label='Iris Species', ticks=[0, 1, 2])
plt.show()

# save the scatter plot to a file (optional; call it before plt.show())
# plt.savefig('scatter_plot_petal_length_width.png')
```

要运行此脚本,您需要将其复制到 MetaEditor 中(见图 7)并单击“编译”。

图 7:MetaEditor 中的 IRIS.py 脚本

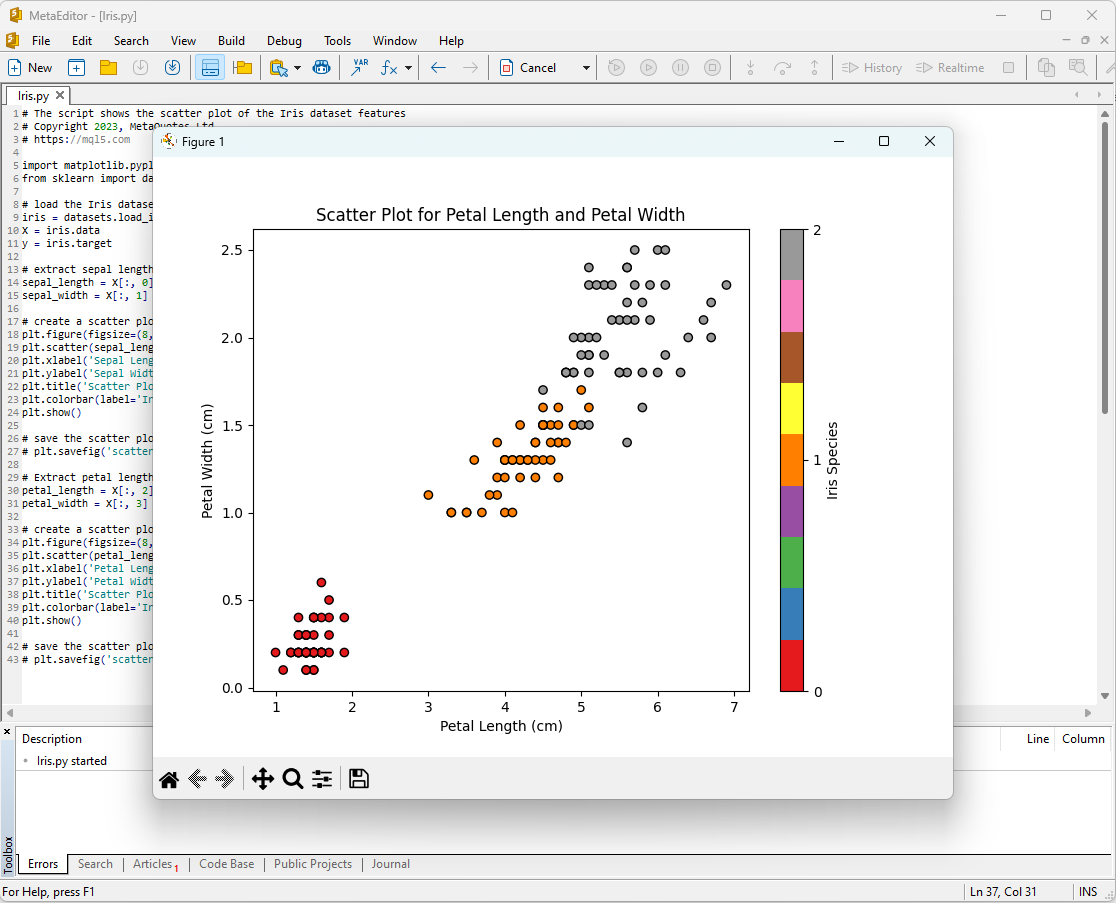

此后,图表将出现在屏幕上:

图 8:MetaEditor 中的 IRIS.py 脚本,带有花萼长度/花萼宽度图

图 9:MetaEditor 中的 IRIS.py 脚本,带有花瓣长度/花瓣宽度图

让我们仔细看看它们。

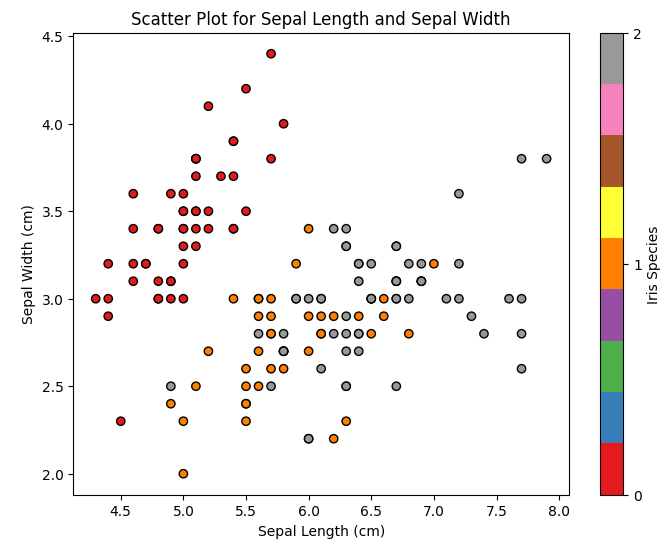

图 10:花萼长度与花萼宽度的散点图

在该图中,我们可以看到不同鸢尾花品种根据花萼长度和花萼宽度是如何分布的。我们可以观察到,与其他两个物种相比,山鸢尾的花萼通常更短更宽。

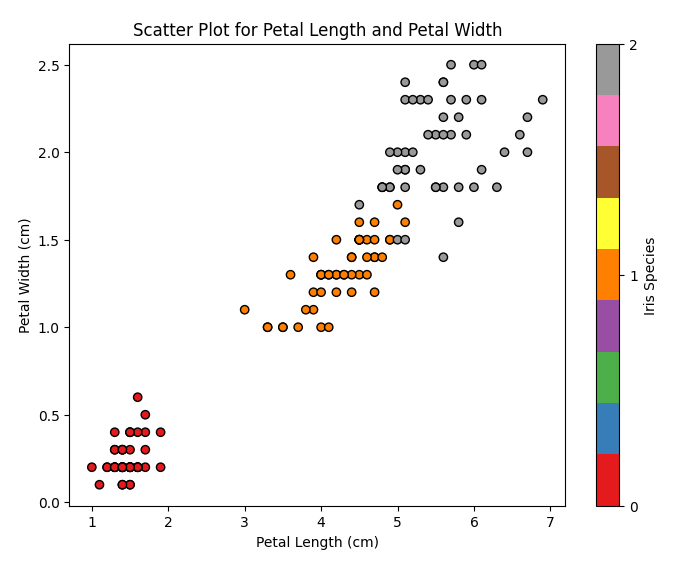

图 11:花瓣长度与花瓣宽度的散点图

在该图中,我们可以看到不同种类的鸢尾花是如何根据花瓣长度和花瓣宽度进行分布的。我们可以注意到,山鸢尾的花瓣最短且最窄,维吉尼亚鸢尾的花瓣最长且最宽,而杂色鸢尾则介于两者之间。

鸢尾花数据集是训练和测试机器学习模型的理想数据集,我们将用它来分析机器学习模型对于分类任务的有效性。

2. Classification Models

Classification is one of the fundamental tasks of machine learning. Its goal is to assign data to classes or categories based on certain features.

Let's explore the main machine learning models in the scikit-learn package.

Scikit-learn classifier list

The following script displays the list of classifiers available in scikit-learn:

```python
# ScikitLearnClassifiers.py
# The script lists all the classification algorithms available in scikit-learn
# Copyright 2023, MetaQuotes Ltd.
# https://mql5.com

# print Python version
from platform import python_version
print("The Python version is ", python_version())

# print scikit-learn version
import sklearn
print('The scikit-learn version is {}.'.format(sklearn.__version__))

# print scikit-learn classifiers
from sklearn.utils import all_estimators
classifiers = all_estimators(type_filter='classifier')
for index, (name, ClassifierClass) in enumerate(classifiers, start=1):
    print(f"Classifier {index}: {name}")
```

Output:

```
Python  The scikit-learn version is 1.2.2.
Python  Classifier 1: AdaBoostClassifier
Python  Classifier 2: BaggingClassifier
Python  Classifier 3: BernoulliNB
Python  Classifier 4: CalibratedClassifierCV
Python  Classifier 5: CategoricalNB
Python  Classifier 6: ClassifierChain
Python  Classifier 7: ComplementNB
Python  Classifier 8: DecisionTreeClassifier
Python  Classifier 9: DummyClassifier
Python  Classifier 10: ExtraTreeClassifier
Python  Classifier 11: ExtraTreesClassifier
Python  Classifier 12: GaussianNB
Python  Classifier 13: GaussianProcessClassifier
Python  Classifier 14: GradientBoostingClassifier
Python  Classifier 15: HistGradientBoostingClassifier
Python  Classifier 16: KNeighborsClassifier
Python  Classifier 17: LabelPropagation
Python  Classifier 18: LabelSpreading
Python  Classifier 19: LinearDiscriminantAnalysis
Python  Classifier 20: LinearSVC
Python  Classifier 21: LogisticRegression
Python  Classifier 22: LogisticRegressionCV
Python  Classifier 23: MLPClassifier
Python  Classifier 24: MultiOutputClassifier
Python  Classifier 25: MultinomialNB
Python  Classifier 26: NearestCentroid
Python  Classifier 27: NuSVC
Python  Classifier 28: OneVsOneClassifier
Python  Classifier 29: OneVsRestClassifier
Python  Classifier 30: OutputCodeClassifier
Python  Classifier 31: PassiveAggressiveClassifier
Python  Classifier 32: Perceptron
Python  Classifier 33: QuadraticDiscriminantAnalysis
Python  Classifier 34: RadiusNeighborsClassifier
Python  Classifier 35: RandomForestClassifier
Python  Classifier 36: RidgeClassifier
Python  Classifier 37: RidgeClassifierCV
Python  Classifier 38: SGDClassifier
Python  Classifier 39: SVC
Python  Classifier 40: StackingClassifier
Python  Classifier 41: VotingClassifier
```

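In the original article, this list of classifiers is color-coded for convenience. Models that require a base classifier (meta-estimators such as OneVsRestClassifier, StackingClassifier, and VotingClassifier) are highlighted in yellow, while the other models can be used on their own.

Looking ahead, the models highlighted in green were successfully exported to ONNX format, while the models highlighted in red raised errors during conversion with the current scikit-learn version, 1.2.2. The models that could not be converted are the ones listed under section 2.29 in the contents: DummyClassifier, GaussianProcessClassifier, LabelPropagation, LabelSpreading, NearestCentroid, and QuadraticDiscriminantAnalysis.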

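Different output representations of the models

Different models represent their output data differently, so care is needed when working with models converted to ONNX.

For Fisher's Iris classification task, the input tensor has the same format for all of these models: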

1.Name: float_input, Data Type: tensor(float), Shape: [None, 4]
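The input tensor holds 32-bit floats, whereas Python floats and MQL5 `double` values are 64-bit, so the features have to be narrowed before they are passed to the model. The sketch below illustrates what that narrowing does using only the standard library; the helper name `to_float32_batch` is hypothetical and is not part of the article's scripts, which use `X.astype(np.float32)` for the same purpose.

```python
from array import array

def to_float32_batch(samples):
    """Convert rows of Python floats (64-bit) into rows of 32-bit floats."""
    return [array("f", row) for row in samples]

samples = [
    [5.1, 3.5, 1.4, 0.2],  # sample 1 of the Iris dataset
    [7.0, 3.2, 4.7, 1.4],  # sample 51 of the Iris dataset
]
batch = to_float32_batch(samples)

# The batch has shape [2, 4]: two samples with four features each.
print(len(batch), len(batch[0]))

# 5.1 has no exact float32 representation, so the stored value differs
# slightly from the 64-bit original, but only by a very small amount.
print(batch[0][0] == 5.1)
print(abs(batch[0][0] - 5.1) < 1e-6)
```

The rounding is far smaller than the measurement precision of the dataset, but it is one reason why an ONNX model and the original model can, in principle, disagree on samples that lie very close to a decision boundary.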

The output tensors of the ONNX models differ.

1. Models that do not require post-processing:

- SVC Classifier;

- LinearSVC Classifier;

- NuSVC Classifier;

- Radius Neighbors Classifier;

- Ridge Classifier;

- Ridge Classifier CV.

1.Name: label, Data Type: tensor(int64), Shape: [None]

2.Name: probabilities, Data Type: tensor(float), Shape: [None, 3]

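These models return the result (the class number) explicitly in the first output, the integer tensor "label", with no post-processing required.

2. Models whose results require post-processing: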

- Random Forest Classifier;

- Gradient Boosting Classifier;

- AdaBoost Classifier;

- Bagging Classifier;

- K-NN_Classifier;

- Decision Tree Classifier;

- Logistic Regression Classifier;

- Logistic Regression CV Classifier;

- Passive-Aggressive Classifier;

- Perceptron Classifier;

- SGD Classifier;

- Gaussian Naive Bayes Classifier;

- Multinomial Naive Bayes Classifier;

- Complement Naive Bayes Classifier;

- Bernoulli Naive Bayes Classifier;

- Multilayer Perceptron Classifier;

- Linear Discriminant Analysis Classifier;

- Hist Gradient Boosting Classifier;

- Categorical Naive Bayes Classifier;

- ExtraTree Classifier;

- ExtraTrees Classifier.

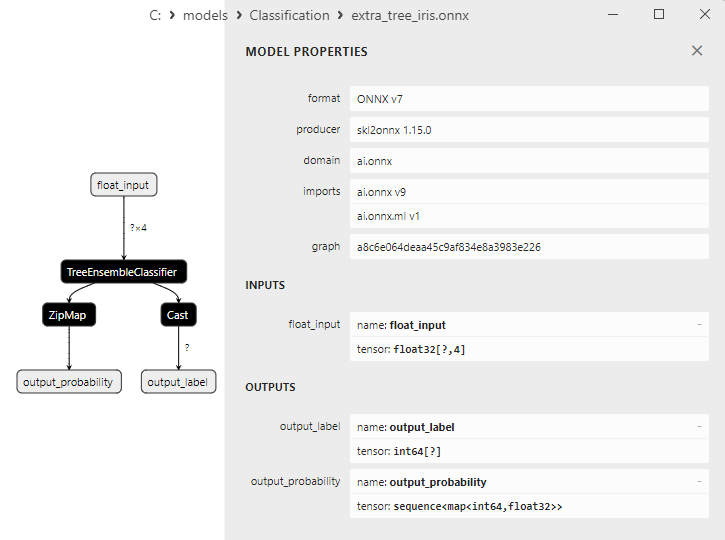

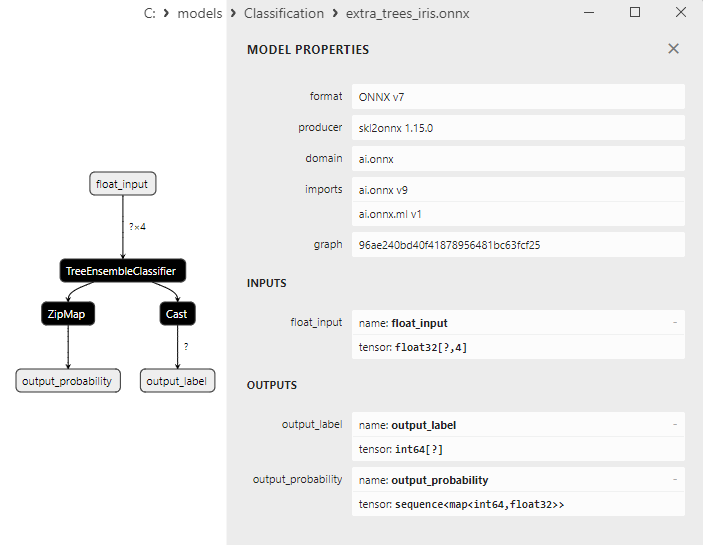

1.Name: output_label, Data Type: tensor(int64), Shape: [None]

2.Name: output_probability, Data Type: seq(map(int64,tensor(float))), Shape: []

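These models return a list of classes together with the probability of belonging to each class.

In these cases, obtaining the result requires post-processing of the seq(map(int64, tensor(float))) output, for example finding the element with the highest probability.

These differences must therefore be taken into account when working with ONNX models. The script in section 2.28.2 gives an example of handling the different kinds of results.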
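The post-processing step can be sketched in plain Python. A `seq(map(int64, tensor(float)))` output corresponds to a sequence with one map per sample, where each map goes from class id to probability. The probability values below are made up for illustration, and `predicted_classes` is a hypothetical helper, not a function from the article.

```python
# Hypothetical "output_probability" for a batch of two samples:
# one {class_id: probability} map per sample (values are illustrative).
output_probability = [
    {0: 0.02, 1: 0.90, 2: 0.08},
    {0: 0.01, 1: 0.24, 2: 0.75},
]

def predicted_classes(prob_maps):
    """Return, for each sample, the class id with the highest probability."""
    return [max(probs, key=probs.get) for probs in prob_maps]

labels = predicted_classes(output_probability)
print(labels)  # [1, 2]
```

The same idea applies in MQL5: iterate over the per-class probabilities of each sample and keep the index of the largest one.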

iris.mqh

To test the models on the full Iris dataset in MQL5, the data has to be prepared first. The PrepareIrisDataset() function is used for this.

It is convenient to move these functions into the iris.mqh file.

```mql5
//+------------------------------------------------------------------+
//|                                                         Iris.mqh |
//|                                  Copyright 2023, MetaQuotes Ltd. |
//|                                             https://www.mql5.com |
//+------------------------------------------------------------------+
#property copyright "Copyright 2023, MetaQuotes Ltd."
#property link      "https://www.mql5.com"

//+------------------------------------------------------------------+
//| Structure for the IRIS Dataset sample                            |
//+------------------------------------------------------------------+
struct sIRISsample
  {
   int               sample_id;   // sample id (1-150)
   double            features[4]; // SepalLengthCm,SepalWidthCm,PetalLengthCm,PetalWidthCm
   string            class_name;  // class ("Iris-setosa","Iris-versicolor","Iris-virginica")
   int               class_id;    // class id (0,1,2), calculated by function IRISClassID
  };

//--- Iris dataset
sIRISsample ExtIRISDataset[];
int Exttotal=0;

//+------------------------------------------------------------------+
//| Returns class id by class name                                   |
//+------------------------------------------------------------------+
int IRISClassID(string class_name)
  {
//---
   if(class_name=="Iris-setosa")
      return(0);
   else
      if(class_name=="Iris-versicolor")
         return(1);
      else
         if(class_name=="Iris-virginica")
            return(2);
//---
   return(-1);
  }

//+------------------------------------------------------------------+
//| AddSample                                                        |
//+------------------------------------------------------------------+
bool AddSample(const int Id,const double SepalLengthCm,const double SepalWidthCm,const double PetalLengthCm,const double PetalWidthCm, const string Species)
  {
//---
   ExtIRISDataset[Exttotal].sample_id=Id;
//---
   ExtIRISDataset[Exttotal].features[0]=SepalLengthCm;
   ExtIRISDataset[Exttotal].features[1]=SepalWidthCm;
   ExtIRISDataset[Exttotal].features[2]=PetalLengthCm;
   ExtIRISDataset[Exttotal].features[3]=PetalWidthCm;
//---
   ExtIRISDataset[Exttotal].class_name=Species;
   ExtIRISDataset[Exttotal].class_id=IRISClassID(Species);
//---
   Exttotal++;
//---
   return(true);
  }

//+------------------------------------------------------------------+
//| Prepare Iris Dataset                                             |
//+------------------------------------------------------------------+
bool PrepareIrisDataset(sIRISsample &iris_samples[])
  {
   ArrayResize(ExtIRISDataset,150);
   Exttotal=0;
//---
   AddSample(1,5.1,3.5,1.4,0.2,"Iris-setosa");
   AddSample(2,4.9,3.0,1.4,0.2,"Iris-setosa");
   AddSample(3,4.7,3.2,1.3,0.2,"Iris-setosa");
   AddSample(4,4.6,3.1,1.5,0.2,"Iris-setosa");
   AddSample(5,5.0,3.6,1.4,0.2,"Iris-setosa");
   AddSample(6,5.4,3.9,1.7,0.4,"Iris-setosa");
   AddSample(7,4.6,3.4,1.4,0.3,"Iris-setosa");
   AddSample(8,5.0,3.4,1.5,0.2,"Iris-setosa");
   AddSample(9,4.4,2.9,1.4,0.2,"Iris-setosa");
   AddSample(10,4.9,3.1,1.5,0.1,"Iris-setosa");
   AddSample(11,5.4,3.7,1.5,0.2,"Iris-setosa");
   AddSample(12,4.8,3.4,1.6,0.2,"Iris-setosa");
   AddSample(13,4.8,3.0,1.4,0.1,"Iris-setosa");
   AddSample(14,4.3,3.0,1.1,0.1,"Iris-setosa");
   AddSample(15,5.8,4.0,1.2,0.2,"Iris-setosa");
   AddSample(16,5.7,4.4,1.5,0.4,"Iris-setosa");
   AddSample(17,5.4,3.9,1.3,0.4,"Iris-setosa");
   AddSample(18,5.1,3.5,1.4,0.3,"Iris-setosa");
   AddSample(19,5.7,3.8,1.7,0.3,"Iris-setosa");
   AddSample(20,5.1,3.8,1.5,0.3,"Iris-setosa");
   AddSample(21,5.4,3.4,1.7,0.2,"Iris-setosa");
   AddSample(22,5.1,3.7,1.5,0.4,"Iris-setosa");
   AddSample(23,4.6,3.6,1.0,0.2,"Iris-setosa");
   AddSample(24,5.1,3.3,1.7,0.5,"Iris-setosa");
   AddSample(25,4.8,3.4,1.9,0.2,"Iris-setosa");
   AddSample(26,5.0,3.0,1.6,0.2,"Iris-setosa");
   AddSample(27,5.0,3.4,1.6,0.4,"Iris-setosa");
   AddSample(28,5.2,3.5,1.5,0.2,"Iris-setosa");
   AddSample(29,5.2,3.4,1.4,0.2,"Iris-setosa");
   AddSample(30,4.7,3.2,1.6,0.2,"Iris-setosa");
   AddSample(31,4.8,3.1,1.6,0.2,"Iris-setosa");
   AddSample(32,5.4,3.4,1.5,0.4,"Iris-setosa");
   AddSample(33,5.2,4.1,1.5,0.1,"Iris-setosa");
   AddSample(34,5.5,4.2,1.4,0.2,"Iris-setosa");
   AddSample(35,4.9,3.1,1.5,0.2,"Iris-setosa");
   AddSample(36,5.0,3.2,1.2,0.2,"Iris-setosa");
   AddSample(37,5.5,3.5,1.3,0.2,"Iris-setosa");
   AddSample(38,4.9,3.6,1.4,0.1,"Iris-setosa");
   AddSample(39,4.4,3.0,1.3,0.2,"Iris-setosa");
   AddSample(40,5.1,3.4,1.5,0.2,"Iris-setosa");
   AddSample(41,5.0,3.5,1.3,0.3,"Iris-setosa");
   AddSample(42,4.5,2.3,1.3,0.3,"Iris-setosa");
   AddSample(43,4.4,3.2,1.3,0.2,"Iris-setosa");
   AddSample(44,5.0,3.5,1.6,0.6,"Iris-setosa");
   AddSample(45,5.1,3.8,1.9,0.4,"Iris-setosa");
   AddSample(46,4.8,3.0,1.4,0.3,"Iris-setosa");
   AddSample(47,5.1,3.8,1.6,0.2,"Iris-setosa");
   AddSample(48,4.6,3.2,1.4,0.2,"Iris-setosa");
   AddSample(49,5.3,3.7,1.5,0.2,"Iris-setosa");
   AddSample(50,5.0,3.3,1.4,0.2,"Iris-setosa");
   AddSample(51,7.0,3.2,4.7,1.4,"Iris-versicolor");
   AddSample(52,6.4,3.2,4.5,1.5,"Iris-versicolor");
   AddSample(53,6.9,3.1,4.9,1.5,"Iris-versicolor");
   AddSample(54,5.5,2.3,4.0,1.3,"Iris-versicolor");
   AddSample(55,6.5,2.8,4.6,1.5,"Iris-versicolor");
   AddSample(56,5.7,2.8,4.5,1.3,"Iris-versicolor");
   AddSample(57,6.3,3.3,4.7,1.6,"Iris-versicolor");
   AddSample(58,4.9,2.4,3.3,1.0,"Iris-versicolor");
   AddSample(59,6.6,2.9,4.6,1.3,"Iris-versicolor");
   AddSample(60,5.2,2.7,3.9,1.4,"Iris-versicolor");
   AddSample(61,5.0,2.0,3.5,1.0,"Iris-versicolor");
   AddSample(62,5.9,3.0,4.2,1.5,"Iris-versicolor");
   AddSample(63,6.0,2.2,4.0,1.0,"Iris-versicolor");
   AddSample(64,6.1,2.9,4.7,1.4,"Iris-versicolor");
   AddSample(65,5.6,2.9,3.6,1.3,"Iris-versicolor");
   AddSample(66,6.7,3.1,4.4,1.4,"Iris-versicolor");
   AddSample(67,5.6,3.0,4.5,1.5,"Iris-versicolor");
   AddSample(68,5.8,2.7,4.1,1.0,"Iris-versicolor");
   AddSample(69,6.2,2.2,4.5,1.5,"Iris-versicolor");
   AddSample(70,5.6,2.5,3.9,1.1,"Iris-versicolor");
   AddSample(71,5.9,3.2,4.8,1.8,"Iris-versicolor");
   AddSample(72,6.1,2.8,4.0,1.3,"Iris-versicolor");
   AddSample(73,6.3,2.5,4.9,1.5,"Iris-versicolor");
   AddSample(74,6.1,2.8,4.7,1.2,"Iris-versicolor");
   AddSample(75,6.4,2.9,4.3,1.3,"Iris-versicolor");
   AddSample(76,6.6,3.0,4.4,1.4,"Iris-versicolor");
   AddSample(77,6.8,2.8,4.8,1.4,"Iris-versicolor");
   AddSample(78,6.7,3.0,5.0,1.7,"Iris-versicolor");
   AddSample(79,6.0,2.9,4.5,1.5,"Iris-versicolor");
   AddSample(80,5.7,2.6,3.5,1.0,"Iris-versicolor");
   AddSample(81,5.5,2.4,3.8,1.1,"Iris-versicolor");
   AddSample(82,5.5,2.4,3.7,1.0,"Iris-versicolor");
   AddSample(83,5.8,2.7,3.9,1.2,"Iris-versicolor");
   AddSample(84,6.0,2.7,5.1,1.6,"Iris-versicolor");
   AddSample(85,5.4,3.0,4.5,1.5,"Iris-versicolor");
   AddSample(86,6.0,3.4,4.5,1.6,"Iris-versicolor");
   AddSample(87,6.7,3.1,4.7,1.5,"Iris-versicolor");
   AddSample(88,6.3,2.3,4.4,1.3,"Iris-versicolor");
   AddSample(89,5.6,3.0,4.1,1.3,"Iris-versicolor");
   AddSample(90,5.5,2.5,4.0,1.3,"Iris-versicolor");
   AddSample(91,5.5,2.6,4.4,1.2,"Iris-versicolor");
   AddSample(92,6.1,3.0,4.6,1.4,"Iris-versicolor");
   AddSample(93,5.8,2.6,4.0,1.2,"Iris-versicolor");
   AddSample(94,5.0,2.3,3.3,1.0,"Iris-versicolor");
   AddSample(95,5.6,2.7,4.2,1.3,"Iris-versicolor");
   AddSample(96,5.7,3.0,4.2,1.2,"Iris-versicolor");
   AddSample(97,5.7,2.9,4.2,1.3,"Iris-versicolor");
   AddSample(98,6.2,2.9,4.3,1.3,"Iris-versicolor");
   AddSample(99,5.1,2.5,3.0,1.1,"Iris-versicolor");
   AddSample(100,5.7,2.8,4.1,1.3,"Iris-versicolor");
   AddSample(101,6.3,3.3,6.0,2.5,"Iris-virginica");
   AddSample(102,5.8,2.7,5.1,1.9,"Iris-virginica");
   AddSample(103,7.1,3.0,5.9,2.1,"Iris-virginica");
   AddSample(104,6.3,2.9,5.6,1.8,"Iris-virginica");
   AddSample(105,6.5,3.0,5.8,2.2,"Iris-virginica");
   AddSample(106,7.6,3.0,6.6,2.1,"Iris-virginica");
   AddSample(107,4.9,2.5,4.5,1.7,"Iris-virginica");
   AddSample(108,7.3,2.9,6.3,1.8,"Iris-virginica");
   AddSample(109,6.7,2.5,5.8,1.8,"Iris-virginica");
   AddSample(110,7.2,3.6,6.1,2.5,"Iris-virginica");
   AddSample(111,6.5,3.2,5.1,2.0,"Iris-virginica");
   AddSample(112,6.4,2.7,5.3,1.9,"Iris-virginica");
   AddSample(113,6.8,3.0,5.5,2.1,"Iris-virginica");
   AddSample(114,5.7,2.5,5.0,2.0,"Iris-virginica");
   AddSample(115,5.8,2.8,5.1,2.4,"Iris-virginica");
   AddSample(116,6.4,3.2,5.3,2.3,"Iris-virginica");
   AddSample(117,6.5,3.0,5.5,1.8,"Iris-virginica");
   AddSample(118,7.7,3.8,6.7,2.2,"Iris-virginica");
   AddSample(119,7.7,2.6,6.9,2.3,"Iris-virginica");
   AddSample(120,6.0,2.2,5.0,1.5,"Iris-virginica");
   AddSample(121,6.9,3.2,5.7,2.3,"Iris-virginica");
   AddSample(122,5.6,2.8,4.9,2.0,"Iris-virginica");
   AddSample(123,7.7,2.8,6.7,2.0,"Iris-virginica");
   AddSample(124,6.3,2.7,4.9,1.8,"Iris-virginica");
   AddSample(125,6.7,3.3,5.7,2.1,"Iris-virginica");
   AddSample(126,7.2,3.2,6.0,1.8,"Iris-virginica");
   AddSample(127,6.2,2.8,4.8,1.8,"Iris-virginica");
   AddSample(128,6.1,3.0,4.9,1.8,"Iris-virginica");
   AddSample(129,6.4,2.8,5.6,2.1,"Iris-virginica");
   AddSample(130,7.2,3.0,5.8,1.6,"Iris-virginica");
   AddSample(131,7.4,2.8,6.1,1.9,"Iris-virginica");
   AddSample(132,7.9,3.8,6.4,2.0,"Iris-virginica");
   AddSample(133,6.4,2.8,5.6,2.2,"Iris-virginica");
   AddSample(134,6.3,2.8,5.1,1.5,"Iris-virginica");
   AddSample(135,6.1,2.6,5.6,1.4,"Iris-virginica");
   AddSample(136,7.7,3.0,6.1,2.3,"Iris-virginica");
   AddSample(137,6.3,3.4,5.6,2.4,"Iris-virginica");
   AddSample(138,6.4,3.1,5.5,1.8,"Iris-virginica");
   AddSample(139,6.0,3.0,4.8,1.8,"Iris-virginica");
   AddSample(140,6.9,3.1,5.4,2.1,"Iris-virginica");
   AddSample(141,6.7,3.1,5.6,2.4,"Iris-virginica");
   AddSample(142,6.9,3.1,5.1,2.3,"Iris-virginica");
   AddSample(143,5.8,2.7,5.1,1.9,"Iris-virginica");
   AddSample(144,6.8,3.2,5.9,2.3,"Iris-virginica");
   AddSample(145,6.7,3.3,5.7,2.5,"Iris-virginica");
   AddSample(146,6.7,3.0,5.2,2.3,"Iris-virginica");
   AddSample(147,6.3,2.5,5.0,1.9,"Iris-virginica");
   AddSample(148,6.5,3.0,5.2,2.0,"Iris-virginica");
   AddSample(149,6.2,3.4,5.4,2.3,"Iris-virginica");
   AddSample(150,5.9,3.0,5.1,1.8,"Iris-virginica");
//--- copy the prepared samples to the output array
   ArrayResize(iris_samples,150);
   for(int i=0; i<Exttotal; i++)
     {
      iris_samples[i]=ExtIRISDataset[i];
     }
//---
   return(true);
  }
//+------------------------------------------------------------------+
```

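Let's compare three popular classification methods: SVC (Support Vector Classification), LinearSVC (Linear Support Vector Classification), and NuSVC (Nu Support Vector Classification).

Principles of operation:

SVC (Support Vector Classification)

Working principle: SVC is a classification method based on maximizing the margin between classes. It seeks the optimal separating hyperplane, the one that separates the classes with the widest margin and is defined by the support vectors (the points closest to the hyperplane).

Kernel functions: SVC can use various kernel functions, such as linear, radial basis function (RBF), and polynomial kernels. The kernel determines how the data is transformed in order to find the optimal hyperplane.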
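For reference, the margin-maximization idea is usually written as the following soft-margin optimization problem for two classes. This is the standard textbook formulation rather than something stated in the article; the symbols $w$, $b$, $\xi_i$, $\phi$, and $K$ are notation introduced here, while $C$ corresponds to the `C` parameter of `SVC` used in the article's code.

```latex
\min_{w,\,b,\,\xi}\ \frac{1}{2}\lVert w\rVert^{2} + C\sum_{i=1}^{n}\xi_i
\quad\text{subject to}\quad
y_i\bigl(w^{\top}\phi(x_i)+b\bigr)\ \ge\ 1-\xi_i,
\qquad \xi_i\ \ge\ 0,
\qquad y_i\in\{-1,+1\}

% The margin between the two classes has width 2 / \lVert w \rVert,
% so minimizing \lVert w \rVert^2 maximizes the margin.
% A kernel replaces the inner product in the transformed space:
K(x, x') = \phi(x)^{\top}\phi(x')
```

A larger $C$ penalizes margin violations more heavily; a smaller $C$ tolerates more of them in exchange for a wider margin. For more than two classes, scikit-learn's `SVC` combines several such binary classifiers in a one-vs-one scheme.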

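LinearSVC (Linear Support Vector Classification)

Working principle: LinearSVC is a variant of SVC intended specifically for linear classification. It seeks the optimal linear separating hyperplane without using kernel functions, which makes it faster and more efficient on large amounts of data.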
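A linear classifier of this kind makes its prediction by computing one score per class, `w·x + b`, and picking the class with the highest score (scikit-learn's `LinearSVC` uses a one-vs-rest scheme by default). The sketch below shows only this prediction step in plain Python; the weights and biases are invented for illustration and are not the coefficients of a trained model.

```python
def linear_scores(weights, biases, x):
    """Compute one linear score w.x + b per class."""
    return [
        sum(w_j * x_j for w_j, x_j in zip(w, x)) + b
        for w, b in zip(weights, biases)
    ]

def predict(weights, biases, x):
    """Return the index of the class with the highest linear score."""
    scores = linear_scores(weights, biases, x)
    return max(range(len(scores)), key=scores.__getitem__)

# Hypothetical coefficients: 3 classes x 4 features (NOT from a trained model).
weights = [
    [0.2, 0.5, -0.8, -0.4],
    [0.1, -0.3, 0.2, -0.3],
    [-0.3, -0.4, 0.6, 0.9],
]
biases = [0.3, 0.2, -1.0]

print(predict(weights, biases, [5.1, 3.5, 1.4, 0.2]))  # sample 1 (Iris-setosa): 0
print(predict(weights, biases, [6.3, 3.3, 6.0, 2.5]))  # sample 101 (Iris-virginica): 2
```

Because prediction is a handful of multiplications and additions per class, the cost does not grow with the number of training samples, which is part of why linear models scale well.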

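NuSVC (Nu Support Vector Classification)

Working principle: NuSVC is also based on the support vector method, but it introduces a parameter nu that controls the complexity of the model and the proportion of support vectors. The value of nu lies between 0 and 1: it is an upper bound on the fraction of margin errors and a lower bound on the fraction of support vectors.

Advantages:

SVC

Powerful algorithm: thanks to kernel functions, SVC can handle complex classification tasks and data that is not linearly separable.

Robustness to outliers: SVC is robust to outliers in the data because the separating hyperplane is built from the support vectors.

LinearSVC

High efficiency: LinearSVC is faster and more efficient on large datasets, especially when the amount of data is large and linear separation suits the task.

Linear classification: if the problem is linearly separable, LinearSVC can produce good results without complex kernel functions.

NuSVC

Control of model complexity: the nu parameter in NuSVC lets you control the complexity of the model and the trade-off between fitting the data and generalizing.

Robustness to outliers: like SVC, NuSVC is robust to outliers, which makes it useful for tasks with noisy data.

Limitations:

SVC

Computational complexity: SVC can be slow on large datasets and/or with complex kernel functions.

Kernel sensitivity: choosing the right kernel function can be challenging and can significantly affect model performance.

LinearSVC

Linearity constraint: LinearSVC is limited to linear separation of the data and performs poorly when the relationship between the features and the target variable is nonlinear.

NuSVC

Tuning the nu parameter: finding a good value of nu can take time and experimentation.

Depending on the characteristics of the task and the amount of data, any of these methods can be the best choice. It is important to experiment and select the method that best meets the requirements of the specific classification task.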

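2.1. SVC Classifier

Support Vector Classification (SVC) is a powerful machine learning algorithm that is widely used for classification tasks.

Principles of operation:

- Optimal separating hyperplane

Working principle: the main idea behind SVC is to find the optimal separating hyperplane in feature space. This hyperplane should maximize the separation between objects of different classes, and it is defined by the support vectors, the data points closest to the hyperplane.

Maximizing the margin: SVC aims to maximize the margin between classes, that is, the distance from the support vectors to the hyperplane. This makes the method robust to outliers and helps it generalize well to new data.

- Use of kernel functions

Kernel functions: SVC can use various kernel functions, such as linear, radial basis function (RBF), and polynomial kernels. A kernel implicitly projects the data into a higher-dimensional space in which the task can become linearly separable even if the data is not linearly separable in the original space.

Choice of kernel: choosing the right kernel function can significantly affect the performance of an SVC model. A linear hyperplane is not always the best solution.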
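The three kernels named above can be written down in a few lines. The sketch below uses the definitions given in the scikit-learn documentation, $\langle x, z\rangle$, $(\gamma\langle x, z\rangle + r)^d$, and $\exp(-\gamma\lVert x - z\rVert^2)$, implemented in plain Python for illustration; the function names and default parameter values are choices made here, not the library's defaults.

```python
import math

def linear_kernel(x, z):
    """Linear kernel: the ordinary dot product <x, z>."""
    return sum(a * b for a, b in zip(x, z))

def polynomial_kernel(x, z, degree=3, gamma=1.0, coef0=0.0):
    """Polynomial kernel: (gamma * <x, z> + coef0) ** degree."""
    return (gamma * linear_kernel(x, z) + coef0) ** degree

def rbf_kernel(x, z, gamma=1.0):
    """RBF kernel: exp(-gamma * ||x - z||^2)."""
    squared_distance = sum((a - b) ** 2 for a, b in zip(x, z))
    return math.exp(-gamma * squared_distance)

x, z = [1.0, 2.0], [3.0, 4.0]
print(linear_kernel(x, z))                                      # 11.0
print(polynomial_kernel(x, z, degree=2, gamma=1.0, coef0=1.0))  # 144.0
print(rbf_kernel(x, x))                                         # 1.0
```

The RBF kernel equals 1 for identical points and decays toward 0 as the points move apart, so it acts as a similarity measure; `gamma` controls how quickly that similarity falls off.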

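Advantages:

- Powerful algorithm: SVC can solve complex classification tasks, including tasks with nonlinear dependencies between the features and the target variable.

- Robustness to outliers: the method relies on the support vectors rather than on the entire dataset, which makes it robust to outliers in the data.

- Kernel flexibility: the ability to use different kernel functions lets SVC adapt to specific data and discover nonlinear relationships.

- Good generalization: SVC models can generalize well to new data, which makes them useful for prediction tasks.

Limitations:

- Computational complexity: SVC training can be slow, especially with large amounts of data or complex kernel functions.

- Kernel selection: choosing the right kernel function may require experimentation and depends on the characteristics of the data.

- Sensitivity to feature scaling: SVC is sensitive to the scale of the features, so it is recommended to normalize or standardize the data before training.

- Model interpretability: because of nonlinear kernels and large numbers of support vectors, SVC models can be difficult to interpret.

Depending on the specific task and the amount of data, SVC can be a powerful tool for classification. However, its limitations must be taken into account and its parameters tuned to obtain the best results.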
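The recommendation to standardize features can be illustrated with a small standard-library sketch: each column is shifted to zero mean and scaled to unit (population) standard deviation, which is the same transformation scikit-learn's `StandardScaler` applies. The helper `standardize_columns` is hypothetical. Note that the article's own scripts train on the raw features and do not apply this step.

```python
from statistics import mean, pstdev

def standardize_columns(rows):
    """Scale every column to zero mean and unit population standard deviation."""
    columns = list(zip(*rows))
    means = [mean(col) for col in columns]
    stds = [pstdev(col) for col in columns]
    return [
        [(v - m) / s if s > 0 else 0.0 for v, m, s in zip(row, means, stds)]
        for row in rows
    ]

# Three samples from the Iris dataset (ids 1, 51 and 101).
rows = [
    [5.1, 3.5, 1.4, 0.2],
    [7.0, 3.2, 4.7, 1.4],
    [6.3, 3.3, 6.0, 2.5],
]
scaled = standardize_columns(rows)

for row in scaled:
    print([round(v, 3) for v in row])
```

If a model is trained on standardized features, the same means and standard deviations must also be applied to the inputs at inference time, including when the ONNX model is called from MQL5.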

2.1.1. Code for creating the SVC Classifier model

This code demonstrates training an SVC Classifier model on the Iris dataset, exporting it to ONNX format, and performing classification with the ONNX model. It also evaluates the accuracy of both the original model and the ONNX model.

```python
# Iris_SVCClassifier.py
# The code demonstrates the process of training SVC model on the Iris dataset, exporting it to ONNX format, and making predictions using the ONNX model.
# It also evaluates the accuracy of both the original model and the ONNX model.
# Copyright 2023, MetaQuotes Ltd.
# https://www.mql5.com

# import necessary libraries
from sklearn import datasets
from sklearn.svm import SVC
from sklearn.metrics import accuracy_score, classification_report
from skl2onnx import convert_sklearn
from skl2onnx.common.data_types import FloatTensorType
import onnxruntime as ort
import numpy as np
from sys import argv

# define the path for saving the model (the folder of the running script)
data_path = argv[0]
last_index = data_path.rfind("\\") + 1
data_path = data_path[0:last_index]

# load the Iris dataset
iris = datasets.load_iris()
X = iris.data
y = iris.target

# create an SVC Classifier model with a linear kernel
svc_model = SVC(kernel='linear', C=1.0)

# train the model on the entire dataset
svc_model.fit(X, y)

# predict classes for the entire dataset
y_pred = svc_model.predict(X)

# evaluate the model's accuracy
accuracy = accuracy_score(y, y_pred)
print("Accuracy of SVC Classifier model:", accuracy)

# display the classification report
print("\nClassification Report:\n", classification_report(y, y_pred))

# define the input data type
initial_type = [('float_input', FloatTensorType([None, X.shape[1]]))]

# export the model to ONNX format with float data type
onnx_model = convert_sklearn(svc_model, initial_types=initial_type, target_opset=12)

# save the model to a file
onnx_filename = data_path + "svc_iris.onnx"
with open(onnx_filename, "wb") as f:
    f.write(onnx_model.SerializeToString())

# print model path
print(f"Model saved to {onnx_filename}")

# load the ONNX model and make predictions
onnx_session = ort.InferenceSession(onnx_filename)
input_name = onnx_session.get_inputs()[0].name
output_name = onnx_session.get_outputs()[0].name

# display information about input tensors in ONNX
print("\nInformation about input tensors in ONNX:")
for i, input_tensor in enumerate(onnx_session.get_inputs()):
    print(f"{i + 1}. Name: {input_tensor.name}, Data Type: {input_tensor.type}, Shape: {input_tensor.shape}")

# display information about output tensors in ONNX
print("\nInformation about output tensors in ONNX:")
for i, output_tensor in enumerate(onnx_session.get_outputs()):
    print(f"{i + 1}. Name: {output_tensor.name}, Data Type: {output_tensor.type}, Shape: {output_tensor.shape}")

# convert data to floating-point format (float32)
X_float32 = X.astype(np.float32)

# predict classes for the entire dataset using ONNX
y_pred_onnx = onnx_session.run([output_name], {input_name: X_float32})[0]

# evaluate the accuracy of the ONNX model
accuracy_onnx = accuracy_score(y, y_pred_onnx)
print("\nAccuracy of SVC Classifier model in ONNX format:", accuracy_onnx)
```

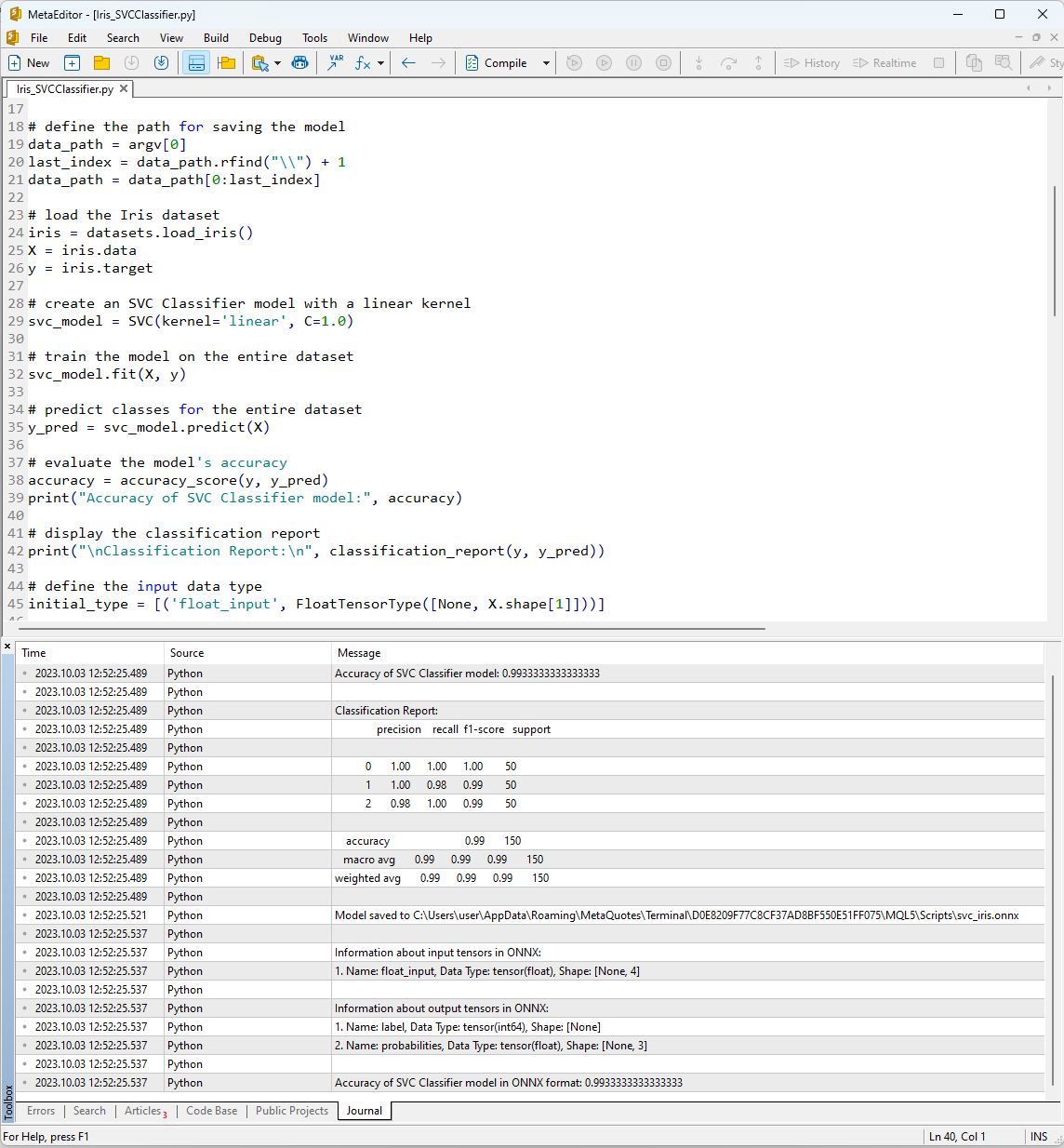

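After running the script in MetaEditor with the "Compile" button, you can view the results of its execution in the Journal tab.

Figure 12. Results of the Iris_SVCClassifier.py script in MetaEditor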

Output of the Iris_SVCClassifier.py script:

```
Python  Accuracy of SVC Classifier model: 0.9933333333333333
Python
Python  Classification Report:
Python                 precision    recall  f1-score   support
Python
Python             0       1.00      1.00      1.00        50
Python             1       1.00      0.98      0.99        50
Python             2       0.98      1.00      0.99        50
Python
Python      accuracy                           0.99       150
Python     macro avg       0.99      0.99      0.99       150
Python  weighted avg       0.99      0.99      0.99       150
Python
Python  Model saved to C:\Users\user\AppData\Roaming\MetaQuotes\Terminal\D0E8209F77C8CF37AD8BF550E51FF075\MQL5\Scripts\svc_iris.onnx
Python
Python  Information about input tensors in ONNX:
Python  1. Name: float_input, Data Type: tensor(float), Shape: [None, 4]
Python
Python  Information about output tensors in ONNX:
Python  1. Name: label, Data Type: tensor(int64), Shape: [None]
Python  2. Name: probabilities, Data Type: tensor(float), Shape: [None, 3]
Python
Python  Accuracy of SVC Classifier model in ONNX format: 0.9933333333333333
```

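The output shows the path where the ONNX model was saved, the types of the input and output parameters of the ONNX model, and the accuracy achieved on the Iris dataset.

The SVC classifier reaches an accuracy of about 99% (0.9933) on the dataset, and the model exported to ONNX format shows the same accuracy.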
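The figures in the classification report can be reproduced from a confusion matrix. The matrix below is inferred from the report, not printed by the script: a recall of 0.98 for class 1 together with a precision of 0.98 for class 2 is consistent with exactly one class-1 sample being predicted as class 2. The helper functions are a plain-Python sketch of the usual metric definitions.

```python
# Confusion matrix inferred from the report above (an assumption, not script output).
# Rows are true classes, columns are predicted classes.
confusion = [
    [50, 0, 0],
    [0, 49, 1],
    [0, 0, 50],
]

def accuracy(cm):
    """Share of all samples that lie on the diagonal (correct predictions)."""
    correct = sum(cm[i][i] for i in range(len(cm)))
    return correct / sum(sum(row) for row in cm)

def precision(cm, k):
    """Of the samples predicted as class k, the share that truly is class k."""
    return cm[k][k] / sum(row[k] for row in cm)

def recall(cm, k):
    """Of the samples that truly are class k, the share predicted as class k."""
    return cm[k][k] / sum(cm[k])

print(round(accuracy(confusion), 4))      # 0.9933, i.e. 149 of 150 correct
print(round(recall(confusion, 1), 2))     # 0.98
print(round(precision(confusion, 2), 2))  # 0.98
```

Seen this way, the reported accuracy of 0.9933 corresponds to a single misclassified sample out of 150.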

Now we verify these results in MQL5 by running the model on each of the 150 data samples. The script also includes an example of batch data processing.

2.1.2. MQL5 code for working with the SVC Classifier model

```mql5
//+------------------------------------------------------------------+
//|                                           Iris_SVCClassifier.mq5 |
//|                                  Copyright 2023, MetaQuotes Ltd. |
//|                                             https://www.mql5.com |
//+------------------------------------------------------------------+
#property copyright "Copyright 2023, MetaQuotes Ltd."
#property link      "https://www.mql5.com"
#property version   "1.00"

#include "iris.mqh"
#resource "svc_iris.onnx" as const uchar ExtModel[];

//+------------------------------------------------------------------+
//| Test IRIS dataset samples                                        |
//+------------------------------------------------------------------+
bool TestSamples(long model,float &input_data[][4], int &model_classes_id[])
  {
//--- check number of input samples
   ulong batch_size=input_data.Range(0);
   if(batch_size==0)
      return(false);
//--- prepare output array
   ArrayResize(model_classes_id,(int)batch_size);
//--- set the input shape: [batch_size, 4]
   ulong input_shape[]= { batch_size, input_data.Range(1)};
   OnnxSetInputShape(model,0,input_shape);
//--- output buffers: class labels and class probabilities
   int output1[];
   float output2[][3];
//---
   ArrayResize(output1,(int)batch_size);
   ArrayResize(output2,(int)batch_size);
//--- set the shape of the first output (label): [batch_size]
   ulong output_shape[]= {batch_size};
   OnnxSetOutputShape(model,0,output_shape);
//--- set the shape of the second output (probabilities): [batch_size, 3]
   ulong output_shape2[]= {batch_size,3};
   OnnxSetOutputShape(model,1,output_shape2);
//--- run the model
   bool res=OnnxRun(model,ONNX_DEBUG_LOGS,input_data,output1,output2);
//--- classes are ready in output1[k];
   if(res)
     {
      for(int k=0; k<(int)batch_size; k++)
         model_classes_id[k]=output1[k];
     }
//---
   return(res);
  }

//+------------------------------------------------------------------+
//| Test all samples from IRIS dataset (150)                         |
//| Here we test all samples with batch=1, sample by sample          |
//+------------------------------------------------------------------+
bool TestAllIrisDataset(const long model,const string model_name,double &model_accuracy)
  {
   sIRISsample iris_samples[];
//--- prepare the dataset (hard-coded in iris.mqh)
   PrepareIrisDataset(iris_samples);
//--- test
   int total_samples=ArraySize(iris_samples);
   if(total_samples==0)
     {
      Print("iris dataset not prepared");
      return(false);
     }
//--- show dataset
   for(int k=0; k<total_samples; k++)
     {
      //PrintFormat("%d (%.2f,%.2f,%.2f,%.2f) class %d (%s)",iris_samples[k].sample_id,iris_samples[k].features[0],iris_samples[k].features[1],iris_samples[k].features[2],iris_samples[k].features[3],iris_samples[k].class_id,iris_samples[k].class_name);
     }
//--- array for output classes
   int model_output_classes_id[];
//--- check all Iris dataset samples
   int correct_results=0;
   for(int k=0; k<total_samples; k++)
     {
      //--- input array
      float iris_sample_input_data[1][4];
      //--- prepare input data from kth iris sample dataset
      iris_sample_input_data[0][0]=(float)iris_samples[k].features[0];
      iris_sample_input_data[0][1]=(float)iris_samples[k].features[1];
      iris_sample_input_data[0][2]=(float)iris_samples[k].features[2];
      iris_sample_input_data[0][3]=(float)iris_samples[k].features[3];
      //--- run model
      bool res=TestSamples(model,iris_sample_input_data,model_output_classes_id);
      //--- check result
      if(res)
        {
         if(model_output_classes_id[0]==iris_samples[k].class_id)
           {
            correct_results++;
           }
         else
           {
            PrintFormat("model:%s  sample=%d FAILED [class=%d, true class=%d] features=(%.2f,%.2f,%.2f,%.2f)",model_name,iris_samples[k].sample_id,model_output_classes_id[0],iris_samples[k].class_id,iris_samples[k].features[0],iris_samples[k].features[1],iris_samples[k].features[2],iris_samples[k].features[3]);
           }
        }
     }
   model_accuracy=1.0*correct_results/total_samples;
//---
   PrintFormat("model:%s   correct results: %.2f%%",model_name,100*model_accuracy);
//---
   return(true);
  }

//+------------------------------------------------------------------+
//| Here we test batch execution of the model                        |
//+------------------------------------------------------------------+
bool TestBatchExecution(const long model,const string model_name,double &model_accuracy)
  {
   model_accuracy=0;
//--- array for output classes
   int model_output_classes_id[];
   int correct_results=0;
   int total_results=0;
   bool res=false;
//--- run batch with 3 samples
   float input_data_batch3[3][4]=
     {
        {5.1f,3.5f,1.4f,0.2f}, // iris dataset sample id=1, Iris-setosa
        {6.3f,2.5f,4.9f,1.5f}, // iris dataset sample id=73, Iris-versicolor
        {6.3f,2.7f,4.9f,1.8f}  // iris dataset sample id=124, Iris-virginica
     };
   int correct_classes_batch3[3]= {0,1,2};
//--- run model
   res=TestSamples(model,input_data_batch3,model_output_classes_id);
   if(res)
     {
      //--- check result
      for(int j=0; j<ArraySize(model_output_classes_id); j++)
        {
         if(model_output_classes_id[j]==correct_classes_batch3[j])
            correct_results++;
         else
           {
            PrintFormat("model:%s FAILED [class=%d, true class=%d] features=(%.2f,%.2f,%.2f,%.2f)",model_name,model_output_classes_id[j],correct_classes_batch3[j],input_data_batch3[j][0],input_data_batch3[j][1],input_data_batch3[j][2],input_data_batch3[j][3]);
           }
         total_results++;
        }
     }
   else
      return(false);
//--- run batch with 10 samples
   float input_data_batch10[10][4]=
     {
        {5.5f,3.5f,1.3f,0.2f}, // iris dataset sample id=37 (Iris-setosa)
        {4.9f,3.1f,1.5f,0.1f}, // iris dataset sample id=10 (Iris-setosa)
        {4.4f,3.0f,1.3f,0.2f}, // iris dataset sample id=39 (Iris-setosa)
        {5.0f,3.3f,1.4f,0.2f}, // iris dataset sample id=50 (Iris-setosa)
        {7.0f,3.2f,4.7f,1.4f}, // iris dataset sample id=51 (Iris-versicolor)
        {6.4f,3.2f,4.5f,1.5f}, // iris dataset sample id=52 (Iris-versicolor)
        {6.3f,3.3f,6.0f,2.5f}, // iris dataset sample id=101 (Iris-virginica)
        {5.8f,2.7f,5.1f,1.9f}, // iris dataset sample id=102 (Iris-virginica)
        {7.1f,3.0f,5.9f,2.1f}, // iris dataset sample id=103 (Iris-virginica)
        {6.3f,2.9f,5.6f,1.8f}  // iris dataset sample id=104 (Iris-virginica)
     };
//--- correct classes for all 10 samples in the batch
   int correct_classes_batch10[10]= {0,0,0,0,1,1,2,2,2,2};
//--- run model
   res=TestSamples(model,input_data_batch10,model_output_classes_id);
//--- check result
   if(res)
     {
      for(int j=0; j<ArraySize(model_output_classes_id); j++)
        {
         if(model_output_classes_id[j]==correct_classes_batch10[j])
            correct_results++;
         else
           {
            PrintFormat("model:%s FAILED [class=%d, true class=%d] features=(%.2f,%.2f,%.2f,%.2f)",model_name,model_output_classes_id[j],correct_classes_batch10[j],input_data_batch10[j][0],input_data_batch10[j][1],input_data_batch10[j][2],input_data_batch10[j][3]);
           }
         total_results++;
        }
     }
   else
      return(false);
//--- calculate accuracy (multiply by 1.0 to avoid integer division)
   model_accuracy=1.0*correct_results/total_results;
//---
   return(res);
  }

//+------------------------------------------------------------------+
//| Script program start function                                    |
//+------------------------------------------------------------------+
int OnStart(void)
  {
   string model_name="SVCClassifier";
//--- create the model from the resource buffer
   long model=OnnxCreateFromBuffer(ExtModel,ONNX_DEFAULT);
   if(model==INVALID_HANDLE)
     {
      PrintFormat("model_name=%s OnnxCreateFromBuffer error %d",model_name,GetLastError());
     }
   else
     {
      //--- test all dataset
      double model_accuracy=0;
      //--- test sample by sample execution for all Iris dataset
      if(TestAllIrisDataset(model,model_name,model_accuracy))
         PrintFormat("model=%s all samples accuracy=%f",model_name,model_accuracy);
      else
         PrintFormat("error in testing model=%s ",model_name);
      //--- test batch execution for several samples
      if(TestBatchExecution(model,model_name,model_accuracy))
         PrintFormat("model=%s batch test accuracy=%f",model_name,model_accuracy);
      else
         PrintFormat("error in testing model=%s ",model_name);
      //--- release model
      OnnxRelease(model);
     }
   return(0);
  }
//+------------------------------------------------------------------+
```

The results of the script execution are displayed in the "Experts" tab of the MetaTrader 5 terminal.

    Iris_SVCClassifier (EURUSD,H1) model:SVCClassifier sample=84 FAILED [class=2, true class=1] features=(6.00,2.70,5.10,1.60]
    Iris_SVCClassifier (EURUSD,H1) model:SVCClassifier correct results: 99.33%
    Iris_SVCClassifier (EURUSD,H1) model=SVCClassifier all samples accuracy=0.993333
    Iris_SVCClassifier (EURUSD,H1) model=SVCClassifier batch test accuracy=1.000000

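The SVC model correctly classified 149 of the 150 samples, which is a very good result. It made a single classification error on the Iris dataset: sample #84 was predicted as class 2 (virginica) instead of class 1 (versicolor).

It is worth noting that the exported ONNX model achieves 99.33% accuracy on the full Iris dataset, which matches the accuracy of the original model.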
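The figures in the log can be checked with a few lines of arithmetic. The sketch below is illustrative only: the helper names `accuracy` and `agreement` are hypothetical and not part of the article's scripts, and the label lists are built by hand from the log, with the single error at sample #84.

```python
def accuracy(y_true, y_pred):
    """Fraction of positions where the predicted label equals the true label."""
    if len(y_true) != len(y_pred):
        raise ValueError("label lists must have the same length")
    correct = sum(1 for t, p in zip(y_true, y_pred) if t == p)
    return correct / len(y_true)


def agreement(pred_a, pred_b):
    """Fraction of samples on which two models return the same label."""
    return accuracy(pred_a, pred_b)


# True labels of the Iris dataset: 50 samples each of classes 0, 1 and 2.
y_true = [0] * 50 + [1] * 50 + [2] * 50

# Hand-built predictions reproducing the log: only sample #84 (index 83) is wrong.
y_pred = list(y_true)
y_pred[83] = 2

svc_accuracy = accuracy(y_true, y_pred)
print(f"correct results: {100 * svc_accuracy:.2f}%")  # 149 of 150 samples
```

The same `agreement` helper can be applied to the label arrays returned by the scikit-learn model and by the ONNX session to confirm that the two models agree sample by sample, which is a stricter check than comparing the two accuracy values.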

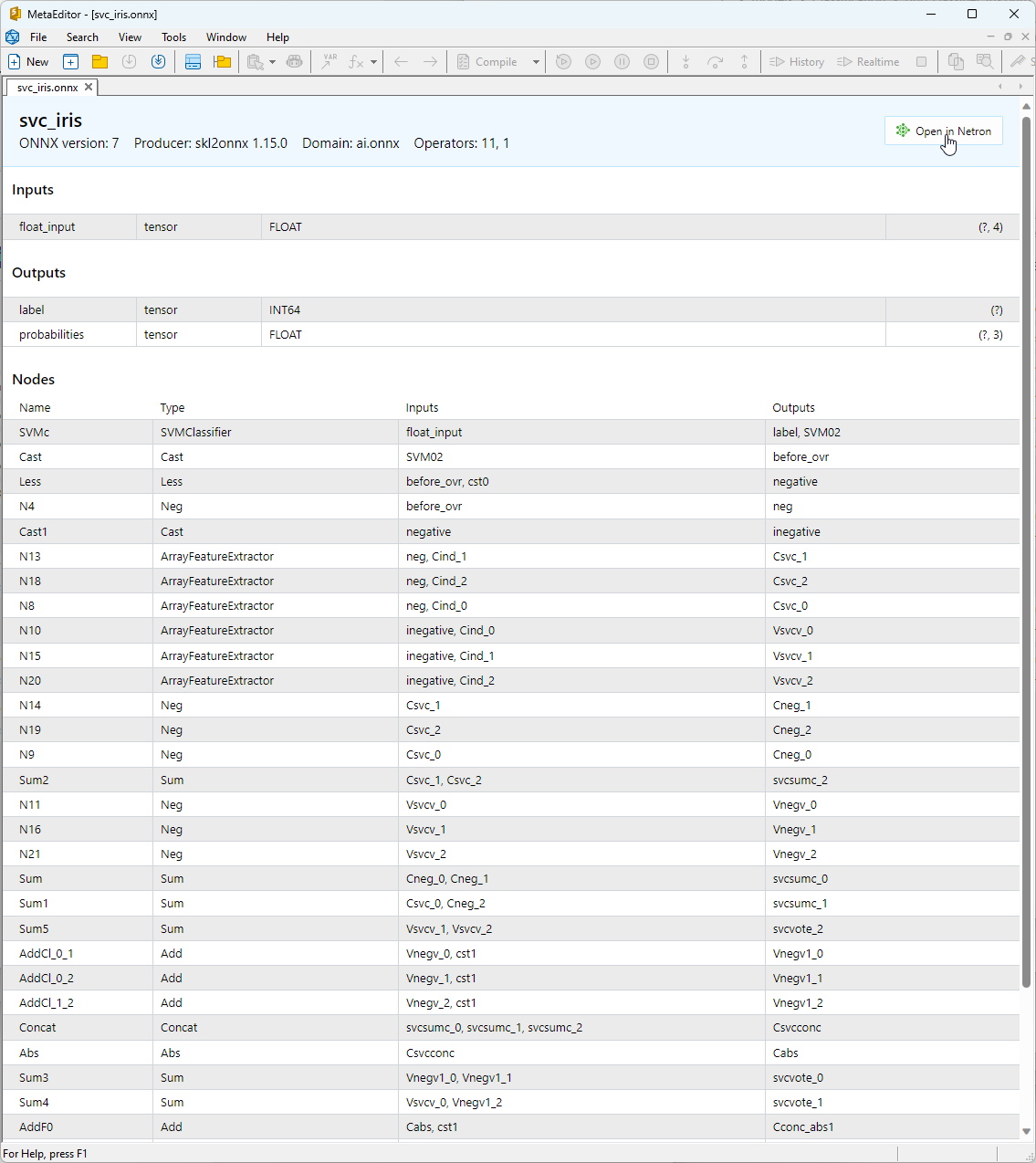

2.1.3.ONNX Representation of the SVC Classifier Model

You can view the resulting ONNX model in MetaEditor.

Fig. 13. ONNX model svc_iris.onnx in MetaEditor

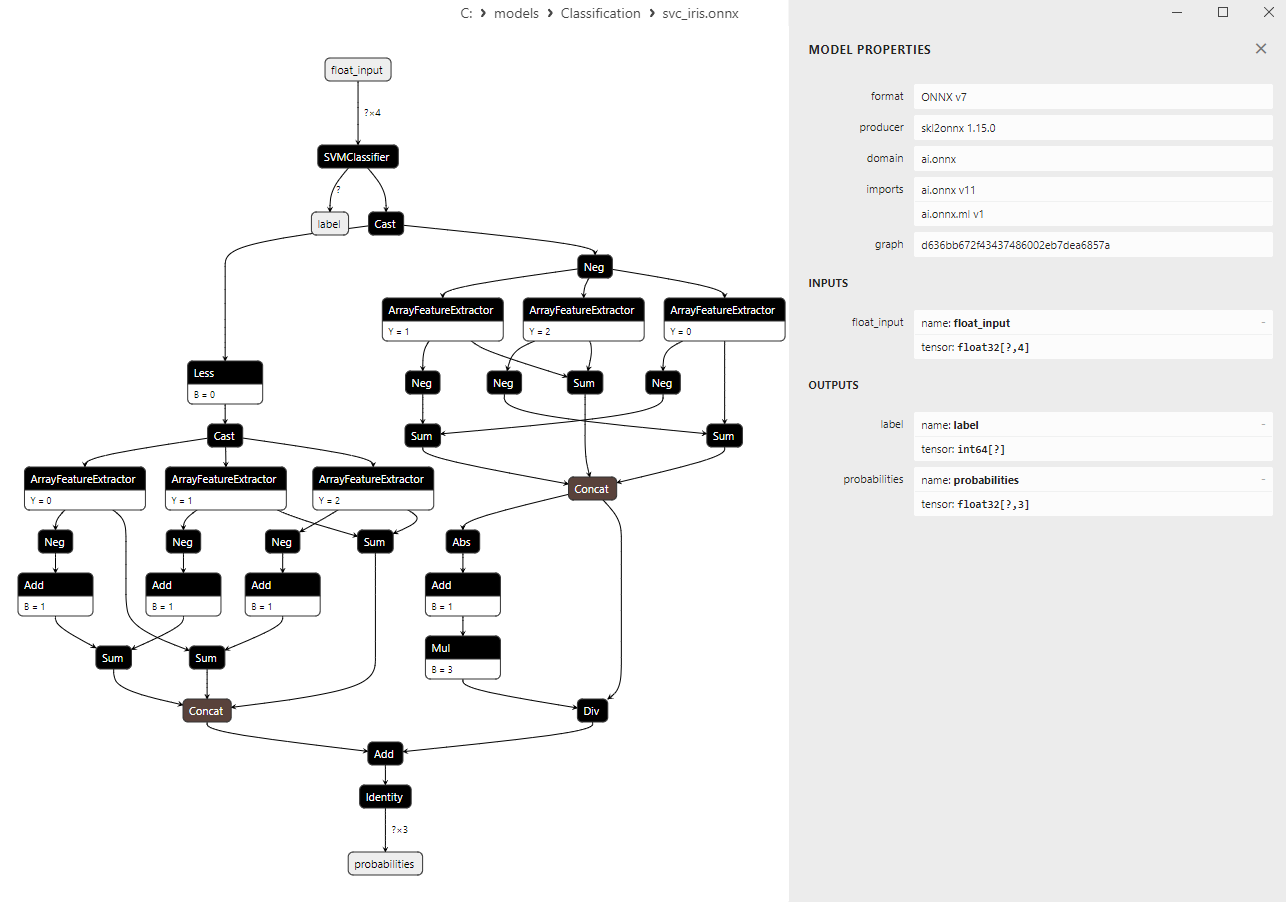

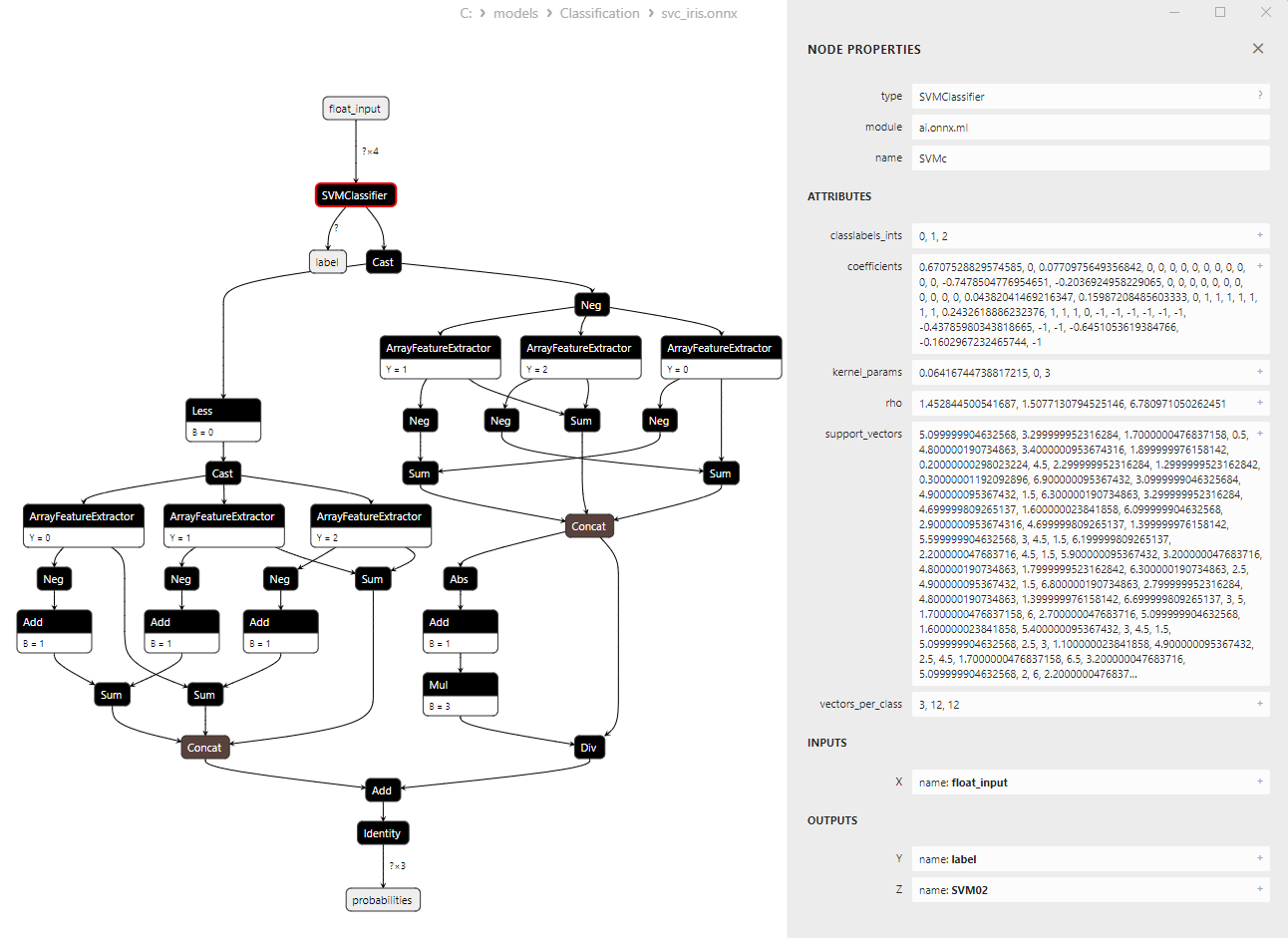

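For more detailed information about the model architecture, you can use Netron. To do this, click the "Open in Netron" button in the model description in MetaEditor.

Fig. 14. ONNX model svc_iris.onnx in Netron

Fig. 15. ONNX model svc_iris.onnx in Netron (parameters of the SVMClassifier ONNX operator)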

2.2.LinearSVC Classifier

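LinearSVC (Linear Support Vector Classification) is a powerful machine learning algorithm used for binary and multiclass classification tasks. It is based on the idea of finding the hyperplane that best separates the data.

Principles of LinearSVC:

- Finding the optimal hyperplane: The main idea of LinearSVC is to find the hyperplane that separates two classes of data as well as possible. A hyperplane is a multidimensional plane defined by a linear equation.

- Maximizing the margin: LinearSVC aims to maximize the margin (the distance between the hyperplane and the nearest data points). The larger the margin, the more reliably the hyperplane separates the classes.

- Handling linearly inseparable data: LinearSVC builds only linear boundaries and does not use kernel functions. It copes with overlapping classes through a soft margin: the regularization parameter C controls how strongly misclassified points are penalized. Data that requires a nonlinear boundary calls for a kernel-based model such as SVC.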
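To make the geometry concrete, here is a minimal sketch of a linear decision function. The weights `w` and bias `b` are made-up numbers for illustration, not coefficients of the trained model, and the helper names are hypothetical. The multiclass helper mirrors the one-vs-rest scheme that scikit-learn's LinearSVC uses by default.

```python
import math


def decision_value(w, b, x):
    """Signed score w.x + b; its sign tells on which side of the hyperplane x lies."""
    return sum(wi * xi for wi, xi in zip(w, x)) + b


def distance_to_hyperplane(w, b, x):
    """Geometric distance from x to the hyperplane w.x + b = 0."""
    norm = math.sqrt(sum(wi * wi for wi in w))
    return abs(decision_value(w, b, x)) / norm


def predict_one_vs_rest(weights, biases, x):
    """Multiclass prediction: one hyperplane per class, the highest score wins."""
    scores = [decision_value(w, b, x) for w, b in zip(weights, biases)]
    return scores.index(max(scores))


# Illustrative 2-D hyperplane 3*x1 + 4*x2 - 5 = 0 (assumed values).
w, b = [3.0, 4.0], -5.0
point = [3.0, 4.0]
print(decision_value(w, b, point))          # 3*3 + 4*4 - 5 = 20.0
print(distance_to_hyperplane(w, b, point))  # 20 / 5 = 4.0
```

The margin of a trained classifier is the smallest such distance over the training points closest to the hyperplane, which is the quantity the optimizer tries to make large.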

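Advantages of LinearSVC:

- Good generalization: LinearSVC generalizes well and can perform well on new, unseen data.

- Efficiency: LinearSVC processes large datasets quickly and requires relatively few computational resources.

- Tolerance of overlapping classes: Thanks to the soft margin, LinearSVC can still be trained when the classes are not perfectly linearly separable, although its decision boundary remains linear.

- Scalability: LinearSVC can be used efficiently in tasks with a large number of features and large volumes of data.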

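Limitations of LinearSVC:

- Linear separating hyperplanes only: LinearSVC builds only linear separating hyperplanes, which may be insufficient for complex classification tasks with nonlinear dependencies.

- Parameter selection: Choosing the right parameters (for example, the regularization parameter) may require expert knowledge or cross-validation.

- Sensitivity to outliers: LinearSVC can be sensitive to outliers in the data, which may affect classification quality.

- Model interpretability: Models created with LinearSVC may be harder to interpret than those of some other methods.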
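The role of the regularization parameter can be illustrated with the soft-margin objective that linear SVMs minimize. The sketch below uses the plain hinge loss for a binary problem with labels -1/+1. scikit-learn's LinearSVC defaults to the squared hinge loss, so this illustrates the idea rather than the library's exact objective. All numbers are made up, and `hinge_objective` is a hypothetical helper name.

```python
def hinge_objective(w, b, X, y, C):
    """0.5*||w||^2 + C * sum(max(0, 1 - y_i*(w.x_i + b))) for labels in {-1, +1}."""
    regularizer = 0.5 * sum(wi * wi for wi in w)
    loss = 0.0
    for xi, yi in zip(X, y):
        score = sum(wj * xj for wj, xj in zip(w, xi)) + b
        loss += max(0.0, 1.0 - yi * score)
    return regularizer + C * loss


# The last point is on the correct side but inside the margin.
X = [[2.0, 0.0], [-2.0, 0.0], [0.5, 0.0]]
y = [1, -1, 1]
w, b = [1.0, 0.0], 0.0

print(hinge_objective(w, b, X, y, C=1.0))   # 0.5 + 1.0 * 0.5 = 1.0
print(hinge_objective(w, b, X, y, C=10.0))  # 0.5 + 10.0 * 0.5 = 5.5
```

A larger C makes margin violations more expensive, so the optimizer fits the training data more tightly; a smaller C favors a wider margin. Cross-validation is the usual way to choose between the two.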

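LinearSVC is a powerful classification algorithm with good generalization ability and efficiency. It can be used in a variety of classification tasks, especially when the data can be separated by a linear hyperplane. For complex tasks that require modeling nonlinear dependencies, however, LinearSVC may be less suitable, and alternative methods with more complex decision boundaries should be considered.

2.2.1.Code for Creating the LinearSVC Classifier Model

This code demonstrates training a LinearSVC Classifier model on the Iris dataset, exporting it to ONNX format, and performing classification with the ONNX model. It also evaluates the accuracy of both the original model and the ONNX model.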

    # Iris_LinearSVC.py
    # The code demonstrates the process of training LinearSVC model on the Iris dataset, exporting it to ONNX format, and making predictions using the ONNX model.
    # It also evaluates the accuracy of both the original model and the ONNX model.
    # Copyright 2023, MetaQuotes Ltd.
    # https://www.mql5.com

    # import necessary libraries
    from sklearn import datasets
    from sklearn.svm import LinearSVC
    from sklearn.metrics import accuracy_score, classification_report
    from skl2onnx import convert_sklearn
    from skl2onnx.common.data_types import FloatTensorType
    import onnxruntime as ort
    import numpy as np
    from sys import argv

    # define the path for saving the model
    data_path = argv[0]
    last_index = data_path.rfind("\\") + 1
    data_path = data_path[0:last_index]

    # load the Iris dataset
    iris = datasets.load_iris()
    X = iris.data
    y = iris.target

    # create a LinearSVC model
    linear_svc_model = LinearSVC(C=1.0, max_iter=10000)

    # train the model on the entire dataset
    linear_svc_model.fit(X, y)

    # predict classes for the entire dataset
    y_pred = linear_svc_model.predict(X)

    # evaluate the model's accuracy
    accuracy = accuracy_score(y, y_pred)
    print("Accuracy of LinearSVC model:", accuracy)

    # display the classification report
    print("\nClassification Report:\n", classification_report(y, y_pred))

    # define the input data type
    initial_type = [('float_input', FloatTensorType([None, X.shape[1]]))]

    # export the model to ONNX format with float data type
    onnx_model = convert_sklearn(linear_svc_model, initial_types=initial_type, target_opset=12)

    # save the model to a file
    onnx_filename = data_path + "linear_svc_iris.onnx"
    with open(onnx_filename, "wb") as f:
        f.write(onnx_model.SerializeToString())

    # print model path
    print(f"Model saved to {onnx_filename}")

    # load the ONNX model and make predictions
    onnx_session = ort.InferenceSession(onnx_filename)
    input_name = onnx_session.get_inputs()[0].name
    output_name = onnx_session.get_outputs()[0].name

    # display information about input tensors in ONNX
    print("\nInformation about input tensors in ONNX:")
    for i, input_tensor in enumerate(onnx_session.get_inputs()):
        print(f"{i + 1}. Name: {input_tensor.name}, Data Type: {input_tensor.type}, Shape: {input_tensor.shape}")

    # display information about output tensors in ONNX
    print("\nInformation about output tensors in ONNX:")
    for i, output_tensor in enumerate(onnx_session.get_outputs()):
        print(f"{i + 1}. Name: {output_tensor.name}, Data Type: {output_tensor.type}, Shape: {output_tensor.shape}")

    # convert data to floating-point format (float32)
    X_float32 = X.astype(np.float32)

    # predict classes for the entire dataset using ONNX
    y_pred_onnx = onnx_session.run([output_name], {input_name: X_float32})[0]

    # evaluate the accuracy of the ONNX model
    accuracy_onnx = accuracy_score(y, y_pred_onnx)
    print("\nAccuracy of LinearSVC model in ONNX format:", accuracy_onnx)
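The script derives the output folder by searching `argv[0]` for the last backslash, which assumes a Windows-style path. A possible alternative that relies only on the standard `pathlib` module is sketched below. The function name `script_folder` and the example path are illustrative assumptions, not part of the original script.

```python
from pathlib import PureWindowsPath


def script_folder(script_path: str) -> str:
    """Return the folder that contains the script, with a trailing backslash."""
    return str(PureWindowsPath(script_path).parent) + "\\"


# Illustrative path only; in the real script the value comes from sys.argv[0].
example = r"C:\MQL5\Scripts\Iris_LinearSVC.py"
print(script_folder(example) + "linear_svc_iris.onnx")
```

`PureWindowsPath` accepts both backslashes and forward slashes as separators, so the helper behaves the same regardless of how the interpreter was given the script path.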

Output:

    Python
    Python  Classification Report:
    Python                 precision    recall  f1-score   support
    Python
    Python             0       1.00      1.00      1.00        50
    Python             1       0.96      0.94      0.95        50
    Python             2       0.94      0.96      0.95        50
    Python
    Python      accuracy                           0.97       150
    Python     macro avg       0.97      0.97      0.97       150
    Python  weighted avg       0.97      0.97      0.97       150
    Python
    Python  Model saved to C:\Users\user\AppData\Roaming\MetaQuotes\Terminal\D0E8209F77C8CF37AD8BF550E51FF075\MQL5\Scripts\linear_svc_iris.onnx
    Python
    Python  Information about input tensors in ONNX:
    Python  1. Name: float_input, Data Type: tensor(float), Shape: [None, 4]
    Python
    Python  Information about output tensors in ONNX:
    Python  1. Name: label, Data Type: tensor(int64), Shape: [None]
    Python  2. Name: probabilities, Data Type: tensor(float), Shape: [None, 3]
    Python
    Python  Accuracy of LinearSVC model in ONNX format: 0.9666666666666667

2.2.2.MQL5 Code for Working with the LinearSVC Classifier Model

    //+------------------------------------------------------------------+
    //|                                               Iris_LinearSVC.mq5 |
    //|                                  Copyright 2023, MetaQuotes Ltd. |
    //|                                             https://www.mql5.com |
    //+------------------------------------------------------------------+
    #property copyright "Copyright 2023, MetaQuotes Ltd."
    #property link      "https://www.mql5.com"
    #property version   "1.00"

    #include "iris.mqh"
    #resource "linear_svc_iris.onnx" as const uchar ExtModel[];

    //+------------------------------------------------------------------+
    //| Test IRIS dataset samples                                        |
    //+------------------------------------------------------------------+
    bool TestSamples(long model,float &input_data[][4], int &model_classes_id[])
      {
       //--- check number of input samples
       ulong batch_size=input_data.Range(0);
       if(batch_size==0)
          return(false);
       //--- prepare output array
       ArrayResize(model_classes_id,(int)batch_size);
       //--- set the input shape: [batch_size, number of features]
       ulong input_shape[]= { batch_size, input_data.Range(1)};
       OnnxSetInputShape(model,0,input_shape);
       //--- output 0: class labels, output 1: per-class scores
       int output1[];
       float output2[][3];
       //---
       ArrayResize(output1,(int)batch_size);
       ArrayResize(output2,(int)batch_size);
       //---
       ulong output_shape[]= {batch_size};
       OnnxSetOutputShape(model,0,output_shape);
       //---
       ulong output_shape2[]= {batch_size,3};
       OnnxSetOutputShape(model,1,output_shape2);
       //---
       bool res=OnnxRun(model,ONNX_DEBUG_LOGS,input_data,output1,output2);
       //--- classes are ready in output1[k];
       if(res)
         {
          for(int k=0; k<(int)batch_size; k++)
             model_classes_id[k]=output1[k];
         }
       //---
       return(res);
      }
    //+------------------------------------------------------------------+
    //| Test all samples from IRIS dataset (150)                         |
    //| Here we test all samples with batch=1, sample by sample          |
    //+------------------------------------------------------------------+
    bool TestAllIrisDataset(const long model,const string model_name,double &model_accuracy)
      {
       sIRISsample iris_samples[];
       //--- load dataset from file
       PrepareIrisDataset(iris_samples);
       //--- test
       int total_samples=ArraySize(iris_samples);
       if(total_samples==0)
         {
          Print("iris dataset not prepared");
          return(false);
         }
       //--- show dataset
       for(int k=0; k<total_samples; k++)
         {
          //PrintFormat("%d (%.2f,%.2f,%.2f,%.2f) class %d (%s)",iris_samples[k].sample_id,iris_samples[k].features[0],iris_samples[k].features[1],iris_samples[k].features[2],iris_samples[k].features[3],iris_samples[k].class_id,iris_samples[k].class_name);
         }
       //--- array for output classes
       int model_output_classes_id[];
       //--- check all Iris dataset samples
       int correct_results=0;
       for(int k=0; k<total_samples; k++)
         {
          //--- input array
          float iris_sample_input_data[1][4];
          //--- prepare input data from kth iris sample dataset
          iris_sample_input_data[0][0]=(float)iris_samples[k].features[0];
          iris_sample_input_data[0][1]=(float)iris_samples[k].features[1];
          iris_sample_input_data[0][2]=(float)iris_samples[k].features[2];
          iris_sample_input_data[0][3]=(float)iris_samples[k].features[3];
          //--- run model
          bool res=TestSamples(model,iris_sample_input_data,model_output_classes_id);
          //--- check result
          if(res)
            {
             if(model_output_classes_id[0]==iris_samples[k].class_id)
               {
                correct_results++;
               }
             else
               {
                PrintFormat("model:%s sample=%d FAILED [class=%d, true class=%d] features=(%.2f,%.2f,%.2f,%.2f]",model_name,iris_samples[k].sample_id,model_output_classes_id[0],iris_samples[k].class_id,iris_samples[k].features[0],iris_samples[k].features[1],iris_samples[k].features[2],iris_samples[k].features[3]);
               }
            }
         }
       model_accuracy=1.0*correct_results/total_samples;
       //---
       PrintFormat("model:%s correct results: %.2f%%",model_name,100*model_accuracy);
       //---
       return(true);
      }
    //+------------------------------------------------------------------+
    //| Here we test batch execution of the model                        |
    //+------------------------------------------------------------------+
    bool TestBatchExecution(const long model,const string model_name,double &model_accuracy)
      {
       model_accuracy=0;
       //--- array for output classes
       int model_output_classes_id[];
       int correct_results=0;
       int total_results=0;
       bool res=false;
       //--- run batch with 3 samples
       float input_data_batch3[3][4]=
         {
          {5.1f,3.5f,1.4f,0.2f}, // iris dataset sample id=1, Iris-setosa
          {6.3f,2.5f,4.9f,1.5f}, // iris dataset sample id=73, Iris-versicolor
          {6.3f,2.7f,4.9f,1.8f}  // iris dataset sample id=124, Iris-virginica
         };
       int correct_classes_batch3[3]= {0,1,2};
       //--- run model
       res=TestSamples(model,input_data_batch3,model_output_classes_id);
       if(res)
         {
          //--- check result
          for(int j=0; j<ArraySize(model_output_classes_id); j++)
            {
             if(model_output_classes_id[j]==correct_classes_batch3[j])
                correct_results++;
             else
               {
                PrintFormat("model:%s FAILED [class=%d, true class=%d] features=(%.2f,%.2f,%.2f,%.2f)",model_name,model_output_classes_id[j],correct_classes_batch3[j],input_data_batch3[j][0],input_data_batch3[j][1],input_data_batch3[j][2],input_data_batch3[j][3]);
               }
             total_results++;
            }
         }
       else
          return(false);
       //--- run batch with 10 samples
       float input_data_batch10[10][4]=
         {
          {5.5f,3.5f,1.3f,0.2f}, // iris dataset sample id=37 (Iris-setosa)
          {4.9f,3.1f,1.5f,0.1f}, // iris dataset sample id=38 (Iris-setosa)
          {4.4f,3.0f,1.3f,0.2f}, // iris dataset sample id=39 (Iris-setosa)
          {5.0f,3.3f,1.4f,0.2f}, // iris dataset sample id=50 (Iris-setosa)
          {7.0f,3.2f,4.7f,1.4f}, // iris dataset sample id=51 (Iris-versicolor)
          {6.4f,3.2f,4.5f,1.5f}, // iris dataset sample id=52 (Iris-versicolor)
          {6.3f,3.3f,6.0f,2.5f}, // iris dataset sample id=101 (Iris-virginica)
          {5.8f,2.7f,5.1f,1.9f}, // iris dataset sample id=102 (Iris-virginica)
          {7.1f,3.0f,5.9f,2.1f}, // iris dataset sample id=103 (Iris-virginica)
          {6.3f,2.9f,5.6f,1.8f}  // iris dataset sample id=104 (Iris-virginica)
         };
       //--- correct classes for all 10 samples in the batch
       int correct_classes_batch10[10]= {0,0,0,0,1,1,2,2,2,2};
       //--- run model
       res=TestSamples(model,input_data_batch10,model_output_classes_id);
       //--- check result
       if(res)
         {
          for(int j=0; j<ArraySize(model_output_classes_id); j++)
            {
             if(model_output_classes_id[j]==correct_classes_batch10[j])
                correct_results++;
             else
               {
                PrintFormat("model:%s FAILED [class=%d, true class=%d] features=(%.2f,%.2f,%.2f,%.2f)",model_name,model_output_classes_id[j],correct_classes_batch10[j],input_data_batch10[j][0],input_data_batch10[j][1],input_data_batch10[j][2],input_data_batch10[j][3]);
               }
             total_results++;
            }
         }
       else
          return(false);
       //--- calculate accuracy (1.0* forces floating-point division; int/int would truncate to 0 or 1)
       model_accuracy=1.0*correct_results/total_results;
       //---
       return(res);
      }
    //+------------------------------------------------------------------+
    //| Script program start function                                    |
    //+------------------------------------------------------------------+
    int OnStart(void)
      {
       string model_name="LinearSVC";
       //--- create the model from the embedded resource
       long model=OnnxCreateFromBuffer(ExtModel,ONNX_DEFAULT);
       if(model==INVALID_HANDLE)
         {
          PrintFormat("model_name=%s OnnxCreate error %d for",model_name,GetLastError());
         }
       else
         {
          //--- test all dataset
          double model_accuracy=0;
          //-- test sample by sample execution for all Iris dataset
          if(TestAllIrisDataset(model,model_name,model_accuracy))
             PrintFormat("model=%s all samples accuracy=%f",model_name,model_accuracy);
          else
             PrintFormat("error in testing model=%s ",model_name);
          //--- test batch execution for several samples
          if(TestBatchExecution(model,model_name,model_accuracy))
             PrintFormat("model=%s batch test accuracy=%f",model_name,model_accuracy);
          else
             PrintFormat("error in testing model=%s ",model_name);
          //--- release model
          OnnxRelease(model);
         }
       return(0);
      }
    //+------------------------------------------------------------------+

Output:

    Iris_LinearSVC (EURUSD,H1) model:LinearSVC sample=71 FAILED [class=2, true class=1] features=(5.90,3.20,4.80,1.80]
    Iris_LinearSVC (EURUSD,H1) model:LinearSVC sample=84 FAILED [class=2, true class=1] features=(6.00,2.70,5.10,1.60]
    Iris_LinearSVC (EURUSD,H1) model:LinearSVC sample=85 FAILED [class=2, true class=1] features=(5.40,3.00,4.50,1.50]
    Iris_LinearSVC (EURUSD,H1) model:LinearSVC sample=130 FAILED [class=1, true class=2] features=(7.20,3.00,5.80,1.60]
    Iris_LinearSVC (EURUSD,H1) model:LinearSVC sample=134 FAILED [class=1, true class=2] features=(6.30,2.80,5.10,1.50]
    Iris_LinearSVC (EURUSD,H1) model:LinearSVC correct results: 96.67%
    Iris_LinearSVC (EURUSD,H1) model=LinearSVC all samples accuracy=0.966667
    Iris_LinearSVC (EURUSD,H1) model=LinearSVC batch test accuracy=1.000000

The exported ONNX model achieves 96.67% accuracy on the full Iris dataset, which matches the accuracy of the original model.
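The five misclassified samples in the MQL5 log are consistent with the classification report printed by the Python script. The sketch below rebuilds the per-class counts from the log by hand (three class-1 samples predicted as class 2, two class-2 samples predicted as class 1) and recomputes precision and recall. The helper name `precision_recall` is hypothetical.

```python
def precision_recall(confusion, cls):
    """Precision and recall of one class from a confusion matrix.

    confusion[t][p] is the number of samples of true class t predicted as p.
    """
    true_positive = confusion[cls][cls]
    predicted_as_cls = sum(row[cls] for row in confusion)
    actual_cls = sum(confusion[cls])
    return true_positive / predicted_as_cls, true_positive / actual_cls


# Counts taken from the MQL5 log: samples 71, 84, 85 (true 1 -> predicted 2)
# and samples 130, 134 (true 2 -> predicted 1); everything else is correct.
confusion = [
    [50, 0, 0],
    [0, 47, 3],
    [0, 2, 48],
]

for cls in range(3):
    p, r = precision_recall(confusion, cls)
    print(f"class {cls}: precision={p:.2f} recall={r:.2f}")

total_correct = sum(confusion[i][i] for i in range(3))
print(f"accuracy={total_correct / 150:.4f}")  # 145 of 150 samples
```

The rounded values agree with the report: class 1 has precision 0.96 and recall 0.94, class 2 has precision 0.94 and recall 0.96.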

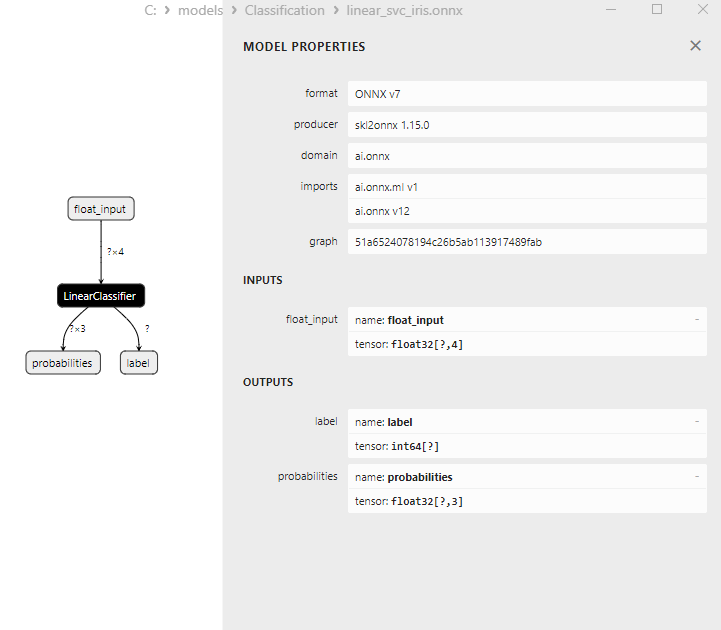

2.2.3.ONNX Representation of the LinearSVC Classifier Model

Fig. 16. ONNX representation of the LinearSVC Classifier model in Netron

2.3.NuSVC Classifier

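Nu-Support Vector Classification (NuSVC) is a powerful machine learning algorithm based on the Support Vector Machine (SVM) approach.

Principles of NuSVC:

- Support Vector Machine (SVM): NuSVC is a variant of SVM used for binary and multiclass classification tasks. The core principle of SVM is to find the optimal separating hyperplane, the one that separates the classes with the largest possible margin.

- The Nu parameter: A key parameter of NuSVC is Nu (nu), which controls model complexity. It is an upper bound on the fraction of margin errors and a lower bound on the fraction of support vectors. Nu takes values in the interval (0, 1]; for example, nu=0.5 means that at most about half of the training samples may be margin errors and at least about half will be support vectors.

- Parameter tuning: Determining the best values of Nu and the other hyperparameters may require cross-validation and a search over candidate values on the training data.

- Kernel functions: NuSVC can use various kernel functions, such as linear, radial basis function (RBF), polynomial, and others. The kernel function determines how the feature space is transformed to find the separating hyperplane.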
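For reference, the three kernels named in the list above can be written out directly. This is a plain-Python sketch of the standard formulas. The parameter names `gamma`, `coef0` and `degree` follow scikit-learn's naming, while the default values used here are arbitrary choices for the example.

```python
import math


def linear_kernel(x, z):
    """K(x, z) = x.z"""
    return sum(a * b for a, b in zip(x, z))


def polynomial_kernel(x, z, degree=3, gamma=1.0, coef0=0.0):
    """K(x, z) = (gamma * x.z + coef0) ** degree"""
    return (gamma * linear_kernel(x, z) + coef0) ** degree


def rbf_kernel(x, z, gamma=1.0):
    """K(x, z) = exp(-gamma * ||x - z||^2)"""
    squared_distance = sum((a - b) ** 2 for a, b in zip(x, z))
    return math.exp(-gamma * squared_distance)


x, z = [1.0, 2.0], [3.0, 0.5]
print(linear_kernel(x, z))                # 1*3 + 2*0.5 = 4.0
print(polynomial_kernel(x, z, degree=2))  # 4.0 ** 2 = 16.0
print(rbf_kernel(x, x))                   # identical points give 1.0
```

The NuSVC script in this section uses `kernel='linear'`, which corresponds to the first of these functions.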

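Advantages of NuSVC:

- Efficiency in high-dimensional spaces: NuSVC can work efficiently in high-dimensional spaces, which makes it suitable for tasks with a large number of features.

- Robustness to outliers: Because the decision boundary is determined by the support vectors, SVM, and NuSVC in particular, is robust to outliers in the data.

- Control over model complexity: The Nu parameter controls model complexity and balances fitting the data against generalization.

- Good generalization: SVM, and NuSVC in particular, generalizes well and performs well on new, previously unseen data.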

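Limitations of NuSVC:

- Inefficiency with large data volumes: Because of its computational complexity, NuSVC can be inefficient when trained on large amounts of data.

- Required parameter tuning: Tuning the Nu parameter and the kernel function may take time and computational resources.

- Dependence on the kernel choice: The effectiveness of NuSVC depends heavily on the choice of kernel function, and some tasks may require experimenting with different kernels.

- Model interpretability: SVM and NuSVC deliver excellent results, but their models can be hard to interpret, especially when nonlinear kernels are used.

Nu-Support Vector Classification (NuSVC) is a powerful SVM-based classification method with advantages such as robustness to outliers and good generalization. However, its effectiveness depends on the choice of parameters and kernel function, and it can be inefficient for large amounts of data. It is essential to choose the parameters carefully and to adapt the method to the specific classification task.

2.3.1.Code for Creating the NuSVC Classifier Model

This code demonstrates training a NuSVC Classifier model on the Iris dataset, exporting it to ONNX format, and performing classification with the ONNX model. It also evaluates the accuracy of both the original model and the ONNX model.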

    # Iris_NuSVC.py
    # The code demonstrates the process of training NuSVC model on the Iris dataset, exporting it to ONNX format, and making predictions using the ONNX model.
    # It also evaluates the accuracy of both the original model and the ONNX model.
    # Copyright 2023, MetaQuotes Ltd.
    # https://www.mql5.com

    # import necessary libraries
    from sklearn import datasets
    from sklearn.svm import NuSVC
    from sklearn.metrics import accuracy_score, classification_report
    from skl2onnx import convert_sklearn
    from skl2onnx.common.data_types import FloatTensorType
    import onnxruntime as ort
    import numpy as np
    from sys import argv

    # define the path for saving the model
    data_path = argv[0]
    last_index = data_path.rfind("\\") + 1
    data_path = data_path[0:last_index]

    # load the Iris dataset
    iris = datasets.load_iris()
    X = iris.data
    y = iris.target

    # create a NuSVC model
    nusvc_model = NuSVC(nu=0.5, kernel='linear')

    # train the model on the entire dataset
    nusvc_model.fit(X, y)

    # predict classes for the entire dataset
    y_pred = nusvc_model.predict(X)

    # evaluate the model's accuracy
    accuracy = accuracy_score(y, y_pred)
    print("Accuracy of NuSVC model:", accuracy)

    # display the classification report
    print("\nClassification Report:\n", classification_report(y, y_pred))

    # define the input data type
    initial_type = [('float_input', FloatTensorType([None, X.shape[1]]))]

    # export the model to ONNX format with float data type
    onnx_model = convert_sklearn(nusvc_model, initial_types=initial_type, target_opset=12)

    # save the model to a file
    onnx_filename = data_path + "nusvc_iris.onnx"
    with open(onnx_filename, "wb") as f:
        f.write(onnx_model.SerializeToString())

    # print model path
    print(f"Model saved to {onnx_filename}")

    # load the ONNX model and make predictions
    onnx_session = ort.InferenceSession(onnx_filename)
    input_name = onnx_session.get_inputs()[0].name
    output_name = onnx_session.get_outputs()[0].name

    # display information about input tensors in ONNX
    print("\nInformation about input tensors in ONNX:")
    for i, input_tensor in enumerate(onnx_session.get_inputs()):
        print(f"{i + 1}. Name: {input_tensor.name}, Data Type: {input_tensor.type}, Shape: {input_tensor.shape}")

    # display information about output tensors in ONNX
    print("\nInformation about output tensors in ONNX:")
    for i, output_tensor in enumerate(onnx_session.get_outputs()):
        print(f"{i + 1}. Name: {output_tensor.name}, Data Type: {output_tensor.type}, Shape: {output_tensor.shape}")

    # convert data to floating-point format (float32)
    X_float32 = X.astype(np.float32)

    # predict classes for the entire dataset using ONNX
    y_pred_onnx = onnx_session.run([output_name], {input_name: X_float32})[0]

    # evaluate the accuracy of the ONNX model
    accuracy_onnx = accuracy_score(y, y_pred_onnx)
    print("\nAccuracy of NuSVC model in ONNX format:", accuracy_onnx)

Output:

    Python
    Python  Classification Report:
    Python                 precision    recall  f1-score   support
    Python
    Python             0       1.00      1.00      1.00        50
    Python             1       0.96      0.96      0.96        50
    Python             2       0.96      0.96      0.96        50
    Python
    Python      accuracy                           0.97       150
    Python     macro avg       0.97      0.97      0.97       150
    Python  weighted avg       0.97      0.97      0.97       150
    Python
    Python  Model saved to C:\Users\user\AppData\Roaming\MetaQuotes\Terminal\D0E8209F77C8CF37AD8BF550E51FF075\MQL5\Scripts\nusvc_iris.onnx
    Python
    Python  Information about input tensors in ONNX:
    Python  1. Name: float_input, Data Type: tensor(float), Shape: [None, 4]
    Python
    Python  Information about output tensors in ONNX:
    Python  1. Name: label, Data Type: tensor(int64), Shape: [None]
    Python  2. Name: probabilities, Data Type: tensor(float), Shape: [None, 3]
    Python
    Python  Accuracy of NuSVC model in ONNX format: 0.9733333333333334

2.3.2.MQL5 Code for Working with the NuSVC Classifier Model

    //+------------------------------------------------------------------+
    //|                                                   Iris_NuSVC.mq5 |
    //|                                  Copyright 2023, MetaQuotes Ltd. |
    //|                                             https://www.mql5.com |
    //+------------------------------------------------------------------+
    #property copyright "Copyright 2023, MetaQuotes Ltd."
    #property link      "https://www.mql5.com"
    #property version   "1.00"

    #include "iris.mqh"
    #resource "nusvc_iris.onnx" as const uchar ExtModel[];

    //+------------------------------------------------------------------+
    //| Test IRIS dataset samples                                        |
    //+------------------------------------------------------------------+
    bool TestSamples(long model,float &input_data[][4], int &model_classes_id[])
      {
       //--- check number of input samples
       ulong batch_size=input_data.Range(0);
       if(batch_size==0)
          return(false);
       //--- prepare output array
       ArrayResize(model_classes_id,(int)batch_size);
       //--- set the input shape: [batch_size, number of features]
       ulong input_shape[]= { batch_size, input_data.Range(1)};
       OnnxSetInputShape(model,0,input_shape);
       //--- output 0: class labels, output 1: per-class scores
       int output1[];
       float output2[][3];
       //---
       ArrayResize(output1,(int)batch_size);
       ArrayResize(output2,(int)batch_size);
       //---
       ulong output_shape[]= {batch_size};
       OnnxSetOutputShape(model,0,output_shape);
       //---
       ulong output_shape2[]= {batch_size,3};
       OnnxSetOutputShape(model,1,output_shape2);
       //---
       bool res=OnnxRun(model,ONNX_DEBUG_LOGS,input_data,output1,output2);
       //--- classes are ready in output1[k];
       if(res)
         {
          for(int k=0; k<(int)batch_size; k++)
             model_classes_id[k]=output1[k];
         }
       //---
       return(res);
      }
    //+------------------------------------------------------------------+
    //| Test all samples from IRIS dataset (150)                         |
    //| Here we test all samples with batch=1, sample by sample          |
    //+------------------------------------------------------------------+
    bool TestAllIrisDataset(const long model,const string model_name,double &model_accuracy)
      {
       sIRISsample iris_samples[];
       //--- load dataset from file
       PrepareIrisDataset(iris_samples);
       //--- test
       int total_samples=ArraySize(iris_samples);
       if(total_samples==0)
         {
          Print("iris dataset not prepared");
          return(false);
         }
       //--- show dataset
       for(int k=0; k<total_samples; k++)
         {
          //PrintFormat("%d (%.2f,%.2f,%.2f,%.2f) class %d (%s)",iris_samples[k].sample_id,iris_samples[k].features[0],iris_samples[k].features[1],iris_samples[k].features[2],iris_samples[k].features[3],iris_samples[k].class_id,iris_samples[k].class_name);
         }
       //--- array for output classes
       int model_output_classes_id[];
       //--- check all Iris dataset samples
       int correct_results=0;
       for(int k=0; k<total_samples; k++)
         {
          //--- input array
          float iris_sample_input_data[1][4];
          //--- prepare input data from kth iris sample dataset
          iris_sample_input_data[0][0]=(float)iris_samples[k].features[0];
          iris_sample_input_data[0][1]=(float)iris_samples[k].features[1];
          iris_sample_input_data[0][2]=(float)iris_samples[k].features[2];
          iris_sample_input_data[0][3]=(float)iris_samples[k].features[3];
          //--- run model
          bool res=TestSamples(model,iris_sample_input_data,model_output_classes_id);
          //--- check result
          if(res)
            {
             if(model_output_classes_id[0]==iris_samples[k].class_id)
               {
                correct_results++;
               }
             else
               {
                PrintFormat("model:%s sample=%d FAILED [class=%d, true class=%d] features=(%.2f,%.2f,%.2f,%.2f]",model_name,iris_samples[k].sample_id,model_output_classes_id[0],iris_samples[k].class_id,iris_samples[k].features[0],iris_samples[k].features[1],iris_samples[k].features[2],iris_samples[k].features[3]);
               }
            }
         }
       model_accuracy=1.0*correct_results/total_samples;
       //---
       PrintFormat("model:%s correct results: %.2f%%",model_name,100*model_accuracy);
       //---
       return(true);
      }
    //+------------------------------------------------------------------+
    //| Here we test batch execution of the model                        |
    //+------------------------------------------------------------------+
    bool TestBatchExecution(const long model,const string model_name,double &model_accuracy)
      {
       model_accuracy=0;
       //--- array for output classes
       int model_output_classes_id[];
       int correct_results=0;
       int total_results=0;
       bool res=false;
       //--- run batch with 3 samples
       float input_data_batch3[3][4]=
         {
          {5.1f,3.5f,1.4f,0.2f}, // iris dataset sample id=1, Iris-setosa
          {6.3f,2.5f,4.9f,1.5f}, // iris dataset sample id=73, Iris-versicolor
          {6.3f,2.7f,4.9f,1.8f}  // iris dataset sample id=124, Iris-virginica
         };
       int correct_classes_batch3[3]= {0,1,2};
       //--- run model
       res=TestSamples(model,input_data_batch3,model_output_classes_id);
       if(res)
         {
          //--- check result
          for(int j=0; j<ArraySize(model_output_classes_id); j++)
            {
             if(model_output_classes_id[j]==correct_classes_batch3[j])
                correct_results++;
             else
               {
                PrintFormat("model:%s FAILED [class=%d, true class=%d] features=(%.2f,%.2f,%.2f,%.2f)",model_name,model_output_classes_id[j],correct_classes_batch3[j],input_data_batch3[j][0],input_data_batch3[j][1],input_data_batch3[j][2],input_data_batch3[j][3]);
               }
             total_results++;
            }
         }
       else
          return(false);
       //--- run batch with 10 samples
       float input_data_batch10[10][4]=
         {
          {5.5f,3.5f,1.3f,0.2f}, // iris dataset sample id=37 (Iris-setosa)
          {4.9f,3.1f,1.5f,0.1f}, // iris dataset sample id=38 (Iris-setosa)
          {4.4f,3.0f,1.3f,0.2f}, // iris dataset sample id=39 (Iris-setosa)
          {5.0f,3.3f,1.4f,0.2f}, // iris dataset sample id=50 (Iris-setosa)
          {7.0f,3.2f,4.7f,1.4f}, // iris dataset sample id=51 (Iris-versicolor)
          {6.4f,3.2f,4.5f,1.5f}, // iris dataset sample id=52 (Iris-versicolor)
          {6.3f,3.3f,6.0f,2.5f}, // iris dataset sample id=101 (Iris-virginica)
          {5.8f,2.7f,5.1f,1.9f}, // iris dataset sample id=102 (Iris-virginica)
          {7.1f,3.0f,5.9f,2.1f}, // iris dataset sample id=103 (Iris-virginica)
          {6.3f,2.9f,5.6f,1.8f}  // iris dataset sample id=104 (Iris-virginica)
         };
       //--- correct classes for all 10 samples in the batch
       int correct_classes_batch10[10]= {0,0,0,0,1,1,2,2,2,2};
       //--- run model
       res=TestSamples(model,input_data_batch10,model_output_classes_id);
       //--- check result
       if(res)
         {
          for(int j=0; j<ArraySize(model_output_classes_id); j++)
            {
             if(model_output_classes_id[j]==correct_classes_batch10[j])
                correct_results++;
             else
               {
                PrintFormat("model:%s FAILED [class=%d, true class=%d] features=(%.2f,%.2f,%.2f,%.2f)",model_name,model_output_classes_id[j],correct_classes_batch10[j],input_data_batch10[j][0],input_data_batch10[j][1],input_data_batch10[j][2],input_data_batch10[j][3]);
               }
             total_results++;
            }
         }
       else
          return(false);
       //--- calculate accuracy (1.0* forces floating-point division; int/int would truncate to 0 or 1)
       model_accuracy=1.0*correct_results/total_results;
       //---
       return(res);
      }
    //+------------------------------------------------------------------+
    //| Script program start function                                    |
    //+------------------------------------------------------------------+
    int OnStart(void)
      {
       string model_name="NuSVC";
       //--- create the model from the embedded resource
       long model=OnnxCreateFromBuffer(ExtModel,ONNX_DEFAULT);
       if(model==INVALID_HANDLE)
         {
          PrintFormat("model_name=%s OnnxCreate error %d for",model_name,GetLastError());
         }
       else
         {
          //--- test all dataset
          double model_accuracy=0;
          //-- test sample by sample execution for all Iris dataset
          if(TestAllIrisDataset(model,model_name,model_accuracy))
             PrintFormat("model=%s all samples accuracy=%f",model_name,model_accuracy);
          else
             PrintFormat("error in testing model=%s ",model_name);
          //--- test batch execution for several samples
          if(TestBatchExecution(model,model_name,model_accuracy))
             PrintFormat("model=%s batch test accuracy=%f",model_name,model_accuracy);
          else
             PrintFormat("error in testing model=%s ",model_name);
          //--- release model
          OnnxRelease(model);
         }
       return(0);
      }
    //+------------------------------------------------------------------+

Output:

    Iris_NuSVC (EURUSD,H1) model:NuSVC sample=78 FAILED [class=2, true class=1] features=(6.70,3.00,5.00,1.70]
    Iris_NuSVC (EURUSD,H1) model:NuSVC sample=84 FAILED [class=2, true class=1] features=(6.00,2.70,5.10,1.60]
    Iris_NuSVC (EURUSD,H1) model:NuSVC sample=107 FAILED [class=1, true class=2] features=(4.90,2.50,4.50,1.70]
    Iris_NuSVC (EURUSD,H1) model:NuSVC sample=139 FAILED [class=1, true class=2] features=(6.00,3.00,4.80,1.80]
    Iris_NuSVC (EURUSD,H1) model:NuSVC correct results: 97.33%
    Iris_NuSVC (EURUSD,H1) model=NuSVC all samples accuracy=0.973333
    Iris_NuSVC (EURUSD,H1) model=NuSVC batch test accuracy=1.000000

The exported ONNX model achieves 97.33% accuracy on the full Iris dataset, which matches the accuracy of the original model.

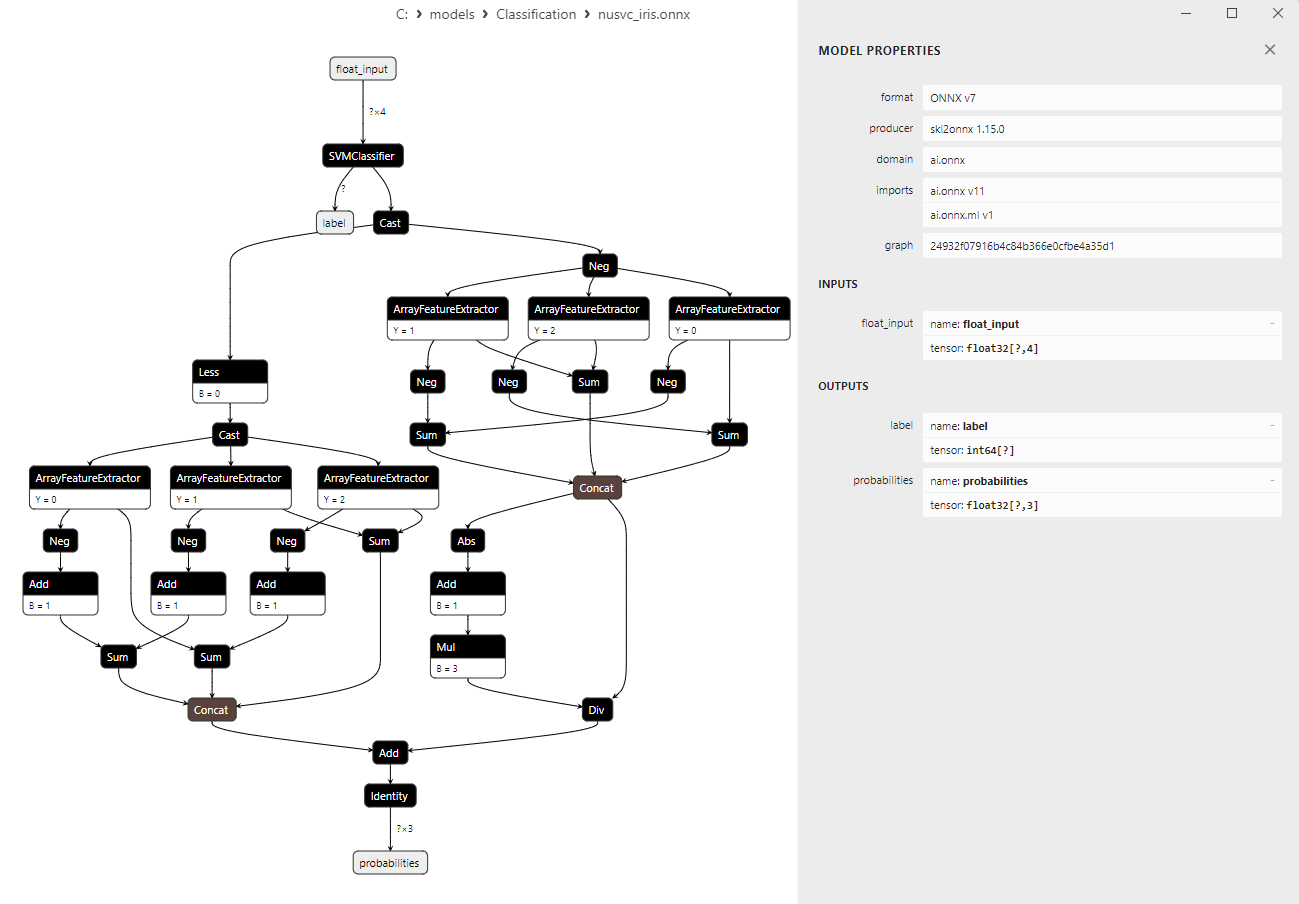

2.3.3.ONNX Representation of the NuSVC Classifier Model

Fig. 17. ONNX representation of the NuSVC Classifier model in Netron

2.4.Radius Neighbors Classifier

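Radius Neighbors Classifier is a machine learning method for classification tasks based on the proximity of objects. Unlike the classic K-Nearest Neighbors (K-NN) classifier, which selects a fixed number of nearest neighbors, Radius Neighbors Classifier classifies an object using all training samples that lie within a specified radius of it.

Principles of Radius Neighbors Classifier:

- Determining the radius: The main parameter of Radius Neighbors Classifier is the radius, the maximum distance at which a training sample still counts as a neighbor of the object being classified.

- Finding the neighbors: The distance from each object to all objects in the training dataset is computed. Objects that lie within the specified radius are considered its neighbors.

- Voting: Radius Neighbors Classifier uses a majority vote among the neighbors to determine the class of an object. For example, if most of the neighbors belong to class A, the object is also assigned to class A.

Advantages of Radius Neighbors Classifier:

- Adaptability to data density: Radius Neighbors Classifier is suitable for tasks where the data density may vary across regions of the feature space.

- Ability to work with different class shapes: The method performs well in tasks where the classes have complex, nonlinear shapes.

- Suitability for data with outliers: Radius Neighbors Classifier is more robust to outliers than K-NN because it ignores neighbors that lie outside the specified radius.

Limitations of Radius Neighbors Classifier:

- Sensitivity to the choice of radius: Selecting the optimal radius is not trivial and requires tuning.

- Inefficiency on large datasets: For large datasets, computing the distances to all objects can be computationally expensive.

- Dependence on data density: The method may be less effective when the data density in the feature space is uneven.

Radius Neighbors Classifier is a valuable machine learning method for situations where the proximity of objects matters and the class shapes may be complex. It can be applied in many fields, including image analysis, natural language processing, and others.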
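The radius-based voting described in this section can be sketched in a few lines of plain Python. This is a simplified illustration, not scikit-learn's implementation: the function name `radius_neighbors_predict` and the tiny training set are made up, the distance is Euclidean, and every neighbor gets an equal vote.

```python
import math
from collections import Counter


def radius_neighbors_predict(X_train, y_train, x, radius):
    """Majority vote among training samples within `radius` of x.

    Returns None when no training sample lies inside the radius.
    """
    votes = Counter()
    for xi, yi in zip(X_train, y_train):
        if math.dist(xi, x) <= radius:
            votes[yi] += 1
    if not votes:
        return None
    return votes.most_common(1)[0][0]


# Tiny made-up training set: two features, two classes.
X_train = [[1.0, 1.0], [1.2, 0.9], [0.8, 1.1], [3.0, 3.0], [3.1, 2.9]]
y_train = [0, 0, 0, 1, 1]

print(radius_neighbors_predict(X_train, y_train, [1.1, 1.0], radius=0.5))  # 0
print(radius_neighbors_predict(X_train, y_train, [3.0, 3.1], radius=0.5))  # 1
print(radius_neighbors_predict(X_train, y_train, [9.0, 9.0], radius=0.5))  # None
```

The third call shows the case that makes the radius hard to choose: a point with no neighbors inside the radius cannot be classified by voting. scikit-learn's RadiusNeighborsClassifier raises an error for such points by default unless the `outlier_label` parameter is set.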

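2.4.1.Code for Creating the Radius Neighbors Classifier Model

This code demonstrates training a Radius Neighbors Classifier model on the Iris dataset, exporting it to ONNX format, and performing classification with the ONNX model. It also evaluates the accuracy of both the original model and the ONNX model.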

    # Iris_RadiusNeighborsClassifier.py
    # The code demonstrates the process of training a Radius Neighbors model on the Iris dataset, exporting it to ONNX format, and making predictions using the ONNX model.
    # It also evaluates the accuracy of both the original model and the ONNX model.
    # Copyright 2023 MetaQuotes Ltd.
    # https://www.mql5.com

    # import necessary libraries
    from sklearn import datasets
    from sklearn.neighbors import RadiusNeighborsClassifier
    from sklearn.metrics import accuracy_score, classification_report
    from skl2onnx import convert_sklearn
    from skl2onnx.common.data_types import FloatTensorType
    import onnxruntime as ort
    import numpy as np
    from sys import argv

    # define the path for saving the model
    data_path = argv[0]
    last_index = data_path.rfind("\\") + 1
    data_path = data_path[0:last_index]

    # load the Iris dataset
    iris = datasets.load_iris()
    X = iris.data
    y = iris.target

    # create a Radius Neighbors Classifier model
    radius_model = RadiusNeighborsClassifier(radius=1.0)

    # train the model on the entire dataset
    radius_model.fit(X, y)

    # predict classes for the entire dataset
    y_pred = radius_model.predict(X)

    # evaluate the model's accuracy
    accuracy = accuracy_score(y, y_pred)
    print("Accuracy of Radius Neighbors Classifier model:", accuracy)

    # display the classification report
    print("\nClassification Report:\n", classification_report(y, y_pred))

    # define the input data type
    initial_type = [('float_input', FloatTensorType([None, X.shape[1]]))]

    # export the model to ONNX format with float data type
    onnx_model = convert_sklearn(radius_model, initial_types=initial_type, target_opset=12)

    # save the model to a file
    onnx_filename = data_path + "radius_neighbors_iris.onnx"
    with open(onnx_filename, "wb") as f:
        f.write(onnx_model.SerializeToString())

    # print model path
    print(f"Model saved to {onnx_filename}")

    # load the ONNX model and make predictions
    onnx_session = ort.InferenceSession(onnx_filename)
    input_name = onnx_session.get_inputs()[0].name
    output_name = onnx_session.get_outputs()[0].name

    # display information about input tensors in ONNX
    print("\nInformation about input tensors in ONNX:")
    for i, input_tensor in enumerate(onnx_session.get_inputs()):
        print(f"{i + 1}. Name: {input_tensor.name}, Data Type: {input_tensor.type}, Shape: {input_tensor.shape}")

    # display information about output tensors in ONNX
    print("\nInformation about output tensors in ONNX:")
    for i, output_tensor in enumerate(onnx_session.get_outputs()):
        print(f"{i + 1}. Name: {output_tensor.name}, Data Type: {output_tensor.type}, Shape: {output_tensor.shape}")

    # convert data to floating-point format (float32)
    X_float32 = X.astype(np.float32)

    # predict classes for the entire dataset using ONNX
    y_pred_onnx = onnx_session.run([output_name], {input_name: X_float32})[0]

    # evaluate the accuracy of the ONNX model
    accuracy_onnx = accuracy_score(y, y_pred_onnx)
    print("\nAccuracy of Radius Neighbors Classifier model in ONNX format:", accuracy_onnx)

Output of the Iris_RadiusNeighborsClassifier.py script:

    Python
    Python  Classification Report:
    Python                 precision    recall  f1-score   support
    Python
    Python             0       1.00      1.00      1.00        50
    Python             1       0.94      0.98      0.96        50
    Python             2       0.98      0.94      0.96        50
    Python
    Python      accuracy                           0.97       150
    Python     macro avg       0.97      0.97      0.97       150
    Python  weighted avg       0.97      0.97      0.97       150
    Python
    Python  Model saved to C:\Users\user\AppData\Roaming\MetaQuotes\Terminal\D0E8209F77C8CF37AD8BF550E51FF075\MQL5\Scripts\radius_neighbors_iris.onnx
    Python
    Python  Information about input tensors in ONNX:
    Python  1. Name: float_input, Data Type: tensor(float), Shape: [None, 4]
    Python
    Python  Information about output tensors in ONNX:
    Python  1. Name: label, Data Type: tensor(int64), Shape: [None]
    Python  2. Name: probabilities, Data Type: tensor(float), Shape: [None, 3]
    Python
    Python  Accuracy of Radius Neighbors Classifier model in ONNX format: 0.9733333333333334

The accuracy of the original model and the accuracy of the model exported to ONNX format are identical.
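Equal accuracy does not by itself guarantee that the two models agree on every sample, so it can be worth comparing the predicted labels element by element. The following is a minimal sketch, assuming two equal-length label sequences such as `y_pred` and `y_pred_onnx` from the script above. The helper name `prediction_agreement` and the sample labels are hypothetical and are not part of the original script.

```python
def prediction_agreement(labels_a, labels_b):
    """Return (fraction of matching labels, indices where the labels differ)."""
    if len(labels_a) != len(labels_b):
        raise ValueError("label sequences must have the same length")
    if len(labels_a) == 0:
        raise ValueError("label sequences must not be empty")
    # Collect the positions at which the two models disagree.
    mismatches = [i for i, (a, b) in enumerate(zip(labels_a, labels_b)) if a != b]
    return 1.0 - len(mismatches) / len(labels_a), mismatches


# Hypothetical labels, standing in for y_pred and y_pred_onnx.
sklearn_labels = [0, 0, 1, 2, 2, 1]
onnx_labels = [0, 0, 1, 2, 1, 1]

agreement, differing = prediction_agreement(sklearn_labels, onnx_labels)
print(f"agreement={agreement:.4f}, differing indices={differing}")
```

An empty list of differing indices would indicate that the exported model reproduces the original predictions sample by sample, which is a stronger statement than matching accuracy alone.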

2.4.2. MQL5 Code for Working with the Radius Neighbors Classifier Model

    //+------------------------------------------------------------------+
    //|                               Iris_RadiusNeighborsClassifier.mq5 |
    //|                                  Copyright 2023, MetaQuotes Ltd. |
    //|                                             https://www.mql5.com |
    //+------------------------------------------------------------------+
    #property copyright "Copyright 2023, MetaQuotes Ltd."
    #property link      "https://www.mql5.com"
    #property version   "1.00"

    #include "iris.mqh"
    #resource "radius_neighbors_iris.onnx" as const uchar ExtModel[];

    //+------------------------------------------------------------------+
    //| Test IRIS dataset samples                                        |
    //+------------------------------------------------------------------+
    bool TestSamples(long model,float &input_data[][4], int &model_classes_id[])
      {
    //--- check number of input samples
       ulong batch_size=input_data.Range(0);
       if(batch_size==0)
          return(false);
    //--- prepare output array
       ArrayResize(model_classes_id,(int)batch_size);
    //--- set the input shape: [batch_size, number of features]
       ulong input_shape[]= { batch_size, input_data.Range(1)};
       OnnxSetInputShape(model,0,input_shape);
    //--- output 0: class labels, output 1: class probabilities
       int output1[];
       float output2[][3];
    //---
       ArrayResize(output1,(int)batch_size);
       ArrayResize(output2,(int)batch_size);
    //---
       ulong output_shape[]= {batch_size};
       OnnxSetOutputShape(model,0,output_shape);
    //---
       ulong output_shape2[]= {batch_size,3};
       OnnxSetOutputShape(model,1,output_shape2);
    //---
       bool res=OnnxRun(model,ONNX_DEBUG_LOGS,input_data,output1,output2);
    //--- classes are ready in output1[k];
       if(res)
         {
          for(int k=0; k<(int)batch_size; k++)
             model_classes_id[k]=output1[k];
         }
    //---
       return(res);
      }

    //+------------------------------------------------------------------+
    //| Test all samples from IRIS dataset (150)                         |
    //| Here we test all samples with batch=1, sample by sample          |
    //+------------------------------------------------------------------+
    bool TestAllIrisDataset(const long model,const string model_name,double &model_accuracy)
      {
       sIRISsample iris_samples[];
    //--- load dataset from file
       PrepareIrisDataset(iris_samples);
    //--- test
       int total_samples=ArraySize(iris_samples);
       if(total_samples==0)
         {
          Print("iris dataset not prepared");
          return(false);
         }
    //--- show dataset
       for(int k=0; k<total_samples; k++)
         {
          //PrintFormat("%d (%.2f,%.2f,%.2f,%.2f) class %d (%s)",iris_samples[k].sample_id,iris_samples[k].features[0],iris_samples[k].features[1],iris_samples[k].features[2],iris_samples[k].features[3],iris_samples[k].class_id,iris_samples[k].class_name);
         }
    //--- array for output classes
       int model_output_classes_id[];
    //--- check all Iris dataset samples
       int correct_results=0;
       for(int k=0; k<total_samples; k++)
         {
          //--- input array
          float iris_sample_input_data[1][4];
          //--- prepare input data from kth iris sample dataset
          iris_sample_input_data[0][0]=(float)iris_samples[k].features[0];
          iris_sample_input_data[0][1]=(float)iris_samples[k].features[1];
          iris_sample_input_data[0][2]=(float)iris_samples[k].features[2];
          iris_sample_input_data[0][3]=(float)iris_samples[k].features[3];
          //--- run model
          bool res=TestSamples(model,iris_sample_input_data,model_output_classes_id);
          //--- check result
          if(res)
            {
             if(model_output_classes_id[0]==iris_samples[k].class_id)
               {
                correct_results++;
               }
             else
               {
                PrintFormat("model:%s sample=%d FAILED [class=%d, true class=%d] features=(%.2f,%.2f,%.2f,%.2f]",model_name,iris_samples[k].sample_id,model_output_classes_id[0],iris_samples[k].class_id,iris_samples[k].features[0],iris_samples[k].features[1],iris_samples[k].features[2],iris_samples[k].features[3]);
               }
            }
         }
       model_accuracy=1.0*correct_results/total_samples;
    //---
       PrintFormat("model:%s   correct results: %.2f%%",model_name,100*model_accuracy);
    //---
       return(true);
      }

    //+------------------------------------------------------------------+
    //| Here we test batch execution of the model                        |
    //+------------------------------------------------------------------+
    bool TestBatchExecution(const long model,const string model_name,double &model_accuracy)
      {
       model_accuracy=0;
    //--- array for output classes
       int model_output_classes_id[];
       int correct_results=0;
       int total_results=0;
       bool res=false;

    //--- run batch with 3 samples
       float input_data_batch3[3][4]=
         {
            {5.1f,3.5f,1.4f,0.2f}, // iris dataset sample id=1, Iris-setosa
            {6.3f,2.5f,4.9f,1.5f}, // iris dataset sample id=73, Iris-versicolor
            {6.3f,2.7f,4.9f,1.8f}  // iris dataset sample id=124, Iris-virginica
         };
       int correct_classes_batch3[3]= {0,1,2};
    //--- run model
       res=TestSamples(model,input_data_batch3,model_output_classes_id);
       if(res)
         {
          //--- check result
          for(int j=0; j<ArraySize(model_output_classes_id); j++)
            {
             //--- check result
             if(model_output_classes_id[j]==correct_classes_batch3[j])
                correct_results++;
             else
               {
                PrintFormat("model:%s FAILED [class=%d, true class=%d] features=(%.2f,%.2f,%.2f,%.2f)",model_name,model_output_classes_id[j],correct_classes_batch3[j],input_data_batch3[j][0],input_data_batch3[j][1],input_data_batch3[j][2],input_data_batch3[j][3]);
               }
             total_results++;
            }
         }
       else
          return(false);

    //--- run batch with 10 samples
       float input_data_batch10[10][4]=
         {
            {5.5f,3.5f,1.3f,0.2f}, // iris dataset sample id=37 (Iris-setosa)
            {4.9f,3.1f,1.5f,0.1f}, // iris dataset sample id=38 (Iris-setosa)
            {4.4f,3.0f,1.3f,0.2f}, // iris dataset sample id=39 (Iris-setosa)
            {5.0f,3.3f,1.4f,0.2f}, // iris dataset sample id=50 (Iris-setosa)
            {7.0f,3.2f,4.7f,1.4f}, // iris dataset sample id=51 (Iris-versicolor)
            {6.4f,3.2f,4.5f,1.5f}, // iris dataset sample id=52 (Iris-versicolor)
            {6.3f,3.3f,6.0f,2.5f}, // iris dataset sample id=101 (Iris-virginica)
            {5.8f,2.7f,5.1f,1.9f}, // iris dataset sample id=102 (Iris-virginica)
            {7.1f,3.0f,5.9f,2.1f}, // iris dataset sample id=103 (Iris-virginica)
            {6.3f,2.9f,5.6f,1.8f}  // iris dataset sample id=104 (Iris-virginica)
         };
    //--- correct classes for all 10 samples in the batch
       int correct_classes_batch10[10]= {0,0,0,0,1,1,2,2,2,2};
    //--- run model
       res=TestSamples(model,input_data_batch10,model_output_classes_id);
    //--- check result
       if(res)
         {
          for(int j=0; j<ArraySize(model_output_classes_id); j++)
            {
             if(model_output_classes_id[j]==correct_classes_batch10[j])
                correct_results++;
             else
               {
                double f1=input_data_batch10[j][0];
                double f2=input_data_batch10[j][1];
                double f3=input_data_batch10[j][2];
                double f4=input_data_batch10[j][3];
                PrintFormat("model:%s FAILED [class=%d, true class=%d] features=(%.2f,%.2f,%.2f,%.2f)",model_name,model_output_classes_id[j],correct_classes_batch10[j],input_data_batch10[j][0],input_data_batch10[j][1],input_data_batch10[j][2],input_data_batch10[j][3]);
               }
             total_results++;
            }
         }
       else
          return(false);
    //--- calculate accuracy (multiply by 1.0 to avoid integer division of two int values)
       model_accuracy=1.0*correct_results/total_results;
    //---
       return(res);
      }

    //+------------------------------------------------------------------+
    //| Script program start function                                    |
    //+------------------------------------------------------------------+
    int OnStart(void)
      {
       string model_name="RadiusNeighborsClassifier";
    //--- create the model from the embedded resource
       long model=OnnxCreateFromBuffer(ExtModel,ONNX_DEFAULT);
       if(model==INVALID_HANDLE)
         {
          PrintFormat("model_name=%s OnnxCreate error %d for",model_name,GetLastError());
         }
       else
         {
          //--- test all dataset
          double model_accuracy=0;
          //-- test sample by sample execution for all Iris dataset
          if(TestAllIrisDataset(model,model_name,model_accuracy))
             PrintFormat("model=%s all samples accuracy=%f",model_name,model_accuracy);
          else
             PrintFormat("error in testing model=%s ",model_name);
          //--- test batch execution for several samples
          if(TestBatchExecution(model,model_name,model_accuracy))
             PrintFormat("model=%s batch test accuracy=%f",model_name,model_accuracy);
          else
             PrintFormat("error in testing model=%s ",model_name);
          //--- release model
          OnnxRelease(model);
         }
       return(0);
      }
    //+------------------------------------------------------------------+

Output:

    Iris_RadiusNeighborsClassifier (EURUSD,H1)   model:RadiusNeighborsClassifier  sample=78 FAILED [class=2, true class=1] features=(6.70,3.00,5.00,1.70]
    Iris_RadiusNeighborsClassifier (EURUSD,H1)   model:RadiusNeighborsClassifier  sample=107 FAILED [class=1, true class=2] features=(4.90,2.50,4.50,1.70]
    Iris_RadiusNeighborsClassifier (EURUSD,H1)   model:RadiusNeighborsClassifier  sample=127 FAILED [class=1, true class=2] features=(6.20,2.80,4.80,1.80]
    Iris_RadiusNeighborsClassifier (EURUSD,H1)   model:RadiusNeighborsClassifier  sample=139 FAILED [class=1, true class=2] features=(6.00,3.00,4.80,1.80]
    Iris_RadiusNeighborsClassifier (EURUSD,H1)   model:RadiusNeighborsClassifier   correct results: 97.33%
    Iris_RadiusNeighborsClassifier (EURUSD,H1)   model=RadiusNeighborsClassifier all samples accuracy=0.973333
    Iris_RadiusNeighborsClassifier (EURUSD,H1)   model=RadiusNeighborsClassifier batch test accuracy=1.000000

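The Radius Neighbors Classifier model reached an accuracy of 97.33%, with 4 classification errors (samples 78, 107, 127, and 139).

The accuracy of the exported ONNX model on the full Iris dataset is 97.33%, which matches the accuracy of the original model.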
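The reported figure follows directly from the error count in the log: 4 misclassified samples out of 150 leave 146 correct predictions. A short arithmetic check, using only the numbers shown above (the variable names are illustrative):

```python
total_samples = 150                   # size of the full Iris dataset
misclassified = [78, 107, 127, 139]   # sample ids reported as FAILED in the log

correct = total_samples - len(misclassified)
accuracy = correct / total_samples

print(f"{correct}/{total_samples} = {accuracy:.6f} ({100 * accuracy:.2f}%)")
```

The value printed with six decimals agrees with the `all samples accuracy=0.973333` line of the MQL5 output and with the 0.9733333333333334 reported by the Python script.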

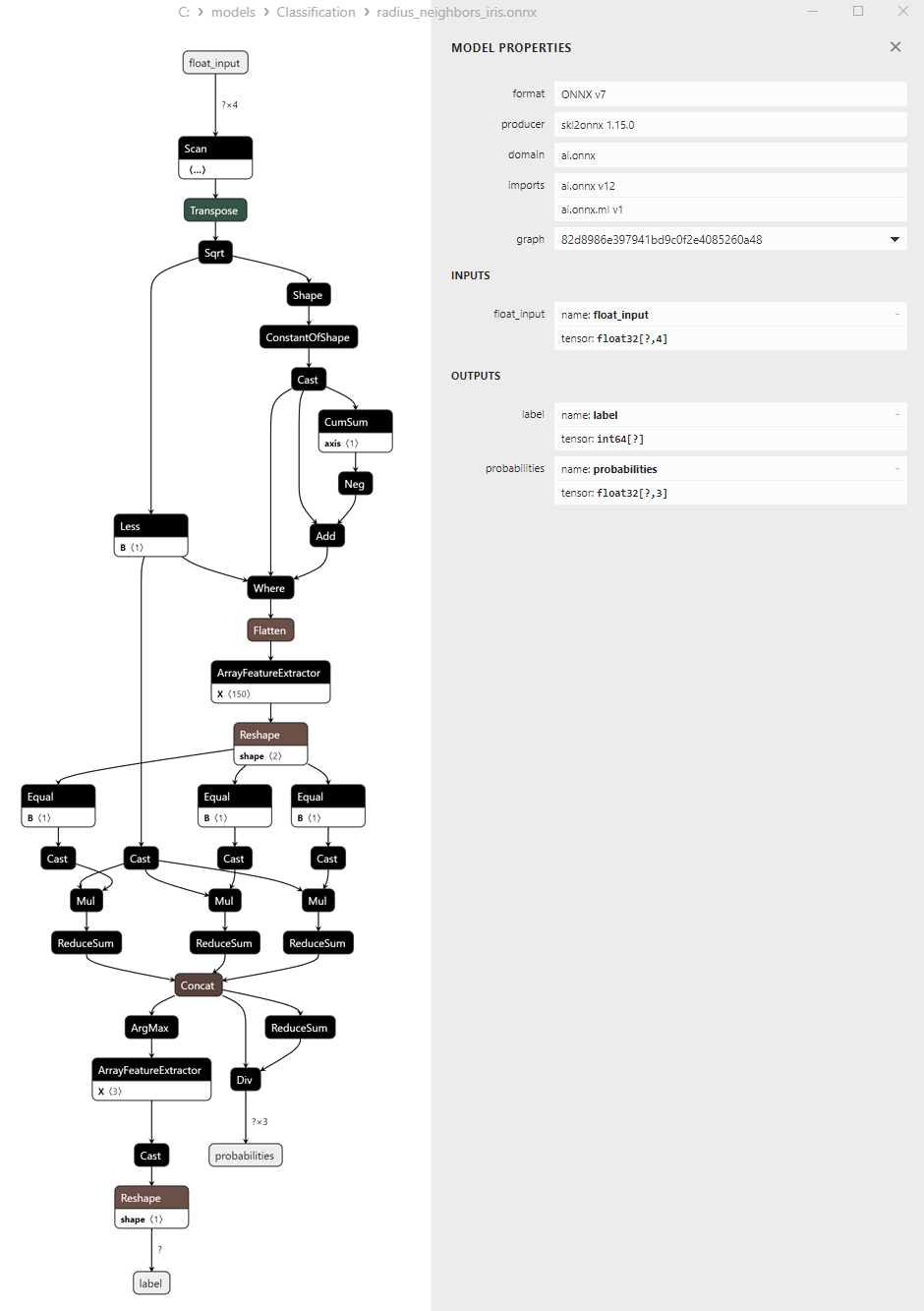

2.4.3. Radius Neighbors Classifier 模型的 ONNX 表示

图 18.Netron 中 Radius Neighbors Classifier 的 ONNX 表示

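Note on the RidgeClassifier and RidgeClassifierCV Methods

RidgeClassifier and RidgeClassifierCV are two classification methods based on Ridge Regression, but they differ in how their parameters are tuned and in whether hyperparameters are selected automatically:

RidgeClassifier:

- RidgeClassifier is a classification method based on Ridge Regression, used for binary and multiclass classification tasks.

- For multiclass classification, RidgeClassifier converts the task into several binary tasks (one-vs-all) and builds a model for each of them.

- The regularization parameter alpha must be tuned manually by the user, which means you have to choose the best alpha value through experimentation or by analyzing validation data.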
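The one-vs-all idea behind a ridge-based classifier can be sketched with plain NumPy. This is an illustrative sketch under stated assumptions, not scikit-learn's actual implementation: each class gets a regression target of +1 for its own samples and -1 for all others, a ridge regression is solved in closed form for every class, and the predicted class is the one with the largest regression output. The function names `fit_ridge_ovr` and `predict_ridge_ovr` and the toy data are hypothetical.

```python
import numpy as np


def fit_ridge_ovr(X, y, alpha=1.0):
    """Fit one ridge regression per class on {-1, +1} targets (one-vs-all)."""
    classes = np.unique(y)
    # Target matrix: +1 where the sample belongs to the class, -1 otherwise.
    Y = np.where(y[:, None] == classes[None, :], 1.0, -1.0)
    # Center features and targets so that the intercept is not penalized.
    x_mean, y_mean = X.mean(axis=0), Y.mean(axis=0)
    Xc, Yc = X - x_mean, Y - y_mean
    # Closed-form ridge solution: (Xc^T Xc + alpha * I) W = Xc^T Yc.
    W = np.linalg.solve(Xc.T @ Xc + alpha * np.eye(X.shape[1]), Xc.T @ Yc)
    b = y_mean - x_mean @ W
    return classes, W, b


def predict_ridge_ovr(model, X):
    """Pick the class whose regression output is largest."""
    classes, W, b = model
    return classes[np.argmax(X @ W + b, axis=1)]


# Toy data (hypothetical): three well-separated 2-D clusters.
X = np.array([[0.0, 0.0], [0.2, 0.1],
              [5.0, 5.0], [5.1, 4.9],
              [0.0, 5.0], [0.1, 5.2]])
y = np.array([0, 0, 1, 1, 2, 2])

model = fit_ridge_ovr(X, y, alpha=1.0)
pred = predict_ridge_ovr(model, X)
print(pred)
```

In this sketch `alpha` is passed in by hand, which mirrors the manual tuning described above: a larger value shrinks the weights more strongly, and a suitable value has to be found by trying several candidates on validation data.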

RidgeClassifierCV: