Machine learning in trading: theory, models, practice and algo-trading - page 3336

Another funny fact: I assumed this was simply overfitting, so I decided to check at which indices the class change occurred. I expected them to be near the end, which would have been a good illustration of overfitting.

In fact, it turned out like this

On the test sample

It turns out that it is the first thousand leaves (in the order in which they were added to the model) that are mostly unstable!

Surprised.

On the exam sample

For all the other trees, the target is the prediction error, i.e. (Y - Pred), and it is additionally scaled by a coefficient eta = 0.1...0.001. The influence of the leaves of these trees is insignificant; they only correct. That is what you have shown (their insignificance).
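To make the point about residual targets concrete, here is a minimal sketch of boosting with one-split trees (stumps) and squared error. It is not CatBoost's implementation: the helper names `fit_stump`, `predict_stump` and `boost`, the toy data, and the zero starting prediction are all assumptions made for illustration.

```python
def fit_stump(xs, targets):
    """Fit a one-split regression tree that minimises squared error."""
    best = None
    # Candidate thresholds exclude the largest x so the right side is never empty.
    for threshold in sorted(set(xs))[:-1]:
        left = [t for x, t in zip(xs, targets) if x <= threshold]
        right = [t for x, t in zip(xs, targets) if x > threshold]
        left_value = sum(left) / len(left)
        right_value = sum(right) / len(right)
        error = (sum((t - left_value) ** 2 for t in left)
                 + sum((t - right_value) ** 2 for t in right))
        if best is None or error < best[0]:
            best = (error, threshold, left_value, right_value)
    _, threshold, left_value, right_value = best
    return threshold, left_value, right_value


def predict_stump(stump, x):
    threshold, left_value, right_value = stump
    return left_value if x <= threshold else right_value


def boost(xs, ys, n_trees=50, eta=0.1):
    """Each tree is fitted to the current residuals (Y - Pred), scaled by eta."""
    preds = [0.0] * len(xs)  # assumption: start from a zero prediction
    trees = []
    for _ in range(n_trees):
        residuals = [y - p for y, p in zip(ys, preds)]
        stump = fit_stump(xs, residuals)
        trees.append(stump)
        preds = [p + eta * predict_stump(stump, x) for p, x in zip(preds, xs)]
    return trees, preds


# Toy data (hypothetical): the label switches from 0 to 1 after x = 3.
xs = [1, 2, 3, 4, 5, 6]
ys = [0, 0, 0, 1, 1, 1]
trees, preds = boost(xs, ys)
first_leaf = trees[0][2]   # right-leaf value of the first tree
last_leaf = trees[-1][2]   # right-leaf value of the last tree
```

On this toy data the first tree sees the labels themselves, while each later tree sees a smaller residual, so its leaf values shrink, which matches the "they only correct" description.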

GPT to teach )

Ok, let's add the Envelopes indicator to our analysis. The Envelopes indicator represents lines above and below a moving average. They are usually at a fixed percentage distance from that moving average.
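As a rough illustration of that definition, the sketch below computes envelopes around a simple moving average. The function names, the choice of a simple (rather than exponential) average, and the sample prices are assumptions, not something taken from the GPT output.

```python
def sma(prices, period):
    """Simple moving average; one value per full window."""
    return [sum(prices[i - period + 1:i + 1]) / period
            for i in range(period - 1, len(prices))]


def envelopes(prices, period=14, deviation_pct=1.0):
    """Return (lower, middle, upper) bands at a fixed percentage from the SMA."""
    middle = sma(prices, period)
    factor = deviation_pct / 100.0
    upper = [m * (1 + factor) for m in middle]
    lower = [m * (1 - factor) for m in middle]
    return lower, middle, upper


# Hypothetical prices, chosen only to keep the arithmetic easy to follow.
prices = [100, 102, 104, 106]
lower, middle, upper = envelopes(prices, period=2, deviation_pct=10.0)
```

With a period of 2 the middle line is the average of each pair of neighbouring prices, and the bands sit 10% above and below it.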

Envelopes for the last month (November 2023):

Overall trend using RSI, Bollinger Bands and Envelopes:

Also, consider that signals from different indicators can be conflicting and it is important to analyse them together.

Let's continue with calculations and analysis.

You're counting boosting, aren't you?

You are quite right, we are talking about CatBoost!

There, only the first tree is trained on the original target labels.

For all the other trees, the target is the prediction error, i.e. (Y - Pred).

Indeed, this is what the theory suggests.

And also with a coefficient eta = 0.1...0.001

The "learning rate" coefficient, at least in CatBoost, is fixed for all trees.

The influence of the leaves of these trees is insignificant, they only correct. Which is what you have shown (their insignificance).

Can you actually explain how the leaf coefficients are arranged in CatBoost?

There are points I don't understand well.

However, I have demonstrated a change in "class" in the leaves, i.e. actually 40% of the leaves appeared to pull the totals the wrong way on the new data.

Can you actually explain how the leaf coefficients are arranged in CatBoost?

Would you like me to dig through the CatBoost code and give you the exact answer? I only dig up what I'm interested in. I don't use CatBoost.

Tutorial and simple boosting code here: https://habr.com/ru/companies/vk/articles/438562/

This is the first time I've heard of leaf coefficients - what are they?

For the record: on the separate test sample the count is 7467, and on exam it is 7177. There is also a considerable number of leaves with no activations at all, which I did not count right away.

This is the distribution of leaves that changed class by their value for the test sample

and this is exam.

And this is the breakdown into classes - there are three of them, the third one is "-1" - no activation.

For the train sample

For the test sample

For the exam sample
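The three-way breakdown can be sketched as below. The author's exact rule for assigning a class to a leaf is not given in the thread, so this is only one plausible reading: a leaf counts as class 1 on a sample if the share of target 1 among the rows it activates on exceeds the sample's overall share, as class 0 otherwise, and as -1 if it never activates. The function names and the toy data are hypothetical.

```python
def leaf_class(activations, labels):
    """Return 1, 0, or -1 (no activation) for one leaf on one sample."""
    hit_labels = [y for hit, y in zip(activations, labels) if hit]
    if not hit_labels:
        return -1  # the leaf never fired on this sample
    base_rate = sum(labels) / len(labels)
    leaf_rate = sum(hit_labels) / len(hit_labels)
    # Assumed rule: class 1 if the leaf beats the sample's base rate.
    return 1 if leaf_rate > base_rate else 0


def changed_class(train_class, new_class):
    """A leaf 'changed class' if it fired and its class differs from train."""
    return new_class != -1 and new_class != train_class


# Hypothetical activations of a single leaf on three samples.
train_cls = leaf_class([True, True, False, False], [1, 1, 0, 0])
test_cls = leaf_class([False, True, True, False], [1, 0, 0, 0])
exam_cls = leaf_class([False, False, False], [1, 0, 1])
```

Counting `changed_class(train_cls, test_cls)` over all leaves would give the kind of totals reported above, under the stated assumption about how the class is defined.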

In general, we can see that the leaf weights no longer correspond to the class logic. Below is the graph for the test sample: there is no clear direction.

In general, this method approximates anything, but it does not guarantee the quality of predictors.

In general, I assume that the distinct "bars" on the graph above are leaves that are very similar in where and how often they activate.

It is difficult to discuss what you do not know. Therefore, I can only be happy for your success. If I had such a method, I would use it :)

My method doesn't give such high-quality results yet, but it parallelizes well enough.

Have you ever wondered why this happens?

Testing speed of the model exported to native code (CatBoost)

And exported to ONNX

The internals of the two versions of the bot are almost identical, and the results are the same.

Would you like me to dig through the CatBoost code for you and give you an exact answer? I only dig into what I'm interested in. I don't use CatBoost.

I assumed you knew, but you don't. I didn't mean to burden you.

This is the first time I've heard of leaf coefficients - what are they?

Leaf values that are summed to form the Y coordinate of a function.

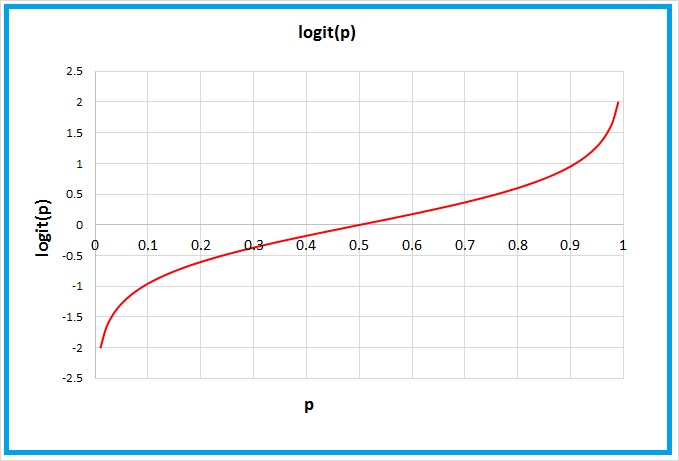
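A minimal sketch of how summed leaf values could turn into a class, assuming binary classification with a logistic (sigmoid) link and a 0.5 probability threshold. The function names and the leaf values are made up for illustration; this is not code taken from CatBoost.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def classify(leaf_values, threshold=0.5):
    """Sum the activated leaf values, squash with a sigmoid, then threshold."""
    raw_score = sum(leaf_values)
    probability = sigmoid(raw_score)
    predicted_class = int(probability >= threshold)
    return raw_score, probability, predicted_class


# Hypothetical values of the leaves one row falls into, one per tree.
raw_up, prob_up, class_up = classify([0.4, -0.1, 0.2])
raw_down, prob_down, class_down = classify([-0.3, 0.1])
```

Under this assumption a positive sum of leaf values gives a probability above 0.5 and therefore class 1, while a negative sum gives class 0, so a leaf with a negative value pulls the row toward class 0.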

Greater than or equal to 0.5 in X means the default class is "1" in CatBoost.

Have you ever wondered why this happens?

It's actually an erroneous pattern in the leaf. There could be a number of reasons why it's like that.

Or do you have a specific, unambiguous answer?